How to install Fedora and MicroShift on the NVIDIA Jetson AGX Xavier

On Dec 6, 2021, NVIDIA released the new UEFI/ACPI Experimental Firmware 1.1.2 for Jetson AGX Xavier and Jetson Xavier NX.

In this blog post, I will:

- flash the Jetson AGX Xavier with the UEFI/ACPI firmware

- install Fedora Linux aarch64 on the Jetson AGX Xavier

- deploy MicroShift on the Jetson AGX Xavier

- create a Python Flask application

- build an image based on ubi8 for arm64

- publish the image to the Quay registry

- deploy the image on MicroShift

MicroShift is a research project that explores how OpenShift and Kubernetes can be optimized for small form factor and edge computing. MicroShift is built from OKD, the Kubernetes distribution by the OpenShift community.

MicroShift’s design goals:

- make frugal use of system resources (CPU, memory, network, storage, etc.)

- tolerate severe networking constraints

- update securely, safely, speedily, and seamlessly (without disrupting workloads)

- build on and integrate cleanly with edge-optimized OSes like Fedora IoT and RHEL for Edge

- provide a consistent development and management experience with standard OpenShift

Peter Robinson’s post has more information on the Jetson UEFI enablement work in progress. This blog post does not cover enabling the 512-core NVIDIA GPU, which is not yet available in Fedora.

The new Jetson AGX Orin is announced for Q1 2022; until then, we will use the NVIDIA Jetson Xavier AGX Developer Kit. I bought this model in 2019. It has only 16GB of RAM and an 8-core Carmel ARM64 CPU (the updated model has 32GB of RAM).

Install NVMe M.2 SSD

MicroShift requires only 1GB of free storage space, but to get more storage for a real test, I’m first adding a Sabrent 1TB Rocket NVMe PCIe M.2 2280 Internal SSD High Performance Solid State Drive (SB-ROCKET-1TB):

When you open the Xavier, take care not to break the small black connection cable.

Consoles

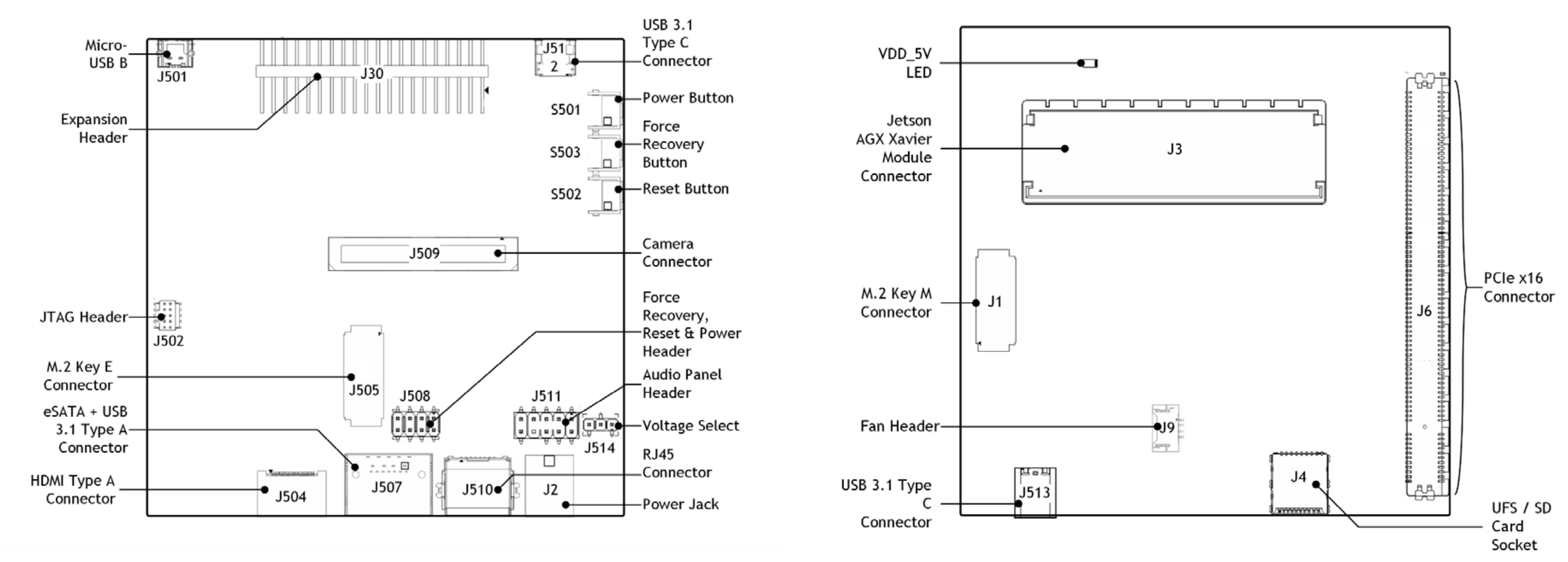

To get UART console access, connect a cable between the Micro USB connector (J501) and a USB port on your laptop:

We will use Minicom, a text-based modem control and terminal emulator program for Unix-like operating systems.

We will use the UART console to set up the boot device and to follow the flash process.

UART console from a Linux laptop

If you are using a Linux laptop, a new Future Technology Devices International device appears:

egallen@laptop:~$ lsusb | grep -i future

Bus 002 Device 035: ID 0403:6011 Future Technology Devices International, Ltd FT4232H Quad HS USB-UART/FIFO IC

You should be able to see the ttyUSB devices:

egallen@laptop:~$ ls /dev/ttyUSB*

/dev/ttyUSB0 /dev/ttyUSB1 /dev/ttyUSB2 /dev/ttyUSB3

Install Minicom for RHEL/CentOS/Fedora/Rocky Linux:

egallen@laptop:~$ sudo dnf install minicom -y

Install Minicom for Ubuntu:

egallen@laptop:~$ sudo apt install minicom -y

Connect with Minicom:

egallen@laptop:~$ sudo minicom -D /dev/ttyUSB3 -8 -b 115200

Jetson UEFI firmware (version v1.1.2-29165693 built on 11/19/21-12:40:51)

Press ESCAPE for boot options ...........

To quit Minicom, press Ctrl+A, then X.
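
If several ttyUSB devices are present, picking the right one by hand is easy to get wrong. The following is a minimal sketch, assuming (as on my laptop) that the UART console is the highest-numbered ttyUSB port; `pick_console_port` is a hypothetical helper, and other USB serial adapters plugged into the same machine would shift the numbering:

```python
import re


def pick_console_port(devices):
    """Return the highest-numbered /dev/ttyUSB* device, or None if there is none."""
    numbered = []
    for dev in devices:
        match = re.fullmatch(r"/dev/ttyUSB(\d+)", dev)
        if match:
            # Sort on the numeric suffix so ttyUSB10 ranks above ttyUSB9.
            numbered.append((int(match.group(1)), dev))
    return max(numbered)[1] if numbered else None


# Sample copied from the `ls /dev/ttyUSB*` output above; on a real laptop
# you could pass glob.glob("/dev/ttyUSB*") instead.
sample = ["/dev/ttyUSB0", "/dev/ttyUSB1", "/dev/ttyUSB2", "/dev/ttyUSB3"]
print(pick_console_port(sample))
```

The returned path is the value to pass to the `-D` option of minicom.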

UART console from a macOS laptop

When the console cable is plugged into a Mac, you should see these devices:

egallen@macbook ~ % ls /dev/cu.usbserial*

/dev/cu.usbserial-14500 /dev/cu.usbserial-14501 /dev/cu.usbserial-14502 /dev/cu.usbserial-14503

Install the Homebrew package manager for macOS, if you don’t have it: https://brew.sh

You can now install minicom with the brew command:

egallen@macbook ~ % brew install minicom

==> Downloading https://ghcr.io/v2/homebrew/core/minicom/blobs/sha256:ac0a7c58888a3eeb78bbc24d8a47

==> Downloading from https://pkg-containers.githubusercontent.com/ghcr1/blobs/sha256:ac0a7c58888a3

...

Connect with Minicom to the device whose name ends in 3 (usbserial-*3):

egallen@macbook ~ % minicom -D /dev/cu.usbserial-*3 -8 -b 115200

The Minicom meta key on macOS is Esc, not Ctrl, so press Esc, then X to leave Minicom:

+----------------------+

| Leave Minicom? |

| Yes No |

+----------------------+

Local console

During the Fedora Anaconda installation, we will use a small Rii 2.4G Mini Wireless Keyboard with a touchpad mouse:

The 2.4G USB dongle is plugged into the USB type C port (J513 port).

Download NVIDIA UEFI resources

We will put our files in a xavier folder:

egallen@laptop:~$ mkdir xavier

egallen@laptop:~$ cd xavier/

Download Linux for Tegra (L4T) R32.6.1 release:

egallen@laptop:~/xavier$ wget "https://developer.nvidia.com/embedded/l4t/r32_release_v6.1/t186/jetson_linux_r32.6.1_aarch64.tbz2"

Download the UEFI firmware (1.1.2 at the time of writing) (Readme):

egallen@laptop:~/xavier$ wget "https://developer.nvidia.com/assets/embedded/downloads/nvidia_uefiacpi_experimental_firmware/nvidia-l4t-jetson-uefi-r32.6.1-20211119125725.tbz2"

This is a standard UEFI firmware based on the open source TianoCore/EDK2 reference firmware. It allows booting in either ACPI or Device-Tree mode.

Extract the driver package archive:

egallen@laptop:~/xavier$ tar xjf jetson_linux_r32.6.1_aarch64.tbz2

Extract the nvidia-l4t-jetson-uefi-r32.6.1-20211119125725.tbz2 package over the top of the extracted driver package, then go to the Linux_for_Tegra subdirectory:

egallen@laptop:~/xavier$ tar xpf nvidia-l4t-jetson-uefi-r32.6.1-20211119125725.tbz2

egallen@laptop:~/xavier$ cd Linux_for_Tegra

egallen@laptop:~/xavier/Linux_for_Tegra$ ls

apply_binaries.sh jetson-xavier-nx-uefi-sd.conf p2972-0000-devkit-maxn.conf

bootloader jetson-xavier-slvs-ec.conf p2972-0000-devkit-slvs-ec.conf

build_l4t_bup.sh jetson-xavier-uefi-acpi.conf p2972-0000-uefi-acpi.conf

clara-agx-xavier-devkit.conf jetson-xavier-uefi-acpi-min.conf p2972-0000-uefi-acpi-min.conf

e3900-0000+p2888-0004-b00.conf jetson-xavier-uefi.conf p2972-0000-uefi.conf

flash.sh jetson-xavier-uefi-min.conf p2972-0000-uefi-min.conf

jetson-agx-xavier-devkit.conf kernel p2972-as-galen-8gb.conf

jetson-agx-xavier-ind-noecc.conf l4t_generate_soc_bup.sh p2972.conf

jetson-agx-xavier-industrial.conf l4t_sign_image.sh p3449-0000+p3668-0000-qspi-sd.conf

jetson-agx-xavier-industrial-mxn.conf LICENSE.sce_t194 p3449-0000+p3668-0001-qspi-emmc.conf

jetson-tx2-4GB.conf nvautoflash.sh p3509-0000+p3636-0001.conf

jetson-tx2-as-4GB.conf NVIDIA_Jetson_Platform_Pre-Release_License.pdf p3509-0000+p3668-0000-qspi.conf

jetson-tx2.conf nvmassflashgen.sh p3509-0000+p3668-0000-qspi-sd.conf

jetson-tx2-devkit-4gb.conf nvsdkmanager_flash.sh p3509-0000+p3668-0000-uefi-acpi-qspi.conf

jetson-tx2-devkit.conf nv_tegra p3509-0000+p3668-0000-uefi-acpi-qspi-sd.conf

jetson-tx2-devkit-tx2i.conf nv_tools p3509-0000+p3668-0000-uefi-qspi.conf

jetson-tx2i.conf p2597-0000+p3310-1000-as-p3489-0888.conf p3509-0000+p3668-0000-uefi-qspi-sd.conf

jetson-xavier-as-8gb.conf p2597-0000+p3310-1000.conf p3509-0000+p3668-0001-qspi-emmc.conf

jetson-xavier-as-xavier-nx.conf p2597-0000+p3489-0000-ucm1.conf p3509-0000+p3668-0001-uefi-acpi-qspi-emmc.conf

jetson-xavier.conf p2597-0000+p3489-0000-ucm2.conf p3509-0000+p3668-0001-uefi-qspi-emmc.conf

jetson-xavier-maxn.conf p2597-0000+p3489-0888.conf p3636.conf.common

jetson-xavier-nx-devkit.conf p2771-0000.conf.common p3668.conf.common

jetson-xavier-nx-devkit-emmc.conf p2771-0000-dsi-hdmi-dp.conf README_Autoflash.txt

jetson-xavier-nx-devkit-qspi.conf p2822-0000+p2888-0001.conf README_Massflash.txt

jetson-xavier-nx-devkit-tx2-nx.conf p2822-0000+p2888-0004.conf rootfs

jetson-xavier-nx-uefi-acpi.conf p2822-0000+p2888-0008.conf source

jetson-xavier-nx-uefi-acpi-emmc.conf p2822-0000+p2888-0008-maxn.conf source_sync.sh

jetson-xavier-nx-uefi-acpi-sd.conf p2822-0000+p2888-0008-noecc.conf tools

jetson-xavier-nx-uefi.conf p2822+p2888-0001-as-p3668-0001.conf

jetson-xavier-nx-uefi-emmc.conf p2972-0000.conf.common

Flash the Jetson AGX Xavier

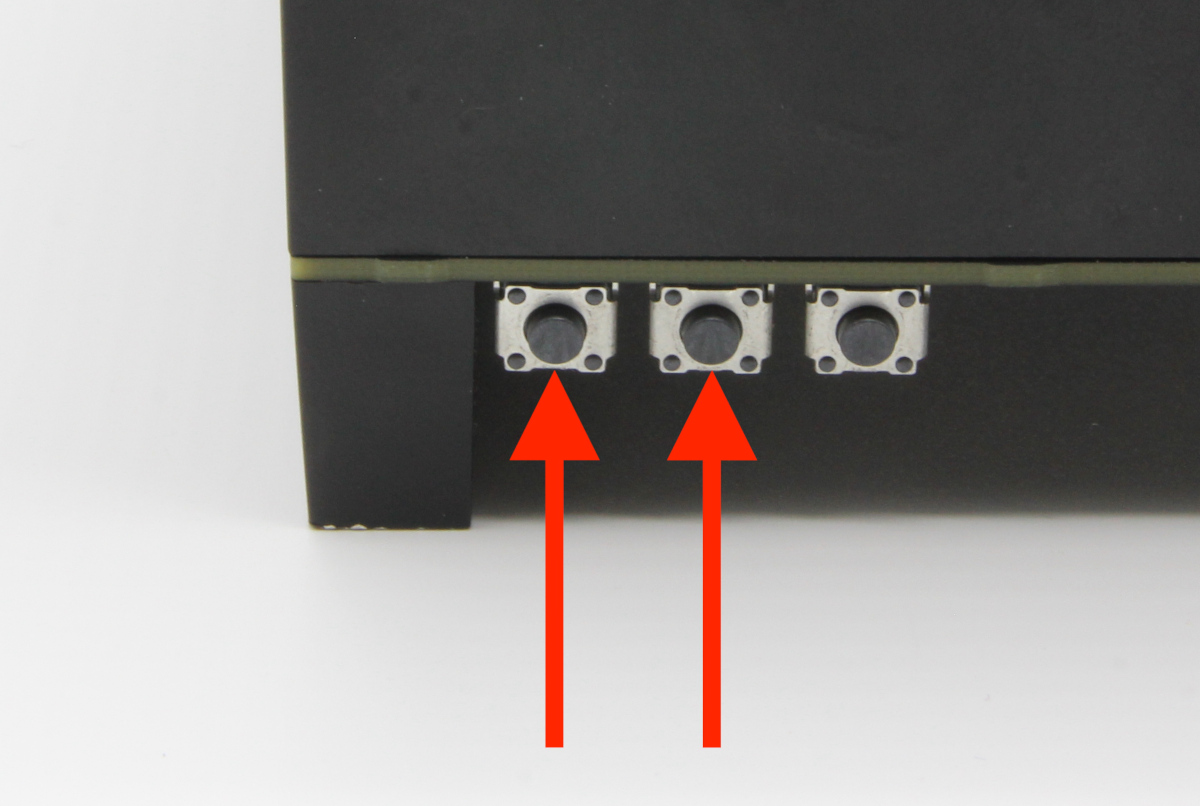

Boot your Jetson Xavier AGX into Force Recovery Mode (RCM):

You can see the USB device:

egallen@laptop:~/xavier/Linux_for_Tegra$ lsusb | grep -i nvidia

Bus 002 Device 014: ID 0955:7019 NVidia Corp.
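
Before flashing, it can help to confirm from a script that the board is really visible over USB. This is a minimal sketch that parses `lsusb` text for a vendor ID; `find_usb_devices` is a hypothetical helper, the vendor ID 0955 and product ID 7019 are simply the values my board reported above, and other Jetson modules may report a different product ID:

```python
import re

# Matches lines such as "Bus 002 Device 014: ID 0955:7019 NVidia Corp."
LSUSB_LINE = re.compile(
    r"Bus (?P<bus>\d+) Device (?P<device>\d+): "
    r"ID (?P<vendor>[0-9a-f]{4}):(?P<product>[0-9a-f]{4})\s*(?P<name>.*)"
)


def find_usb_devices(lsusb_output, vendor_id):
    """Return (vendor, product, name) tuples for every device of one vendor."""
    found = []
    for line in lsusb_output.splitlines():
        match = LSUSB_LINE.match(line.strip())
        if match and match.group("vendor") == vendor_id.lower():
            found.append(
                (match.group("vendor"), match.group("product"), match.group("name"))
            )
    return found


# Sample lines copied from the lsusb outputs shown in this post.
sample = """\
Bus 002 Device 035: ID 0403:6011 Future Technology Devices International, Ltd FT4232H Quad HS USB-UART/FIFO IC
Bus 002 Device 014: ID 0955:7019 NVidia Corp.
"""
print(find_usb_devices(sample, "0955"))
```

An empty list would suggest that the board is not in Force Recovery Mode or that the USB cable is not connected.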

We are flashing the Jetson AGX Xavier with the Device-Tree firmware:

egallen@laptop:~/xavier/Linux_for_Tegra$ sudo ./flash.sh jetson-xavier-uefi-min external

###############################################################################

# L4T BSP Information:

# R32 , REVISION: 6.1

###############################################################################

# Target Board Information:

# Name: jetson-xavier-uefi-min, Board Family: t186ref, SoC: Tegra 194,

# OpMode: production, Boot Authentication: NS,

# Disk encryption: disabled ,

###############################################################################

copying soft_fuses(/home/egallen/xavier/Linux_for_Tegra/bootloader/t186ref/BCT/tegra194-mb1-soft-fuses-l4t.cfg)... done.

./tegraflash.py --chip 0x19 --applet "/home/egallen/xavier/Linux_for_Tegra/bootloader/mb1_t194_prod.bin" --skipuid --soft_fuses tegra194-mb1-soft-fuses-l4t.cfg --bins "mb2_applet nvtboot_applet_t194.bin" --cmd "dump eeprom boardinfo cvm.bin;reboot recovery"

Welcome to Tegra Flash

version 1.0.0

...

*** The target t186ref has been flashed successfully. ***

Make the target filesystem available to the device and reset the board to boot from external external.

(Full log)
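
The first argument of flash.sh is a board configuration name taken from the .conf files listed earlier. As a rough sketch, the four Jetson AGX Xavier UEFI names can be composed from two switches; `flash_board_config` is a hypothetical helper, the reading of "no -acpi suffix" as Device-Tree is inferred from the command used above, and this post does not describe what the -min variants contain:

```python
def flash_board_config(acpi=False, minimal=False):
    """Build a Jetson AGX Xavier UEFI board configuration name for flash.sh."""
    name = "jetson-xavier-uefi"
    if acpi:
        name += "-acpi"
    if minimal:
        name += "-min"
    return name


# Names copied from the Linux_for_Tegra listing above (without the .conf suffix).
KNOWN_CONFIGS = {
    "jetson-xavier-uefi",
    "jetson-xavier-uefi-min",
    "jetson-xavier-uefi-acpi",
    "jetson-xavier-uefi-acpi-min",
}

config = flash_board_config(acpi=False, minimal=True)
if config not in KNOWN_CONFIGS:
    raise SystemExit(f"unknown board configuration: {config}")
print(f"sudo ./flash.sh {config} external")
```

The sketch only prints the command; it does not run flash.sh.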

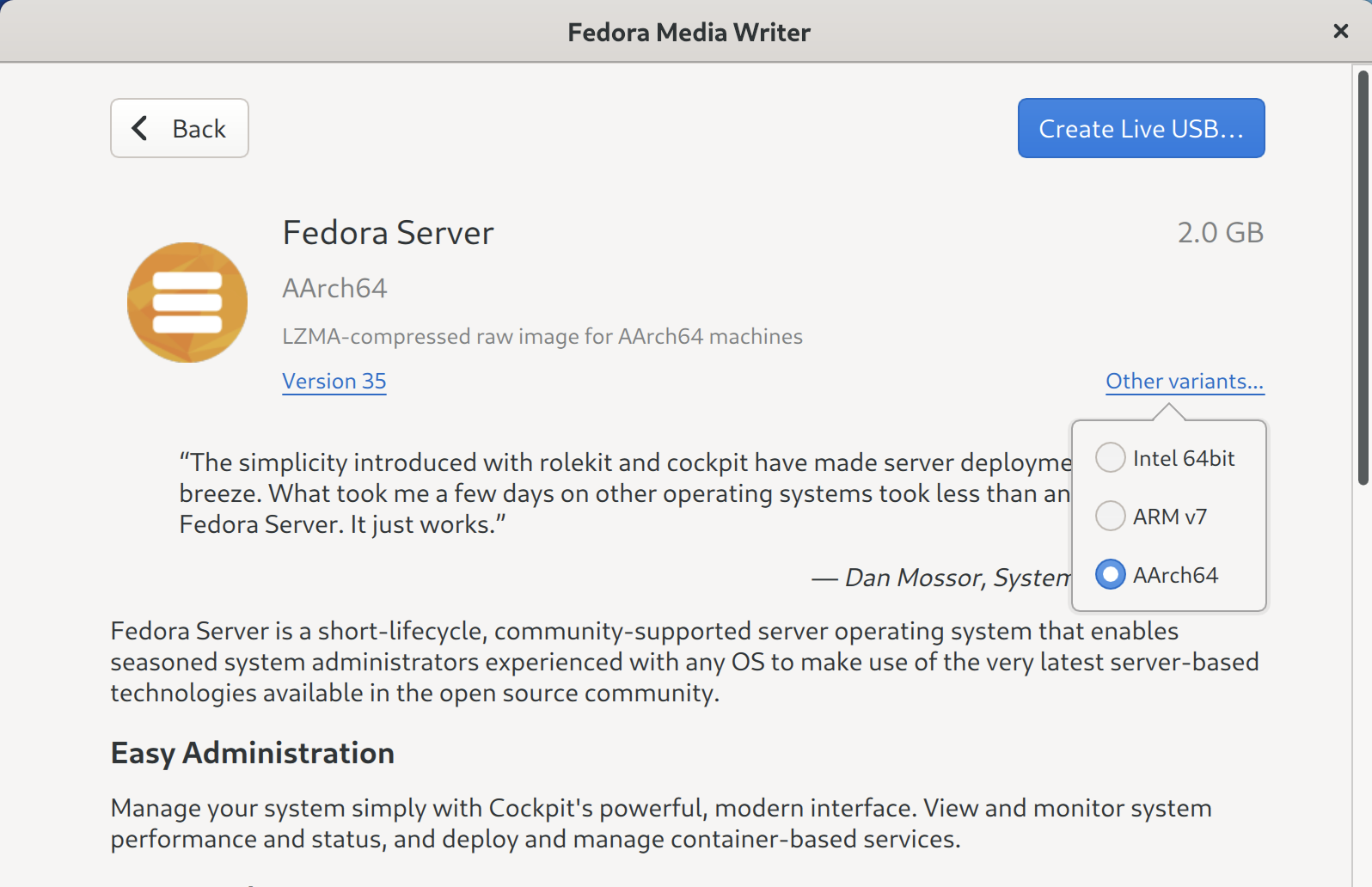

Prepare the Fedora installation USB key

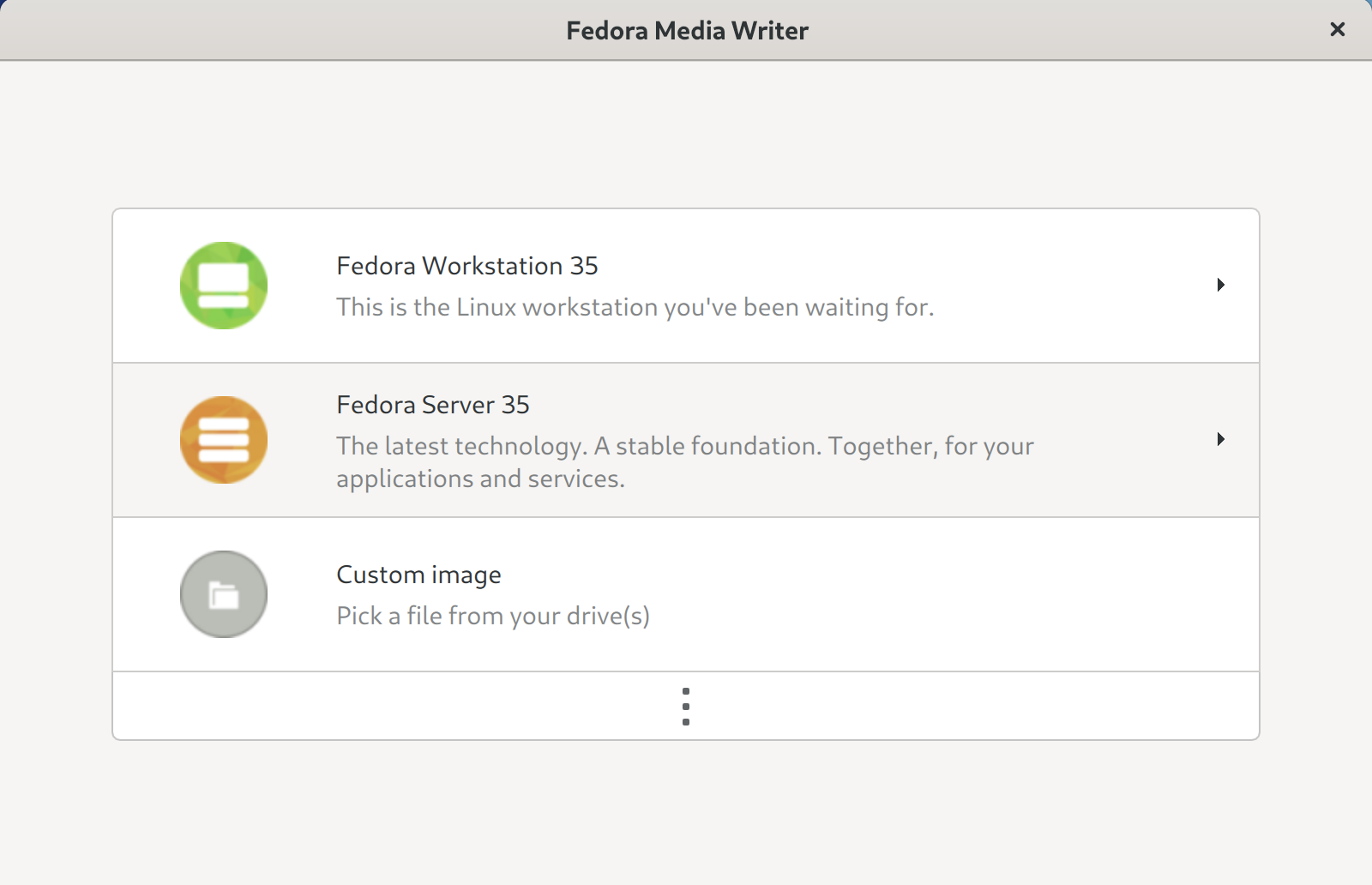

Download Fedora Media Writer here: https://arm.fedoraproject.org/.

This tool runs on Linux, macOS, or Microsoft Windows. Once Fedora Media Writer is installed, it will set up your flash drive to run a “Live” version of Fedora.

Launch the Fedora Media Writer from Linux:

Pick Fedora Server 35:

In Other variants choose AArch64, and click on Create Live USB...:

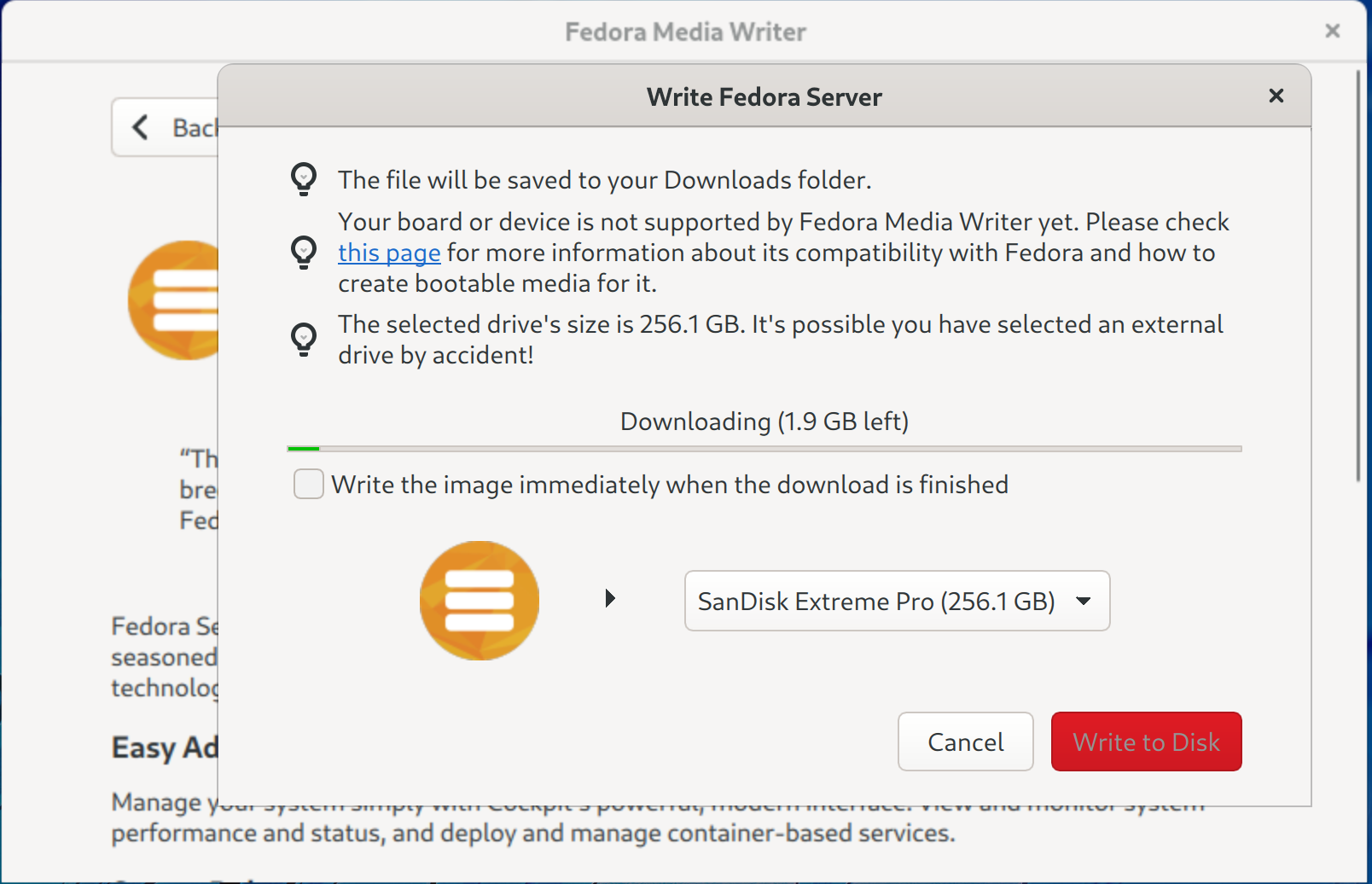

The Fedora Media Writer is downloading and writing to the device:

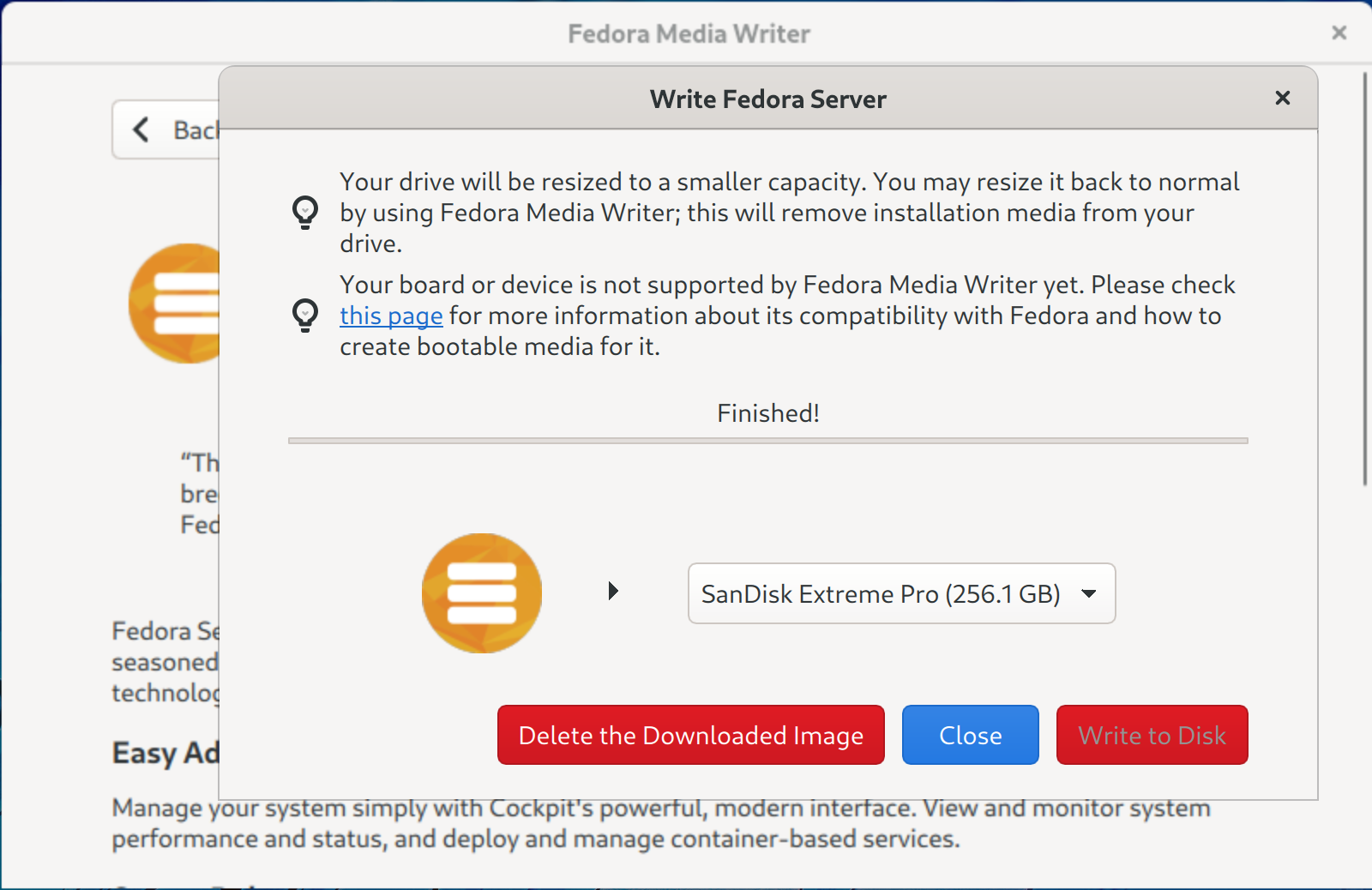

The USB flash drive is ready:

Install Fedora on the Jetson AGX Xavier

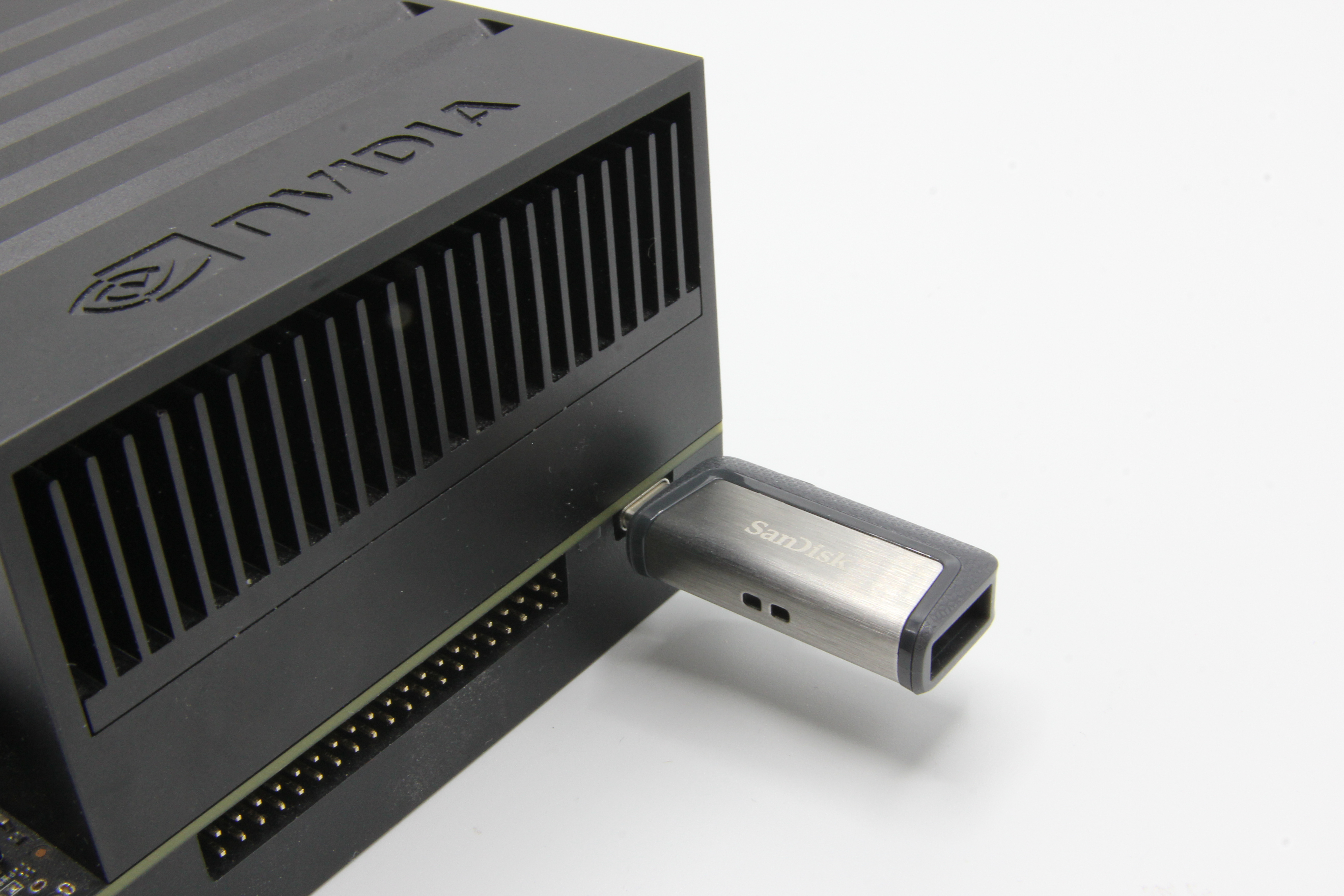

We can plug our SanDisk USB flash drive into the Jetson AGX Xavier (J512 port):

We are ready to go. We will capture the HDMI stream with a MYPIN USB 3.0 Game Capture Card plugged into the J504 port.

We can boot the Jetson AGX Xavier, and follow the boot with Minicom connected to the UART port.

During the boot we have to press “ESCAPE”:

EFI stub: Booting Linux Kernel...

EFI stub: Using DTB from configuration table

EFI stub: Exiting boot services and installing virtual address map...

Select the Boot Manager entry:

0 MB RAM

Select Language <Standard English> This selection will

take you to the Boot

> Device Manager Manager

> Boot Manager

> Boot Maintenance Manager

Continue

Reset

^v=Move Highlight <Enter>=Select Entry

(In the Device Manager menu, we can see that we are using Device Tree mode.

You can choose between the ACPI and Device Tree hardware descriptions in the menu Device Manager > O/S Hardware Description Selection; we keep Device Tree.)

In the Boot Manager screen, pick the USB key: UEFI USB SanDisk 3.2Gen1:

/------------------------------------------------------------------------------\

| Boot Manager |

\------------------------------------------------------------------------------/

^

Boot Manager Menu Device Path :

VenHw(1E5A432C-0466-4D

Fedora 31-B009-D4D9239271D3)/

UEFI eMMC Device MemoryMapped(0xB,0x361

UEFI eMMC Device 2 0000,0x364FFFF)/USB(0x

UEFI eMMC Device 3 4,0x0)

UEFI PXEv4 (MAC:00044BCBACE3)

UEFI PXEv6 (MAC:00044BCBACE3)

UEFI HTTPv4 (MAC:00044BCBACE3)

UEFI HTTPv6 (MAC:00044BCBACE3)

UEFI Shell

UEFI USB SanDisk 3.2Gen1

0101d133f326496e929669edb5cb27ccf839f2aa03d1b1e85e10

2da99f013309742d0000000000000000000043bee11700913d00

95558107862aabf0

v

/------------------------------------------------------------------------------\

| |

| ^v=Move Highlight <Enter>=Select Entry Esc=Exit |

\------------------------------------------------------------------------------/

We can continue the boot.

The installation is starting:

Install Fedora 35

Test this media & install Fedora 35

Troubleshooting -->

Use the ^ and v keys to change the selection.

Press 'e' to edit the selected item, or 'c' for a command prompt.

Fedora boot is in progress:

EFI stub: Booting Linux Kernel...

EFI stub: Using DTB from configuration table

EFI stub: Exiting boot services and installing virtual address map...

[ 0.000000] Booting Linux on physical CPU 0x0000000000 [0x4e0f0040]

[ 0.000000] Linux version 5.14.10-300.fc35.aarch64 (mockbuild@buildvm-a64-11.iad2.fedoraproject.org) (gcc (GCC) 11.2.1 20210728 (Red Hat 11.2.1-1), GNU ld versi1

[ 0.000000] Machine model: NVIDIA Jetson AGX Xavier Developer Kit

[ 0.000000] efi: EFI v2.70 by EDK II

[ 0.000000] efi: RTPROP=0x478b88f18 MEMATTR=0x477ef1018 MOKvar=0x45cca0000 RNG=0x47cb3c718 MEMRESERVE=0x45d3d0198

[ 0.000000] efi: seeding entropy pool

[ 0.000000] NUMA: No NUMA configuration found

[ 0.000000] NUMA: Faking a node at [mem 0x0000000080000000-0x000000047fffffff]

[ 0.000000] NUMA: NODE_DATA [mem 0x47dfc68c0-0x47dfdcfff]

[ 0.000000] Zone ranges:

[ 0.000000] DMA [mem 0x0000000080000000-0x00000000ffffffff]

[ 0.000000] DMA32 empty

[ 0.000000] Normal [mem 0x0000000100000000-0x000000047fffffff]

[ 0.000000] Device empty

[ 0.000000] Movable zone start for each node

...

On the HDMI output, we can see the Fedora 35 Anaconda Installer menu screen.

Pick your language:

We have access to all the configuration in the Installation summary:

We choose our Sabrent NVMe storage (installing on a USB SSD drive also works) and pick the Custom storage configuration:

After some cleaning on the NVMe SSD, I’m extending the / partition to use all the free space on the 1TB NVMe storage:

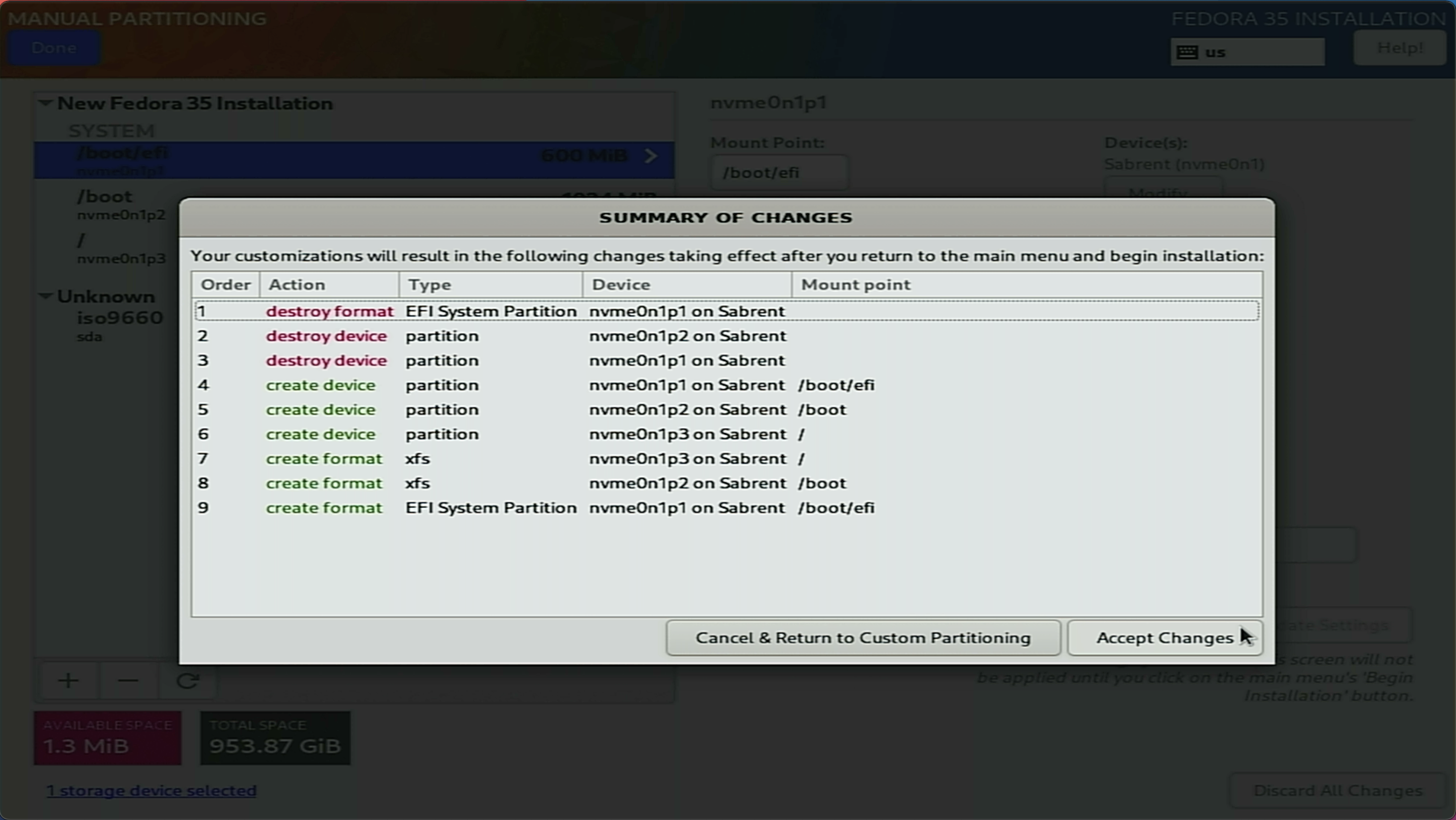

We can accept the partition changes:

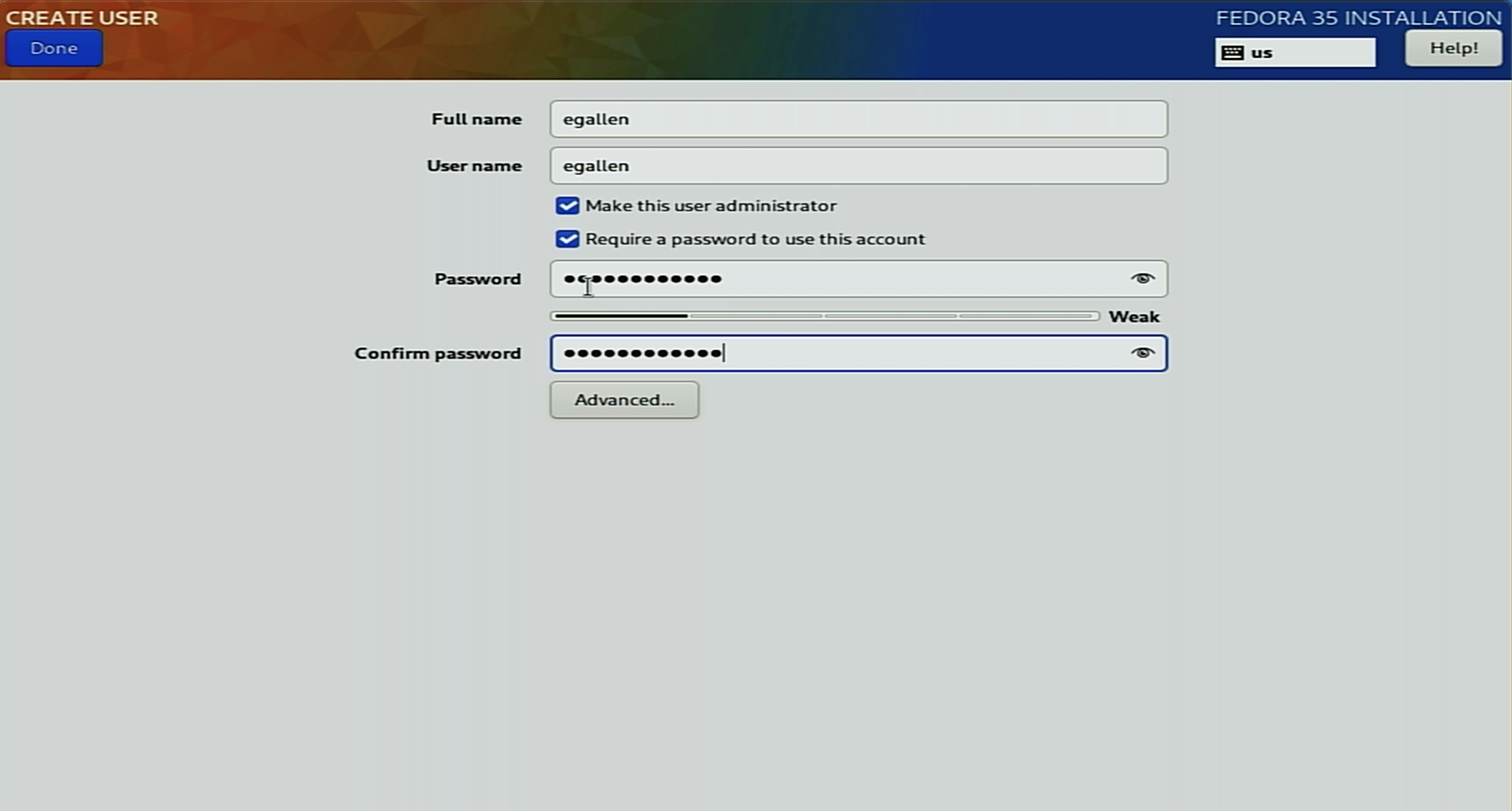

I can create my user egallen in the User creation page:

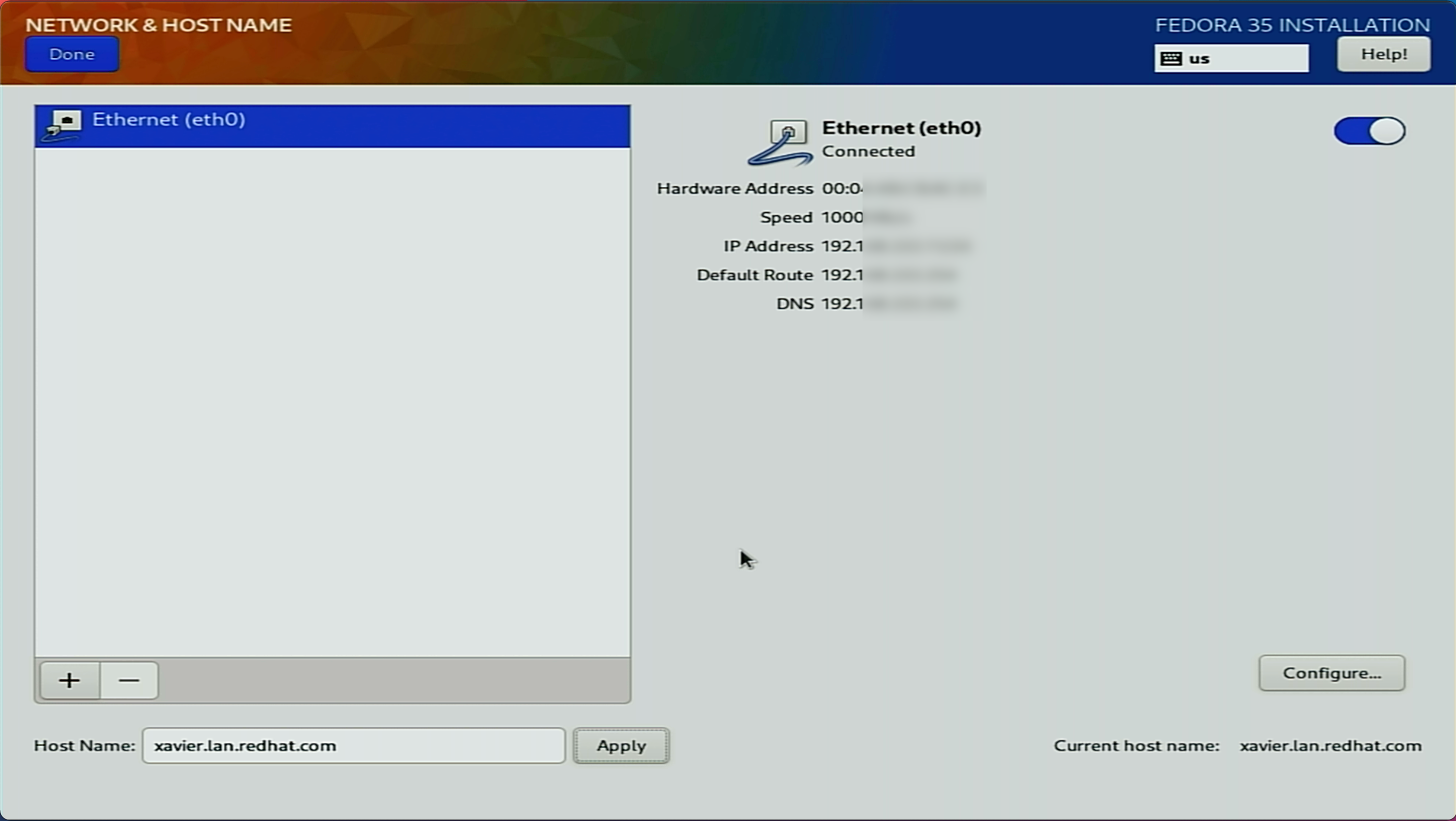

We can give a name to our Jetson AGX Xavier and double-check the network configuration:

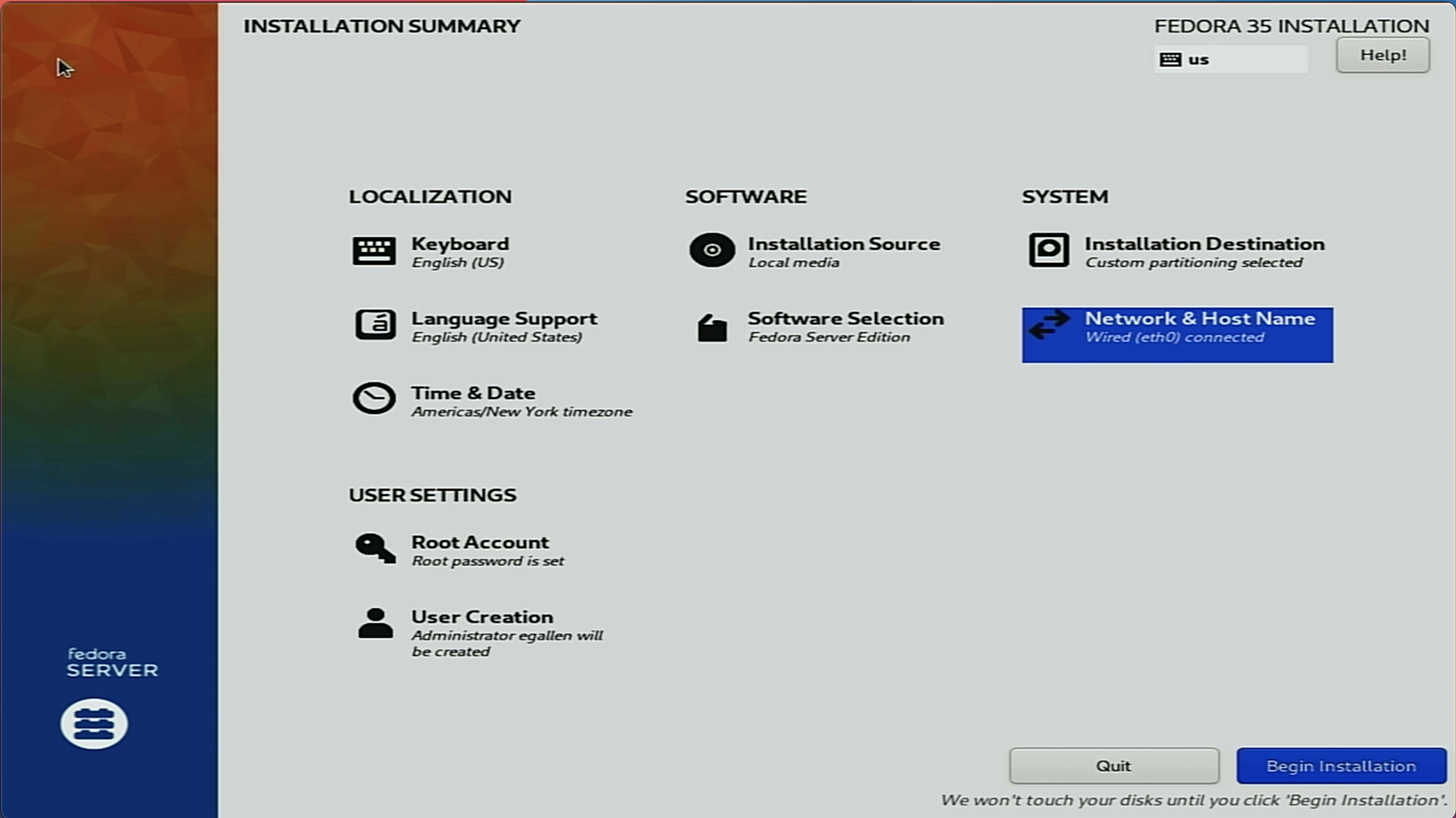

The configuration is complete, so we can launch the installation by clicking on Begin Installation:

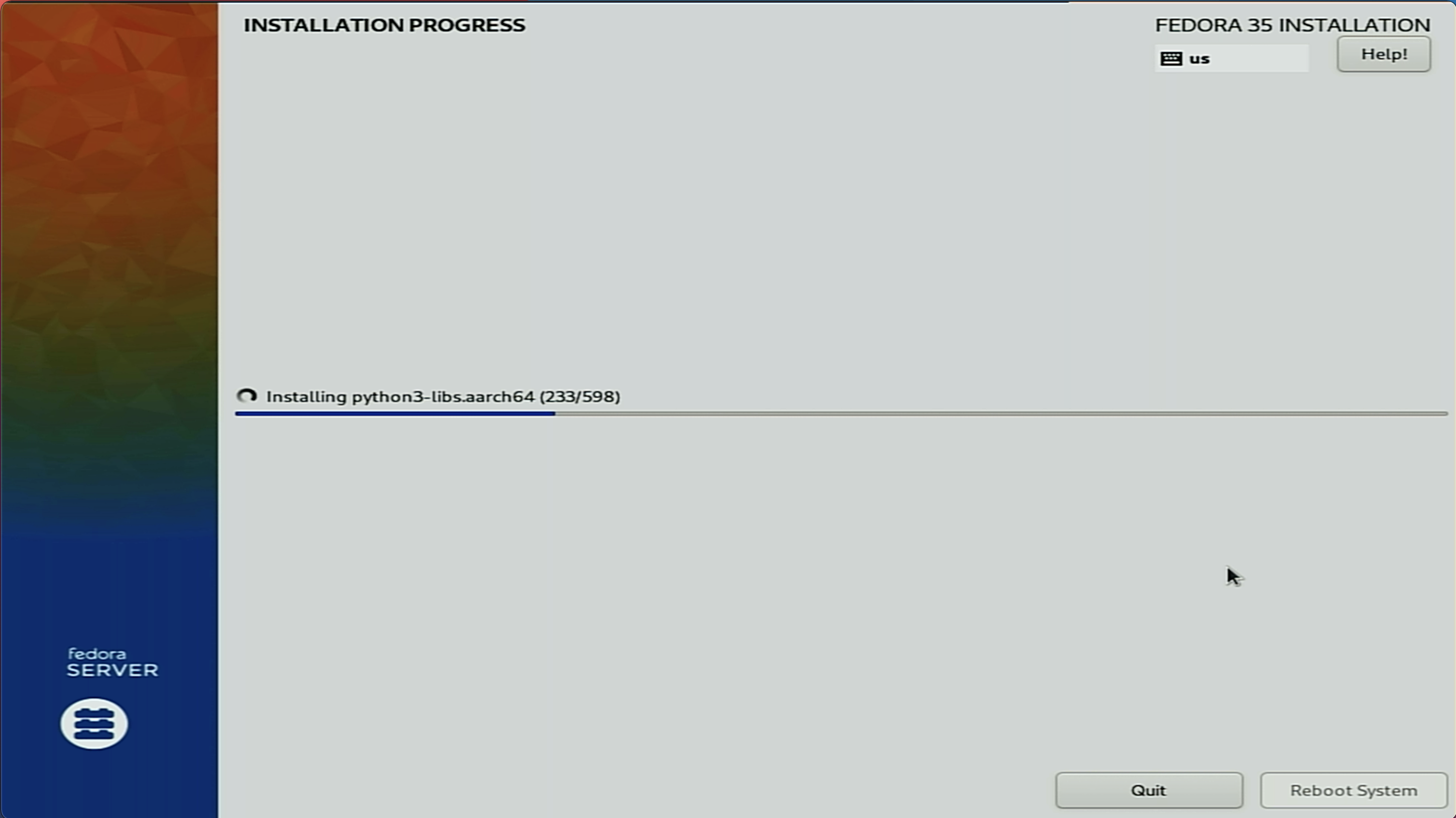

The storage is configured, and the package installation is in progress:

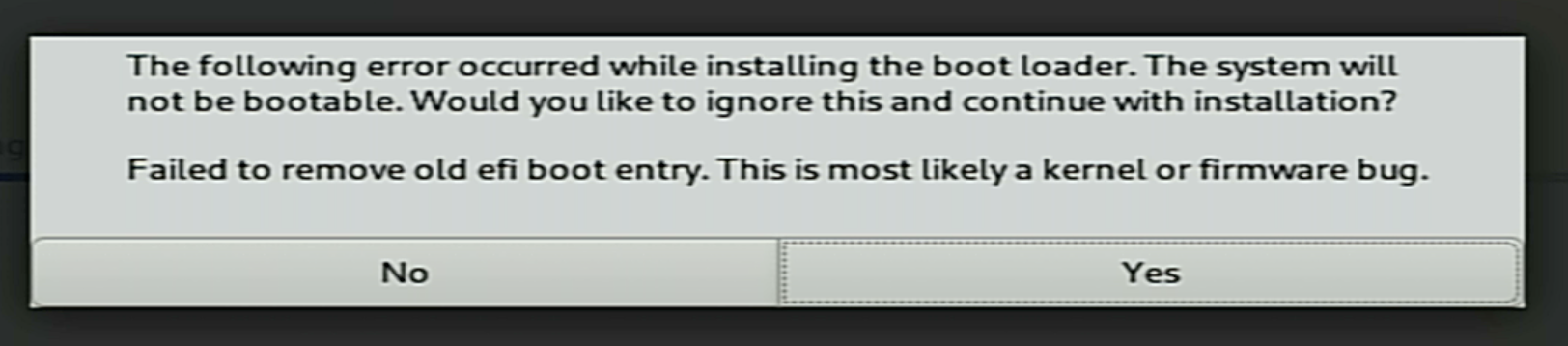

You may see the warning “Failed to remove old efi boot entry”; it is not a blocker:

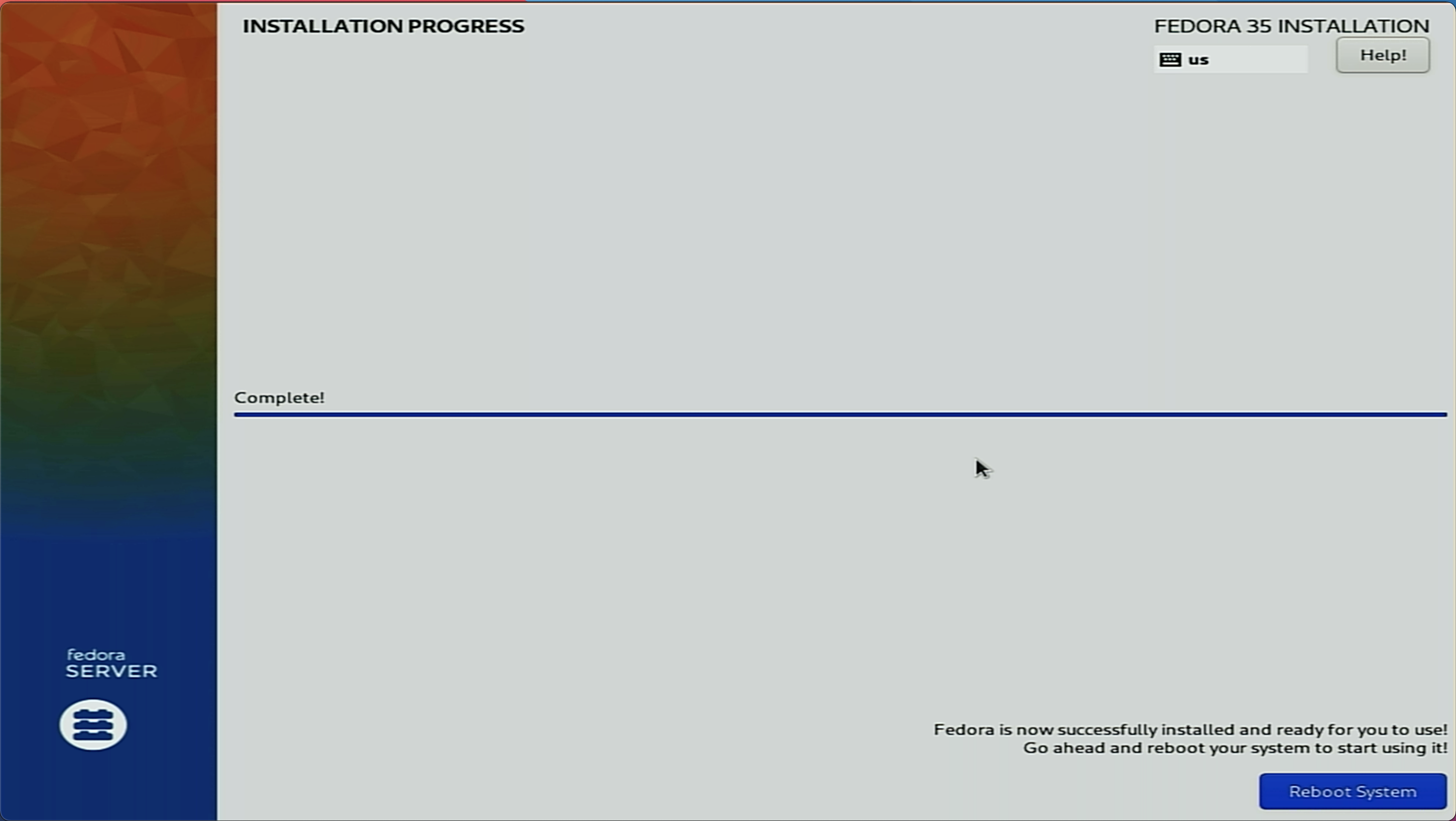

Success, the installation is complete!

First Fedora boot

Reboot and remove the USB installation flash drive:

Jetson UEFI firmware (version v1.1.2-29165693 built on 11/19/21-12:40:51)

Press ESCAPE for boot options .....

We can go to the menu: > Boot Maintenance Manager > Boot Options > Change Boot Order.

With the + key, move your NVMe storage to the top, then press Enter, Esc, and Y to save:

/------------------------------------------------------------------------------\

| Change Boot Order |

\------------------------------------------------------------------------------/

Change the order <UEFI HTTPv4 Change the order

/----------------------------------------\

| UEFI Sabrent 03F1079B114400656042 1 |

| UEFI HTTPv4 (MAC:00044BCBACE3) |

| UEFI HTTPv6 (MAC:00044BCBACE3) |

| UEFI Shell |

| Fedora |

| Fedora |

| UEFI eMMC Device |

| UEFI eMMC Device 2 |

| UEFI eMMC Device 3 |

| UEFI PXEv4 (MAC:00044BCBACE3) |

| UEFI PXEv6 (MAC:00044BCBACE3) |

\----------------------------------------/

<UEFI Sabrent

v

/------------------------------------------------------------------------------\

| + =Move Selection Up - =Move Selection Down |

| ^v=Move Highlight <Enter>=Complete Entry Esc=Exit Entry |

\------------------------------------------------------------------------------/

Fedora Linux is booting, and we can see the GRUB2 menu:

Fedora Linux (5.14.10-300.fc35.aarch64) 35 (Server Edition)

Fedora Linux (0-rescue-ca5d592fa7b74fc28657209c2670f0d4) 35 (Server Edit>

UEFI Firmware Settings

Use the ^ and v keys to change the selection.

Press 'e' to edit the selected item, or 'c' for a command prompt.

Press Escape to return to the previous menu.

The services are starting:

EFI stub: Booting Linux Kernel...

EFI stub: Using DTB from configuration table

EFI stub: Exiting boot services and installing virtual address map...

[ 0.000000] Booting Linux on physical CPU 0x0000000000 [0x4e0f0040]

[ 0.000000] Linux version 5.14.10-300.fc35.aarch64 (mockbuild@buildvm-a64-11.iad2.fedoraproject.org) (gcc (GCC) 11.2.1 20210728 (Red Hat 11.2.1-1), GNU ld versi1

[ 0.000000] Machine model: NVIDIA Jetson AGX Xavier Developer Kit

[ 0.000000] efi: EFI v2.70 by EDK II

[ 0.000000] efi: RTPROP=0x478b88f18 MEMATTR=0x4785c7018 MOKvar=0x45cbe0000 RNG=0x47cb3c718 MEMRESERVE=0x45d3d0318

[ 0.000000] efi: seeding entropy pool

...

First SSH login to the Jetson Xavier AGX

The system is running, so we can connect to it with SSH:

egallen@laptop ~ % ssh egallen@xavier

The authenticity of host '192.168.1.11 (192.168.1.11)' can't be established.

ECDSA key fingerprint is SHA256:6F4U7CJfdYiyiYWtGVTPdpU76I2PEAXR/rQZuAq7mMA.

Are you sure you want to continue connecting (yes/no/[fingerprint])? yes

Warning: Permanently added '192.168.1.11' (ECDSA) to the list of known hosts.

egallen@192.168.1.11's password:

Web console: https://xavier.lan.redhat.com:9090/ or https://192.168.1.11:9090/

[egallen@xavier ~]$

We can enable passwordless sudo (NOPASSWD) for the wheel group:

[egallen@xavier ~]$ sudo -s

[root@xavier egallen]# sed --in-place 's/^\s*\(%wheel\s\+ALL=(ALL)\s\+ALL\)/# \1/' /etc/sudoers

[root@xavier egallen]# sed --in-place 's/^#\s*\(%wheel\s\+ALL=(ALL)\s\+NOPASSWD:\s\+ALL\)/\1/' /etc/sudoers
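
Editing /etc/sudoers with sed is unforgiving, so it can be worth previewing the two substitutions on a copy of the text first. This is a minimal sketch that mirrors the two sed expressions with Python regular expressions; `preview_sudoers_edit` is a hypothetical helper, and the sample text is an assumed excerpt of a default sudoers file, not a dump from my system:

```python
import re

# First sed expression: comment out the rule that asks for a password.
COMMENT_PASSWORD_RULE = (re.compile(r"^\s*(%wheel\s+ALL=\(ALL\)\s+ALL)"), r"# \1")
# Second sed expression: uncomment the NOPASSWD rule.
ENABLE_NOPASSWD_RULE = (
    re.compile(r"^#\s*(%wheel\s+ALL=\(ALL\)\s+NOPASSWD:\s+ALL)"),
    r"\1",
)


def preview_sudoers_edit(text):
    """Apply both substitutions line by line, the way sed does, and return the result."""
    edited = []
    for line in text.splitlines():
        for pattern, replacement in (COMMENT_PASSWORD_RULE, ENABLE_NOPASSWD_RULE):
            line = pattern.sub(replacement, line)
        edited.append(line)
    return "\n".join(edited) + "\n"


# Assumed excerpt of a default sudoers file (tabs between fields).
sample = """\
## Allows people in group wheel to run all commands
%wheel\tALL=(ALL)\tALL

## Same thing without a password
# %wheel\tALL=(ALL)\tNOPASSWD: ALL
"""
print(preview_sudoers_edit(sample))
```

On the real file, running `visudo -c` afterwards checks that the syntax is still valid.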

We can start by updating the system:

[egallen@xavier ~]$ sudo dnf update -y

...

Installed:

clevis-18-4.fc35.aarch64 clevis-luks-18-4.fc35.aarch64 clevis-pin-tpm2-0.5.0-1.fc35.aarch64

freetype-2.11.0-1.fc35.aarch64 gnupg2-smime-2.3.4-1.fc35.aarch64 graphite2-1.3.14-8.fc35.aarch64

grub2-tools-extra-1:2.06-10.fc35.aarch64 harfbuzz-2.8.2-2.fc35.aarch64 jose-11-3.fc35.aarch64

jq-1.6-10.fc35.aarch64 kernel-5.15.12-200.fc35.aarch64 kernel-core-5.15.12-200.fc35.aarch64

kernel-modules-5.15.12-200.fc35.aarch64 libjose-11-3.fc35.aarch64 libluksmeta-9-12.fc35.aarch64

libsecret-0.20.4-3.fc35.aarch64 luksmeta-9-12.fc35.aarch64 memstrack-0.2.4-1.fc35.aarch64

mkpasswd-5.5.10-2.fc35.aarch64 oniguruma-6.9.7.1-1.fc35.1.aarch64 pigz-2.5-2.fc35.aarch64

pinentry-1.2.0-1.fc35.aarch64 python-unversioned-command-3.10.1-2.fc35.noarch python3-psutil-5.8.0-12.fc35.aarch64

python3-tracer-0.7.8-1.fc35.noarch qrencode-libs-4.1.1-1.fc35.aarch64 reportd-0.7.4-7.fc35.aarch64

sscg-3.0.0-1.fc35.aarch64 sssd-proxy-2.6.1-1.fc35.aarch64 systemd-networkd-249.7-2.fc35.aarch64

tpm2-tools-5.2-1.fc35.aarch64 tracer-common-0.7.8-1.fc35.noarch vim-data-2:8.2.3755-1.fc35.noarch

whois-nls-5.5.10-2.fc35.noarch

Complete!

[egallen@xavier ~]$ sudo systemctl reboot

In the GRUB screen, we can see that the kernel was upgraded from 5.14.10-300.fc35.aarch64 to 5.15.12-200.fc35.aarch64:

Fedora Linux (5.15.12-200.fc35.aarch64) 35 (Server Edition)

Fedora Linux (5.14.10-300.fc35.aarch64) 35 (Server Edition)
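
When several kernels are installed, comparing the release strings as plain text can mislead (as text, 5.9 sorts after 5.15). This is a small sketch that compares the numeric fields instead; `kernel_sort_key` is a hypothetical helper and only a simplification, not the full RPM version comparison:

```python
import re


def kernel_sort_key(release):
    """Turn '5.15.12-200.fc35.aarch64' into (5, 15, 12, 200) for comparison."""
    match = re.match(r"(\d+)\.(\d+)\.(\d+)-(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    return tuple(int(part) for part in match.groups())


# The two GRUB entries shown above.
entries = ["5.14.10-300.fc35.aarch64", "5.15.12-200.fc35.aarch64"]
print(max(entries, key=kernel_sort_key))
```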

Check the installed system

The system details show that we are running Fedora 35 on the aarch64 hardware architecture:

[egallen@xavier ~]$ cat /etc/redhat-release

Fedora release 35 (Thirty Five)

[egallen@xavier ~]$ uname -m

aarch64

[egallen@xavier ~]$ uname -a

Linux xavier.lan.redhat.com 5.15.12-200.fc35.aarch64 #1 SMP Wed Dec 29 14:47:47 UTC 2021 aarch64 aarch64 aarch64 GNU/Linux

We can find our Carmel ARMv8 CPU (4 clusters of 2 cores, for a total of 8 cores):

[egallen@xavier edgeflask]$ lscpu | head -17

Architecture: aarch64

CPU op-mode(s): 32-bit, 64-bit

Byte Order: Little Endian

CPU(s): 8

On-line CPU(s) list: 0-7

Vendor ID: NVIDIA

Model name: Carmel

Model: 0

Thread(s) per core: 1

Core(s) per cluster: 2

Socket(s): -

Cluster(s): 4

Stepping: 0x0

CPU max MHz: 2265.6001

CPU min MHz: 115.2000

BogoMIPS: 62.50

Flags: fp asimd evtstrm aes pmull sha1 sha2 crc32 atomics fphp asimdhp cpuid asimdrdm dcpop
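
The core count can be cross-checked from the lscpu fields themselves: cores per cluster multiplied by clusters should equal the number of CPUs when there is one thread per core. This is a minimal sketch with hypothetical helper names, using a subset of the output above:

```python
def parse_lscpu(output):
    """Parse 'Key: value' lines from lscpu into a dictionary."""
    fields = {}
    for line in output.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def total_cores(fields):
    """Cores per cluster multiplied by the number of clusters."""
    return int(fields["Core(s) per cluster"]) * int(fields["Cluster(s)"])


# Subset of the lscpu output shown above.
sample = """\
CPU(s): 8
Thread(s) per core: 1
Core(s) per cluster: 2
Socket(s): -
Cluster(s): 4
"""
fields = parse_lscpu(sample)
print(total_cores(fields), fields["CPU(s)"])
```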

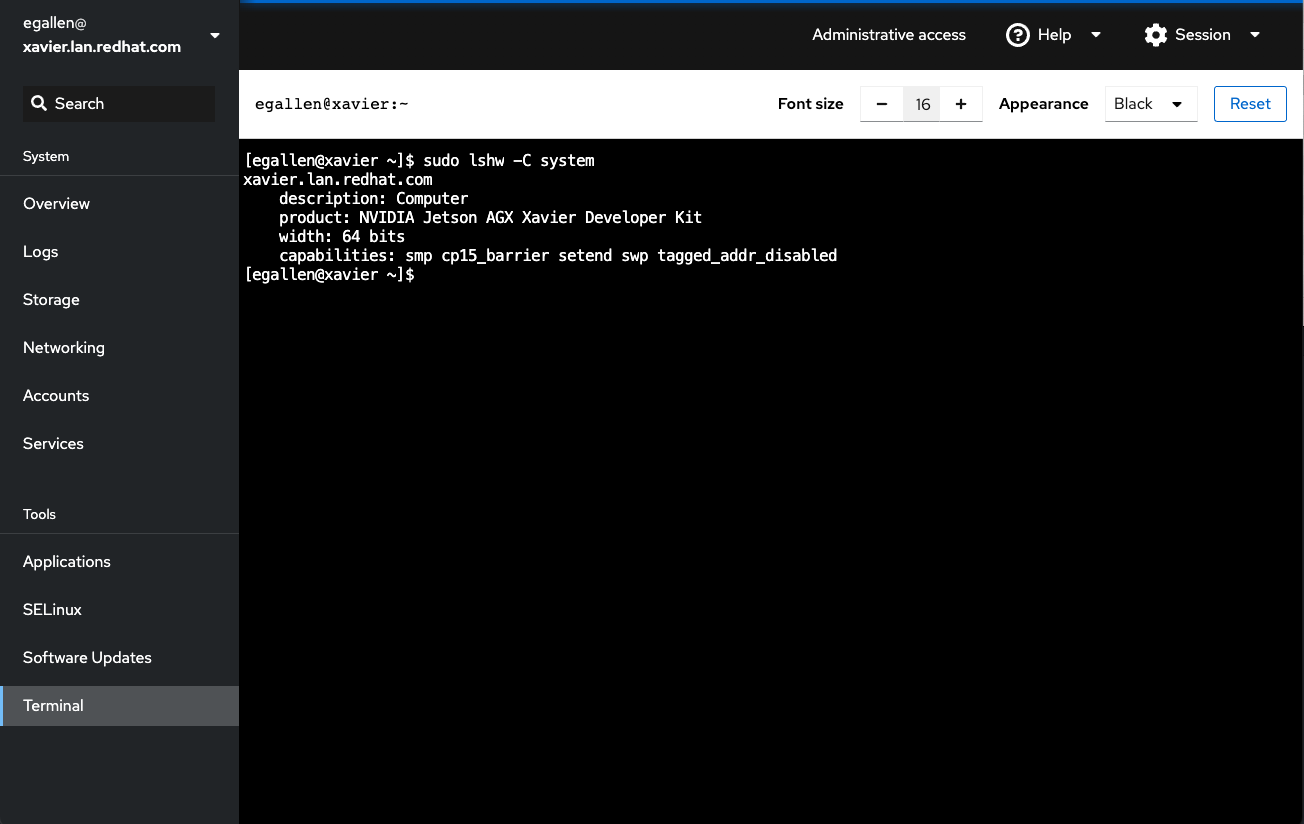

We can find the system model with the lshw tool:

[egallen@xavier ~]$ sudo dnf install lshw -y

[egallen@xavier ~]$ sudo lshw -C system

xavier.lan.redhat.com

description: Computer

product: NVIDIA Jetson AGX Xavier Developer Kit

width: 64 bits

capabilities: smp cp15_barrier setend swp tagged_addr_disabled

We can see the PCI bus:

[egallen@xavier ~]$ lspci

0000:00:00.0 PCI bridge: NVIDIA Corporation Tegra PCIe x8 Endpoint (rev a1)

0000:01:00.0 Non-Volatile memory controller: Phison Electronics Corporation E12 NVMe Controller (rev 01)

0001:00:00.0 PCI bridge: NVIDIA Corporation Tegra PCIe x1 Root Complex (rev a1)

0001:01:00.0 SATA controller: Marvell Technology Group Ltd. Device 9171 (rev 13)

SELinux is enabled:

[egallen@xavier ~]$ getenforce

Enforcing

[egallen@xavier ~]$ sestatus

SELinux status: enabled

SELinuxfs mount: /sys/fs/selinux

SELinux root directory: /etc/selinux

Loaded policy name: targeted

Current mode: enforcing

Mode from config file: enforcing

Policy MLS status: enabled

Policy deny_unknown status: allowed

Memory protection checking: actual (secure)

Max kernel policy version: 33

Cockpit

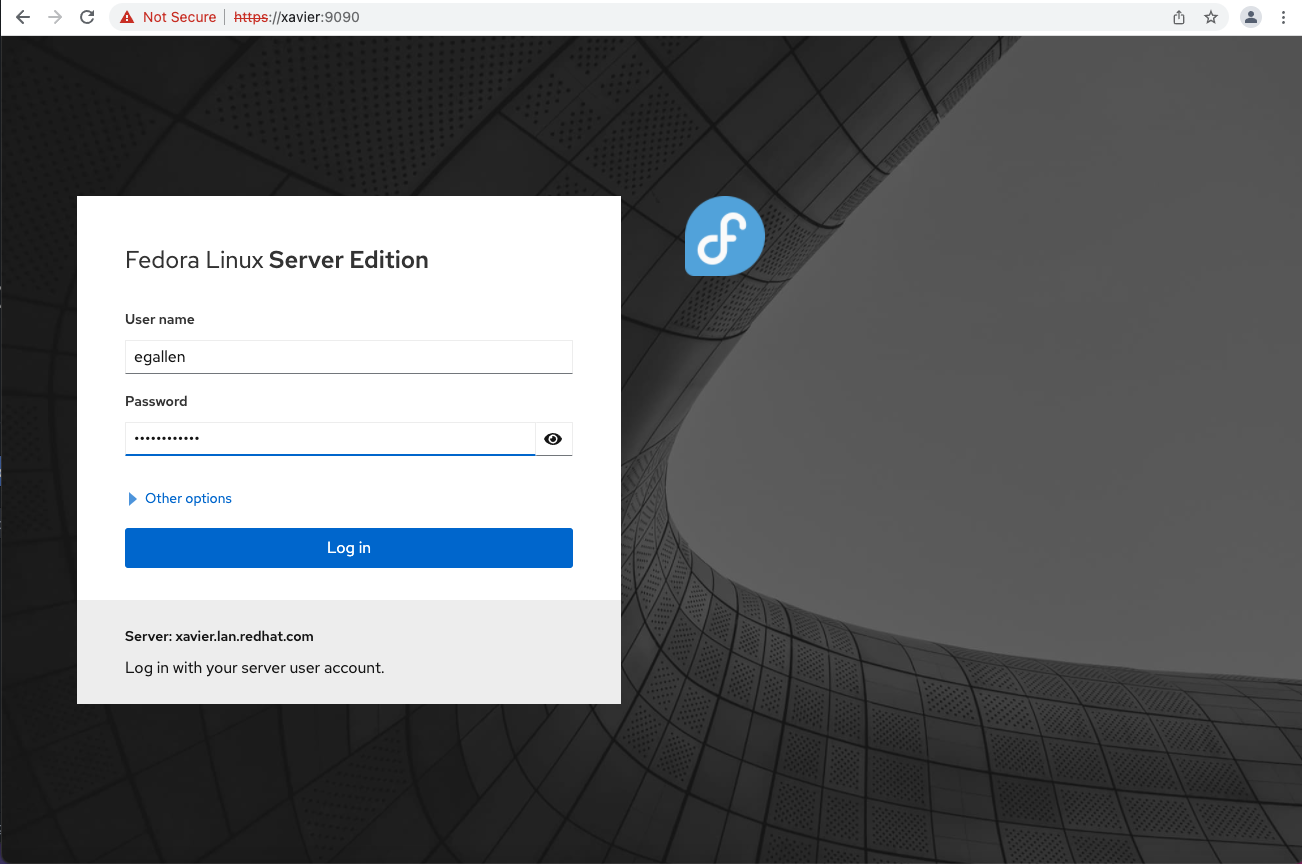

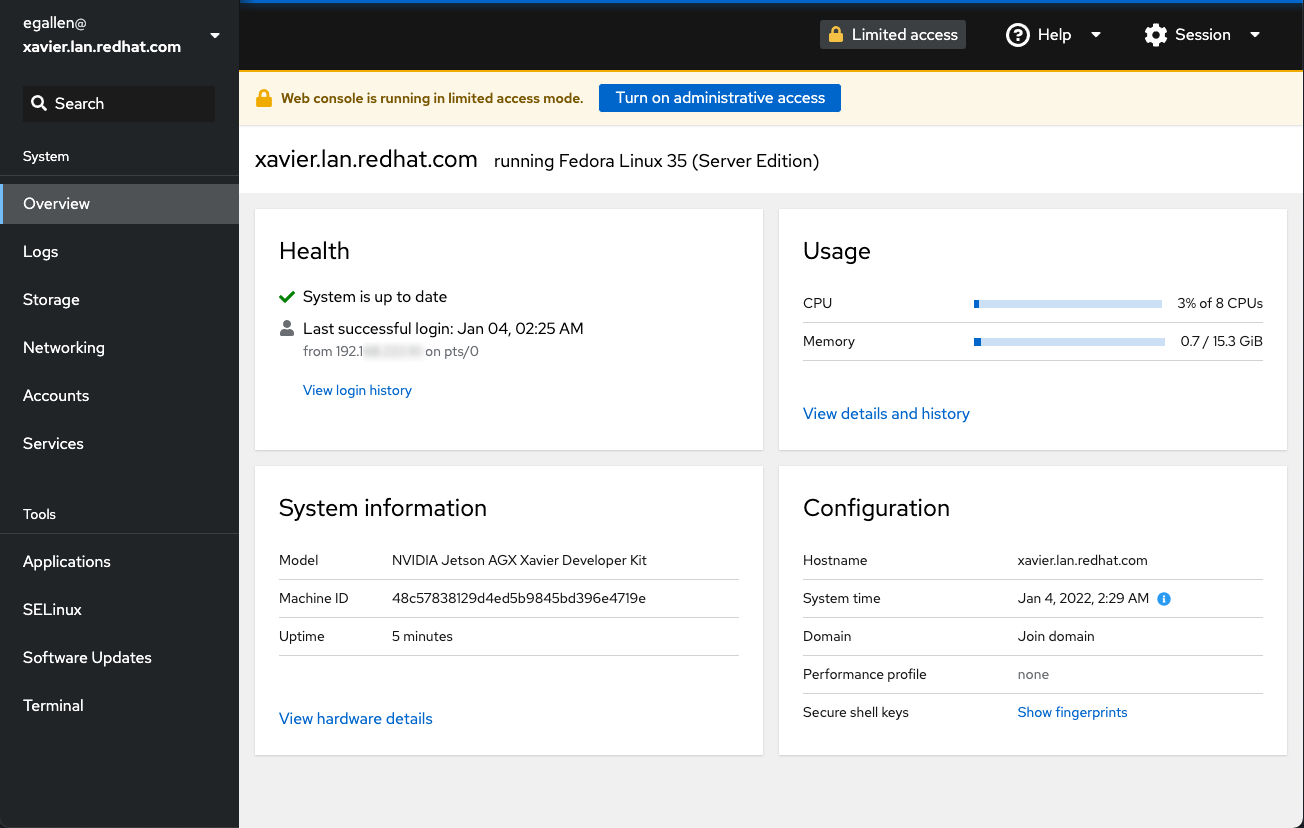

We can try Cockpit, the server administration tool installed with Fedora:

If you point a browser at port 9090 of your Jetson AGX Xavier, you will see the authentication screen:

We have access to the Health status:

A console is available in the browser through the Terminal link:

Install MicroShift

You can find the detailed MicroShift Getting Started documentation here.

We will use the Podman containerized deployment method.

MicroShift requires CRI-O to be installed and running on the host:

[egallen@xavier ~]$ sudo dnf module enable -y cri-o:1.21

Last metadata expiration check: 0:12:27 ago on Tue 04 Jan 2022 11:14:33 AM CET.

Dependencies resolved.

====================================================================================================================================================================

Package Architecture Version Repository Size

====================================================================================================================================================================

Enabling module streams:

cri-o 1.21

Transaction Summary

====================================================================================================================================================================

Complete!

Install cri-o and cri-tools packages:

[egallen@xavier ~]$ sudo dnf install -y cri-o cri-tools

...

Installed:

conmon-2:2.0.30-2.fc35.aarch64 container-selinux-2:2.170.0-2.fc35.noarch containernetworking-plugins-1.0.1-1.fc35.aarch64

containers-common-4:1-32.fc35.noarch cri-o-1.21.3-1.module_f35+13330+6bc9c749.aarch64 cri-tools-1.19.0-1.module_f35+12974+2bc66b5d.aarch64

criu-3.16.1-2.fc35.aarch64 fuse-common-3.10.5-1.fc35.aarch64 fuse-overlayfs-1.7.1-2.fc35.aarch64

fuse3-3.10.5-1.fc35.aarch64 fuse3-libs-3.10.5-1.fc35.aarch64 libbsd-0.10.0-8.fc35.aarch64

libnet-1.2-4.fc35.aarch64 libslirp-4.6.1-2.fc35.aarch64 runc-2:1.0.2-2.fc35.aarch64

slirp4netns-1.1.12-2.fc35.aarch64 socat-1.7.4.2-1.fc35.aarch64

Enable the crio service:

[egallen@xavier ~]$ sudo systemctl enable crio --now

Created symlink /etc/systemd/system/multi-user.target.wants/crio.service → /usr/lib/systemd/system/crio.service.

Install Podman:

[egallen@xavier ~]$ sudo dnf install -y podman

...

Installed:

catatonit-0.1.7-1.fc35.aarch64 podman-3:3.4.4-1.fc35.aarch64 podman-gvproxy-3:3.4.4-1.fc35.aarch64 podman-plugins-3:3.4.4-1.fc35.aarch64

shadow-utils-subid-2:4.9-8.fc35.aarch64

Download the systemd microshift.service file:

[egallen@xavier ~]$ sudo curl -o /etc/systemd/system/microshift.service \

https://raw.githubusercontent.com/redhat-et/microshift/main/packaging/systemd/microshift-containerized.service

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 1177 100 1177 0 0 4118 0 --:--:-- --:--:-- --:--:-- 4129

Reload configuration:

[egallen@xavier ~]$ sudo systemctl daemon-reload

We can enable the microshift service:

[egallen@xavier ~]$ sudo systemctl enable microshift --now

Created symlink /etc/systemd/system/multi-user.target.wants/microshift.service → /etc/systemd/system/microshift.service.

Created symlink /etc/systemd/system/default.target.wants/microshift.service → /etc/systemd/system/microshift.service.

After the initial image download from Quay, which took one minute, MicroShift is running:

[egallen@xavier ~]$ sudo systemctl status microshift.service

● microshift.service - MicroShift Containerized

Loaded: loaded (/etc/systemd/system/microshift.service; enabled; vendor preset: disabled)

Active: active (running) since Tue 2022-01-04 11:30:56 CET; 3s ago

Docs: man:podman-generate-systemd(1)

Process: 28474 ExecStartPre=/bin/rm -f /run/microshift.service.ctr-id (code=exited, status=0/SUCCESS)

Process: 28475 ExecStartPre=/usr/bin/mkdir -p /var/hpvolumes (code=exited, status=0/SUCCESS)

Main PID: 28655 (conmon)

Tasks: 2 (limit: 18723)

Memory: 407.1M

CPU: 50.233s

CGroup: /system.slice/microshift.service

└─28655 /usr/bin/conmon --api-version 1 -c 690fb9d4320e4ae76f165616add6308a97f74c7bd3b930a02fd19511a9bf4b38 -u 690fb9d4320e4ae76f165616add6308a97f74c7>

Jan 04 11:30:36 xavier.lan.redhat.com conmon[28655]: {"level":"info","ts":"2022-01-04T10:30:36.264Z","caller":"membership/cluster.go:558","msg":"set initial cluste>

Jan 04 11:30:36 xavier.lan.redhat.com conmon[28655]: {"level":"info","ts":"2022-01-04T10:30:36.265Z","caller":"etcdserver/server.go:2037","msg":"published local me>

Jan 04 11:30:36 xavier.lan.redhat.com conmon[28655]: time="2022-01-04T10:30:36Z" level=info msg="etcd is ready"

Jan 04 11:30:36 xavier.lan.redhat.com conmon[28655]: time="2022-01-04T10:30:36Z" level=info msg="Starting kube-apiserver"

Jan 04 11:30:36 xavier.lan.redhat.com conmon[28655]: {"level":"info","ts":"2022-01-04T10:30:36.265Z","caller":"api/capability.go:76","msg":"enabled capabilities fo>

Jan 04 11:30:36 xavier.lan.redhat.com conmon[28655]: {"level":"info","ts":"2022-01-04T10:30:36.266Z","caller":"etcdserver/server.go:2560","msg":"cluster version is>

Jan 04 11:30:36 xavier.lan.redhat.com conmon[28655]: {"level":"info","ts":"2022-01-04T10:30:36.271Z","caller":"embed/serve.go:191","msg":"serving client traffic se>

Jan 04 11:30:36 xavier.lan.redhat.com conmon[28655]: {"level":"info","ts":"2022-01-04T10:30:36.281Z","caller":"embed/serve.go:191","msg":"serving client traffic se>

Jan 04 11:30:56 xavier.lan.redhat.com systemd[1]: Started MicroShift Containerized.

Jan 04 11:30:56 xavier.lan.redhat.com conmon[28655]: E0104 10:30:56.165943 1 controller.go:152] Unable to remove old endpoints from kubernetes service: Stora>

Install oc client

We can install the aarch64 oc client:

[egallen@xavier ~]$ curl -O https://mirror.openshift.com/pub/openshift-v4/aarch64/clients/ocp/latest/openshift-client-linux.tar.gz

[egallen@xavier ~]$ tar xvzf openshift-client-linux.tar.gz

README.md

oc

kubectl

[egallen@xavier ~]$ sudo install -t /usr/local/bin {kubectl,oc}

Prepare Kubeconfig client configuration

We can copy the kubeconfig file to the default location, where it can be read without administrator privileges:

[egallen@xavier ~]$ mkdir ~/.kube

[egallen@xavier ~]$ sudo cp /var/lib/microshift/resources/kubeadmin/kubeconfig ~/.kube/config

[egallen@xavier ~]$ sudo chown `whoami`: ~/.kube/config

Check MicroShift deployment

We can check the MicroShift version:

[egallen@xavier ~]$ sudo podman exec microshift microshift version

MicroShift Version: 4.8.0-0.microshift-2022-01-04-205130

Base OKD Version: 4.8.0-0.okd-2021-10-10-030117

We are using oc client version 4.9.11:

[egallen@xavier ~]$ oc version

Client Version: 4.9.11

Kubernetes Version: v1.21.1
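
The oc client (4.9) is one minor version ahead of MicroShift (4.8). Kubernetes documents kubectl as supported within one minor version of the API server; the sketch below assumes the same rule of thumb is reasonable for oc and MicroShift, which this post does not verify. `major_minor` and `minor_skew` are hypothetical helpers:

```python
import re


def major_minor(version):
    """Extract (major, minor) from strings like '4.9.11' or 'v1.21.1'."""
    match = re.match(r"v?(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognized version: {version!r}")
    return int(match.group(1)), int(match.group(2))


def minor_skew(client, server):
    """Return the distance in minor versions between client and server."""
    (c_major, c_minor), (s_major, s_minor) = major_minor(client), major_minor(server)
    if c_major != s_major:
        raise ValueError("major versions differ")
    return abs(c_minor - s_minor)


# Values copied from the `microshift version` and `oc version` outputs above.
microshift_version = "4.8.0-0.microshift-2022-01-04-205130"
oc_client_version = "4.9.11"
print(minor_skew(oc_client_version, microshift_version))
```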

After the service start, the container creation is in progress:

[egallen@xavier ~]$ oc get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system kube-flannel-ds-z274n 0/1 Init:1/2 0 22s

kubevirt-hostpath-provisioner kubevirt-hostpath-provisioner-qs8ck 0/1 ContainerCreating 0 11s

openshift-dns dns-default-cnnqk 0/2 ContainerCreating 0 22s

openshift-dns node-resolver-h9vjp 1/1 Running 0 22s

openshift-ingress router-default-85bcfdd948-4pxmd 0/1 ContainerCreating 0 26s

openshift-service-ca service-ca-7764c85869-zcmm8 0/1 ContainerCreating 0 27s

After 71s, all the MicroShift containers are running:

[egallen@xavier ~]$ oc get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system kube-flannel-ds-z274n 1/1 Running 0 71s

kubevirt-hostpath-provisioner kubevirt-hostpath-provisioner-qs8ck 1/1 Running 0 60s

openshift-dns dns-default-cnnqk 2/2 Running 0 71s

openshift-dns node-resolver-h9vjp 1/1 Running 0 71s

openshift-ingress router-default-85bcfdd948-4pxmd 1/1 Running 0 75s

openshift-service-ca service-ca-7764c85869-zcmm8 1/1 Running 0 76s
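
Instead of re-running `oc get pods -A` and reading the table by eye, the same check can be scripted. This is a minimal sketch that parses the text output and lists the pods that are not fully ready; the function names are hypothetical, and it assumes the column layout shown above (NAMESPACE, NAME, READY, STATUS first):

```python
def parse_pods(output):
    """Parse `oc get pods -A` text output into a list of dictionaries."""
    lines = [line for line in output.splitlines() if line.strip()]
    pods = []
    for line in lines[1:]:  # skip the header row
        namespace, name, ready, status = line.split()[:4]
        ready_count, total = (int(part) for part in ready.split("/"))
        pods.append(
            {
                "namespace": namespace,
                "name": name,
                "ready": ready_count,
                "total": total,
                "status": status,
            }
        )
    return pods


def pods_not_ready(output):
    """Return 'namespace/name' for pods that are not Running with all containers ready."""
    return [
        f"{pod['namespace']}/{pod['name']}"
        for pod in parse_pods(output)
        if pod["status"] != "Running" or pod["ready"] != pod["total"]
    ]


# Rows copied from the two outputs above.
STARTING = """\
NAMESPACE NAME READY STATUS RESTARTS AGE
kube-system kube-flannel-ds-z274n 0/1 Init:1/2 0 22s
openshift-dns node-resolver-h9vjp 1/1 Running 0 22s
"""
RUNNING = """\
NAMESPACE NAME READY STATUS RESTARTS AGE
kube-system kube-flannel-ds-z274n 1/1 Running 0 71s
openshift-dns dns-default-cnnqk 2/2 Running 0 71s
"""
print(pods_not_ready(STARTING))
print(pods_not_ready(RUNNING))
```

An empty list means every listed pod is Running with all of its containers ready.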

We are using the default namespace:

[egallen@xavier ~]$ oc project

Using project "default" from context named "microshift" on server "https://127.0.0.1:6443".

MicroShift has one node ready to run applications:

[egallen@xavier ~]$ oc get nodes

NAME STATUS ROLES AGE VERSION

xavier.lan.redhat.com Ready <none> 76s v1.21.0

Build our Flask test application

Because we have deployed a lightweight Kubernetes, we will test a lightweight application based on Flask, the micro web framework written in Python.

We will work in the folder edgeflask:

[egallen@xavier ~]$ mkdir edgeflask

[egallen@xavier ~]$ cd edgeflask

[egallen@xavier edgeflask]$

First, we can install buildah to create the OCI image:

[egallen@xavier ~]$ sudo dnf install buildah -y

...

Installed:

buildah-1.23.1-1.fc35.aarch64

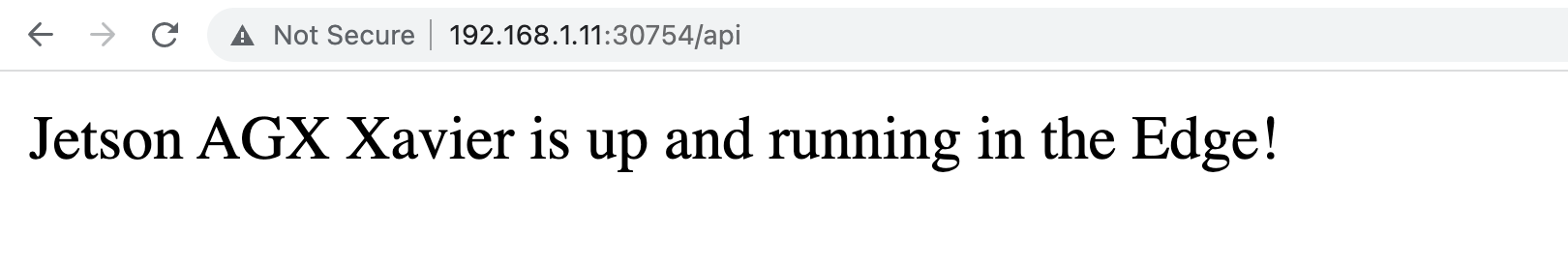

Our Python application will listen on port 5000 and serve only the path /api:

[egallen@xavier edgeflask]$ cat << EOF > edgeflask.py

from flask import Flask

app = Flask(__name__)

@app.route('/api')

def helloIndex():

    return 'Jetson AGX Xavier is up and running in the Edge!'

app.run(host='0.0.0.0', port=5000)

EOF

To prepare my Dockerfile, I’m testing some commands with podman on the Python 3.6 ubi8 image:

[egallen@xavier edgeflask]$ podman run -it registry.redhat.io/ubi8/python-36 bash

(app-root) cat /etc/redhat-release

Red Hat Enterprise Linux release 8.5 (Ootpa)

(app-root) pip install flask

Collecting flask

Downloading Flask-2.0.2-py3-none-any.whl (95 kB)

|████████████████████████████████| 95 kB 3.2 MB/s

...

We prepare a simple requirements.txt file based on the pip command tested in the ubi8 image:

[egallen@xavier edgeflask]$ cat << EOF > requirements.txt

flask

EOF

We can assemble this in a Dockerfile:

[egallen@xavier edgeflask]$ cat << EOF > Dockerfile

FROM registry.redhat.io/ubi8/python-36

ENV PORT 5000

EXPOSE 5000

WORKDIR /usr/src/app

COPY requirements.txt ./

RUN pip install --no-cache-dir -r requirements.txt

COPY . .

ENTRYPOINT ["python"]

CMD ["edgeflask.py"]

EOF

We are ready for the build:

[egallen@xavier edgeflask]$ ls

Dockerfile edgeflask.py requirements.txt

We can log in to registry.redhat.io to be able to pull the Python ubi8 image:

[egallen@xavier ~]$ buildah login registry.redhat.io

Username: myrhnuser

Password:

Login Succeeded!

Build the arm64 OCI image using a Dockerfile:

[egallen@xavier edgeflask]$ buildah build-using-dockerfile -t edgeflask:0.1 .

STEP 1/9: FROM registry.redhat.io/ubi8/python-36

STEP 2/9: ENV PORT 5000

STEP 3/9: EXPOSE 5000

STEP 4/9: WORKDIR /usr/src/app

STEP 5/9: COPY requirements.txt ./

STEP 6/9: RUN pip install --no-cache-dir -r requirements.txt

Collecting flask

Downloading Flask-2.0.2-py3-none-any.whl (95 kB)

Collecting Jinja2>=3.0

Downloading Jinja2-3.0.3-py3-none-any.whl (133 kB)

Collecting itsdangerous>=2.0

Downloading itsdangerous-2.0.1-py3-none-any.whl (18 kB)

Collecting click>=7.1.2

Downloading click-8.0.3-py3-none-any.whl (97 kB)

Collecting Werkzeug>=2.0

Downloading Werkzeug-2.0.2-py3-none-any.whl (288 kB)

Collecting importlib-metadata

Downloading importlib_metadata-4.8.3-py3-none-any.whl (17 kB)

Collecting MarkupSafe>=2.0

Downloading MarkupSafe-2.0.1-cp36-cp36m-manylinux_2_17_aarch64.manylinux2014_aarch64.whl (26 kB)

Collecting dataclasses

Downloading dataclasses-0.8-py3-none-any.whl (19 kB)

Collecting typing-extensions>=3.6.4

Downloading typing_extensions-4.0.1-py3-none-any.whl (22 kB)

Collecting zipp>=0.5

Downloading zipp-3.6.0-py3-none-any.whl (5.3 kB)

Installing collected packages: zipp, typing-extensions, MarkupSafe, importlib-metadata, dataclasses, Werkzeug, Jinja2, itsdangerous, click, flask

Successfully installed Jinja2-3.0.3 MarkupSafe-2.0.1 Werkzeug-2.0.2 click-8.0.3 dataclasses-0.8 flask-2.0.2 importlib-metadata-4.8.3 itsdangerous-2.0.1 typing-extensions-4.0.1 zipp-3.6.0

WARNING: You are using pip version 21.0.1; however, version 21.3.1 is available.

You should consider upgrading via the '/opt/app-root/bin/python3.6 -m pip install --upgrade pip' command.

STEP 7/9: COPY . .

STEP 8/9: ENTRYPOINT ["python"]

STEP 9/9: CMD ["edgeflask.py"]

COMMIT edgeflask:0.1

Getting image source signatures

Copying blob 7565b94f8be5 skipped: already exists

Copying blob 197717547a28 skipped: already exists

Copying blob d009a34469eb skipped: already exists

Copying blob d79e51d8fe48 skipped: already exists

Copying blob a6b666b75521 skipped: already exists

Copying blob b19c7c2f0853 done

Copying config 8bdd14df56 done

Writing manifest to image destination

Storing signatures

The image is available locally:

[egallen@xavier edgeflask]$ buildah images

REPOSITORY TAG IMAGE ID CREATED SIZE

localhost/edgeflask 0.1 8bdd14df5632 3 minutes ago 875 MB

Push the application image to the Quay external registry

We can log in to our favorite registry. I’m picking the Quay registry, quay.io:

[egallen@xavier ~]$ buildah login quay.io

Username: egallen+token

Password:

Login Succeeded!

We can push our image to the Quay registry:

[egallen@xavier edgeflask]$ buildah push edgeflask:0.1 quay.io/egallen/edgeflask:0.1

Getting image source signatures

Copying blob b19c7c2f0853 done

Copying blob d79e51d8fe48 skipped: already exists

Copying blob a6b666b75521 skipped: already exists

Copying blob 197717547a28 skipped: already exists

Copying blob 7565b94f8be5 skipped: already exists

Copying blob d009a34469eb skipped: already exists

Copying config 8bdd14df56 done

Writing manifest to image destination

Copying config 8bdd14df56 done

Writing manifest to image destination

Storing signatures
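The push target quay.io/egallen/edgeflask:0.1 is made of a registry host, a namespace, a repository name, and a tag. As an illustration, here is a small sketch that splits such a reference; split_image_ref is a hypothetical helper, and it only handles this simple form (no digests, no registry port):

```python
from typing import NamedTuple


class ImageRef(NamedTuple):
    registry: str
    namespace: str
    name: str
    tag: str


def split_image_ref(ref: str) -> ImageRef:
    """Split 'registry/namespace/name:tag' into its parts.

    Only this simple form is handled; digests (@sha256:...) and
    registries with a port are out of scope for this sketch.
    """
    path, sep, tag = ref.rpartition(":")
    if not sep:
        # No tag given: container tools default to 'latest'.
        path, tag = ref, "latest"
    parts = path.split("/")
    if len(parts) != 3:
        raise ValueError(f"expected registry/namespace/name[:tag], got {ref!r}")
    return ImageRef(parts[0], parts[1], parts[2], tag)


if __name__ == "__main__":
    print(split_image_ref("quay.io/egallen/edgeflask:0.1"))
```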

Deploy the application on Kubernetes

We can create a Kubernetes Service object that external clients can use to access the application Deployment.

Deployment scenario:

- Run four instances of the Flask application

- Create a Service object that exposes a node port

- Use the Service object to access the running application

We can prepare the application Deployment YAML:

[egallen@xavier edgeflask]$ cat << EOF > edgeflask.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

  name: edgeflask

spec:

  selector:

    matchLabels:

      run: load-balancer-edgeflask

  replicas: 4

  template:

    metadata:

      labels:

        run: load-balancer-edgeflask

    spec:

      containers:

      - name: edgeflask

        image: quay.io/egallen/edgeflask:0.1

        ports:

        - containerPort: 5000

          protocol: TCP

EOF
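The nesting of the Deployment fields matters: the labels under the selector must also be present on the Pod template, otherwise the API server rejects the Deployment. The sketch below builds the same manifest as a Python dictionary and checks that rule before printing it as JSON. The function names build_deployment and selector_matches_template are hypothetical helpers written for this illustration:

```python
import json


def build_deployment(name, image, replicas, port, labels):
    """Return an apps/v1 Deployment manifest as a plain dict."""
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "selector": {"matchLabels": dict(labels)},
            "replicas": replicas,
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port, "protocol": "TCP"}],
                        }
                    ]
                },
            },
        },
    }


def selector_matches_template(manifest):
    """True if every selector label is present on the Pod template."""
    wanted = manifest["spec"]["selector"]["matchLabels"]
    actual = manifest["spec"]["template"]["metadata"]["labels"]
    return all(actual.get(key) == value for key, value in wanted.items())


# Same values as in edgeflask.yaml.
manifest = build_deployment(
    name="edgeflask",
    image="quay.io/egallen/edgeflask:0.1",
    replicas=4,
    port=5000,
    labels={"run": "load-balancer-edgeflask"},
)
assert selector_matches_template(manifest)
print(json.dumps(manifest, indent=2))
```

The API accepts JSON as well as YAML, so the printed document could be saved to a file and applied with oc apply -f in the same way.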

We can create the Deployment with the associated ReplicaSet. The ReplicaSet has four Pods, each of which runs the Flask application.

[egallen@xavier edgeflask]$ oc apply -f edgeflask.yaml

deployment.apps/edgeflask created

Once the deployment is complete, we can display information about the Deployment:

[egallen@xavier edgeflask]$ oc get deployments

NAME READY UP-TO-DATE AVAILABLE AGE

edgeflask 4/4 4 4 7s

[egallen@xavier edgeflask]$ oc describe deployments edgeflask

Name: edgeflask

Namespace: default

CreationTimestamp: Tue, 11 Jan 2022 01:15:56 +0100

Labels: <none>

Annotations: deployment.kubernetes.io/revision: 1

Selector: run=load-balancer-edgeflask

Replicas: 4 desired | 4 updated | 4 total | 4 available | 0 unavailable

StrategyType: RollingUpdate

MinReadySeconds: 0

RollingUpdateStrategy: 25% max unavailable, 25% max surge

Pod Template:

Labels: run=load-balancer-edgeflask

Containers:

edgeflask:

Image: quay.io/egallen/edgeflask:0.1

Port: 5000/TCP

Host Port: 0/TCP

Environment: <none>

Mounts: <none>

Volumes: <none>

Conditions:

Type Status Reason

---- ------ ------

Available True MinimumReplicasAvailable

Progressing True NewReplicaSetAvailable

OldReplicaSets: <none>

NewReplicaSet: edgeflask-976f7f755 (4/4 replicas created)

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal ScalingReplicaSet 4m55s deployment-controller Scaled up replica set edgeflask-976f7f755 to 4

All pods are running:

[egallen@xavier edgeflask]$ oc get pods

NAME READY STATUS RESTARTS AGE

edgeflask-976f7f755-6b88l 1/1 Running 0 11s

edgeflask-976f7f755-7z2sd 1/1 Running 0 11s

edgeflask-976f7f755-hqt66 1/1 Running 0 11s

edgeflask-976f7f755-q8bjt 1/1 Running 0 11s

We can check the logs of one pod:

[egallen@xavier edgeflask]$ oc logs edgeflask-976f7f755-6b88l

* Serving Flask app 'edgeflask' (lazy loading)

* Environment: production

WARNING: This is a development server. Do not use it in a production deployment.

Use a production WSGI server instead.

* Debug mode: off

* Running on all addresses.

WARNING: This is a development server. Do not use it in a production deployment.

* Running on http://10.42.0.54:5000/ (Press CTRL+C to quit)

We can create a Service object that exposes the deployment with a NodePort:

[egallen@xavier edgeflask]$ oc expose deployment edgeflask --type=NodePort --name=edgeflask-service

service/edgeflask-service exposed

The edgeflask Service is exposed on node port 30754, which maps to port 5000 of the Pods:

[egallen@xavier edgeflask]$ oc get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

edgeflask-service NodePort 10.43.108.178 <none> 5000:30754/TCP 9s

kubernetes ClusterIP 10.43.0.1 <none> 443/TCP 6d13h

openshift-apiserver ClusterIP None <none> 443/TCP 6d13h

openshift-oauth-apiserver ClusterIP None <none> 443/TCP 6d13h
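In the PORT(S) column, 5000:30754/TCP reads as service port, then node port, then protocol. A small sketch of how this cell could be parsed in a script; parse_node_port is a hypothetical helper name, and the 30000-32767 range is the Kubernetes default, which a cluster can reconfigure:

```python
DEFAULT_NODE_PORT_RANGE = range(30000, 32768)  # Kubernetes default, configurable per cluster


def parse_node_port(ports_column: str):
    """Parse a PORT(S) cell such as '5000:30754/TCP' into (port, node_port, protocol).

    node_port is None for entries without a node port, e.g. '443/TCP'.
    """
    mapping, _, protocol = ports_column.partition("/")
    port, sep, node_port = mapping.partition(":")
    return int(port), (int(node_port) if sep else None), protocol or "TCP"


if __name__ == "__main__":
    port, node_port, protocol = parse_node_port("5000:30754/TCP")
    print(port, node_port, protocol, node_port in DEFAULT_NODE_PORT_RANGE)
```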

We can check the edgeflask-service status:

[egallen@xavier edgeflask]$ oc describe services edgeflask-service

Name: edgeflask-service

Namespace: default

Labels: <none>

Annotations: <none>

Selector: run=load-balancer-edgeflask

Type: NodePort

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.43.108.178

IPs: 10.43.108.178

Port: <unset> 5000/TCP

TargetPort: 5000/TCP

NodePort: <unset> 30754/TCP

Endpoints: 10.42.0.51:5000,10.42.0.52:5000,10.42.0.53:5000 + 1 more...

Session Affinity: None

External Traffic Policy: Cluster

Events: <none>

The service is running and is reachable from another machine on the network, using the node IP address and the node port:

egallen@macbook ~ % curl http://192.168.1.11:30754/api

Jetson AGX Xavier is up and running in the Edge!
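The same check can be scripted, for example to poll the service after a rollout. This is a minimal sketch using only the Python standard library; fetch_text and service_is_healthy are hypothetical helper names, and the URL in the comment is the node IP and node port from my setup:

```python
import urllib.request


def fetch_text(url: str, timeout: float = 5.0) -> str:
    """GET the URL and return the response body decoded as UTF-8."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8")


def service_is_healthy(url: str, expected: str, timeout: float = 5.0) -> bool:
    """True if the endpoint answers and the body contains the expected text."""
    try:
        return expected in fetch_text(url, timeout)
    except OSError:
        # URLError and socket timeouts are OSError subclasses.
        return False


# Example call against the NodePort shown above (adapt the address to your node):
# service_is_healthy("http://192.168.1.11:30754/api", "up and running")
```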