Open Data Hub v0.5.1 release

Open Data Hub v0.5.1 was released February 16, 2020.

Release note: https://opendatahub.io/news/2020-02-16/odh-release-0.5.1-blog.html

Open Data Hub includes many tools that are essential to a comprehensive end-to-end AI/ML platform. This release integrates bug fixes that resolve issues when deploying on OpenShift Container Platform v4.3, and the JupyterHub deployment and Spark cluster resources are now more customizable. Let’s try the data science tools with JupyterHub 3.0.7 on OpenShift 4.3.

First, open an OpenShift 4.3 console.

Install the Open Data Hub Operator

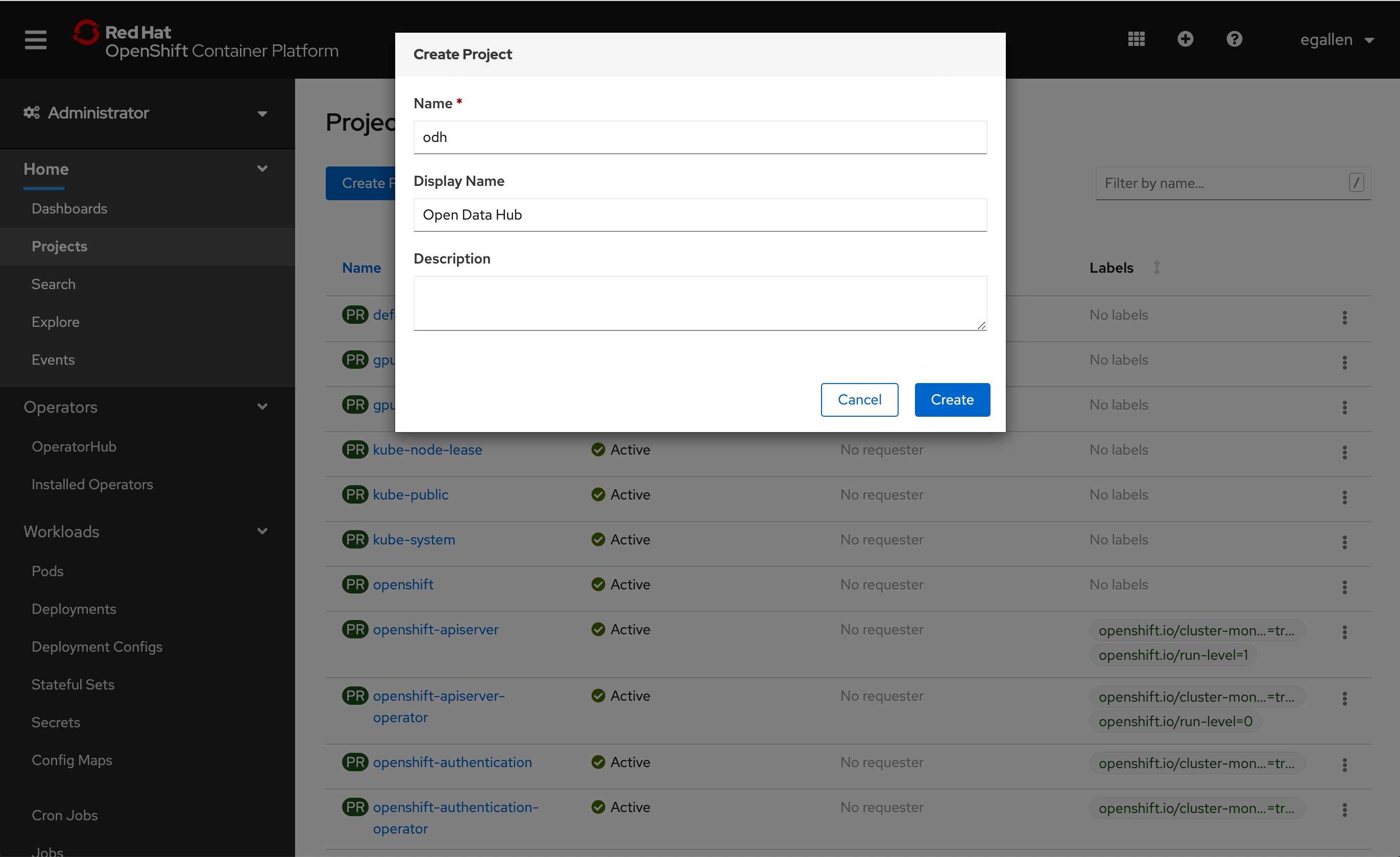

Create a project “odh”:

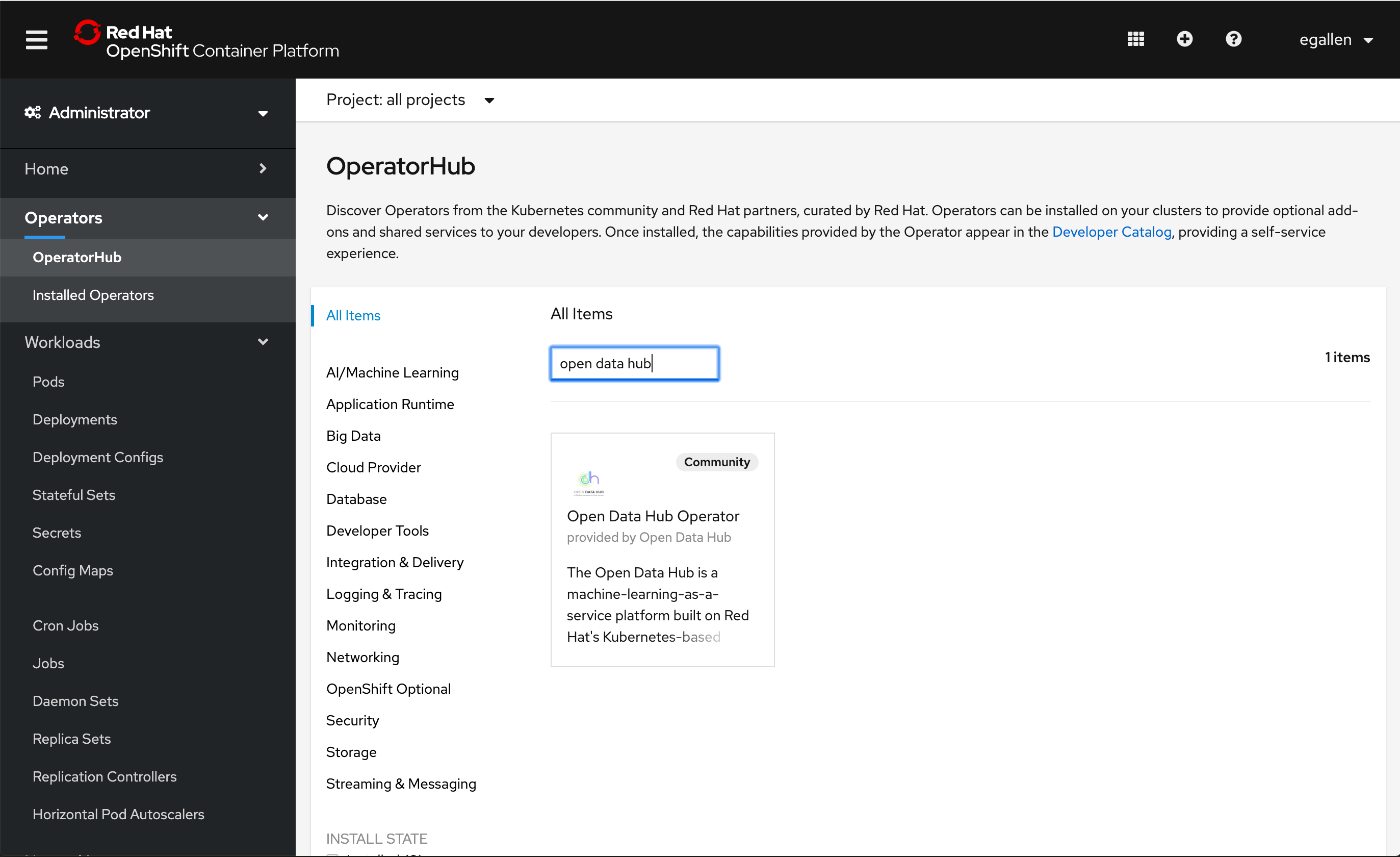

Go to Operators > OperatorHub and search for “open data hub”:

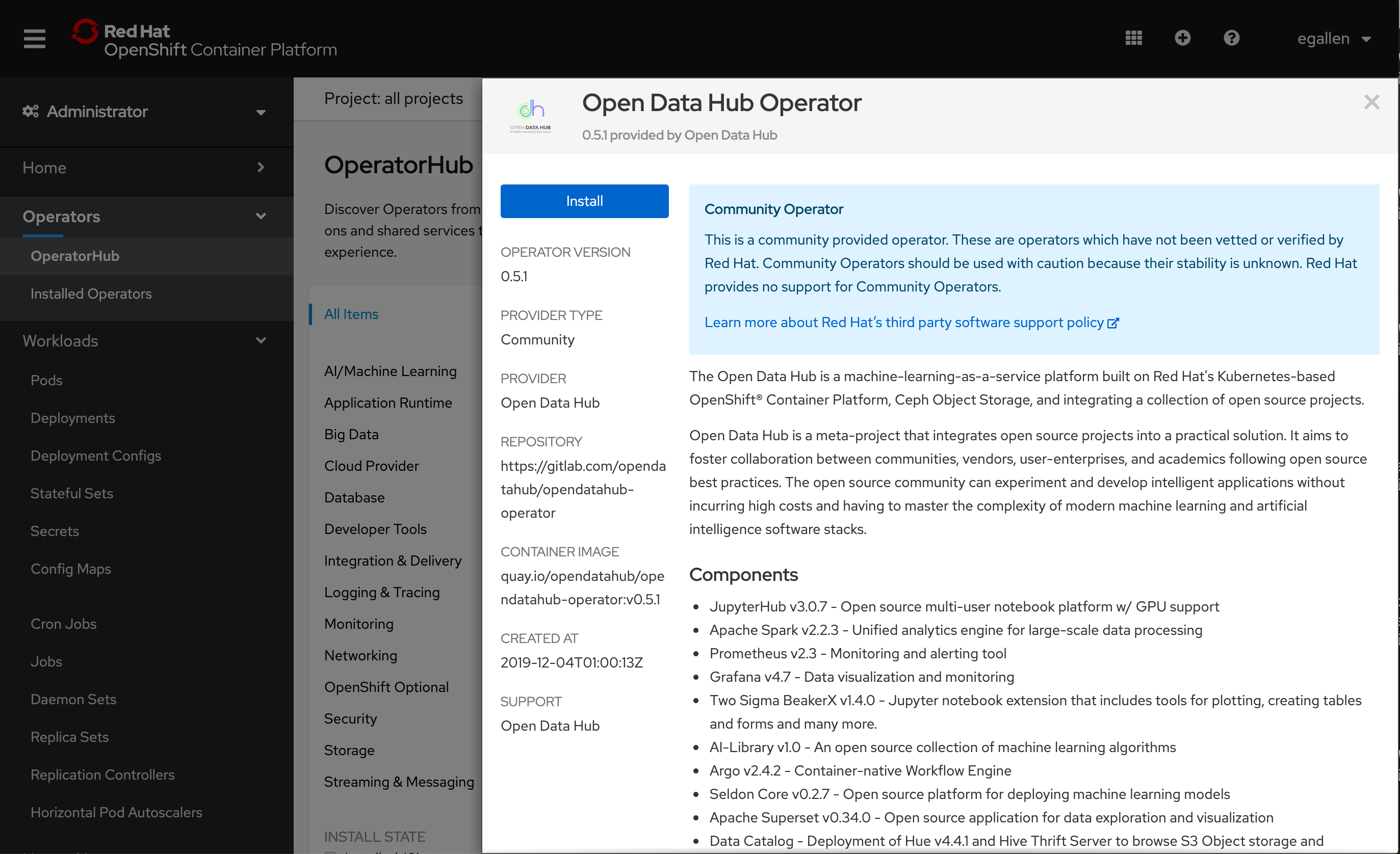

Click on “Install”:

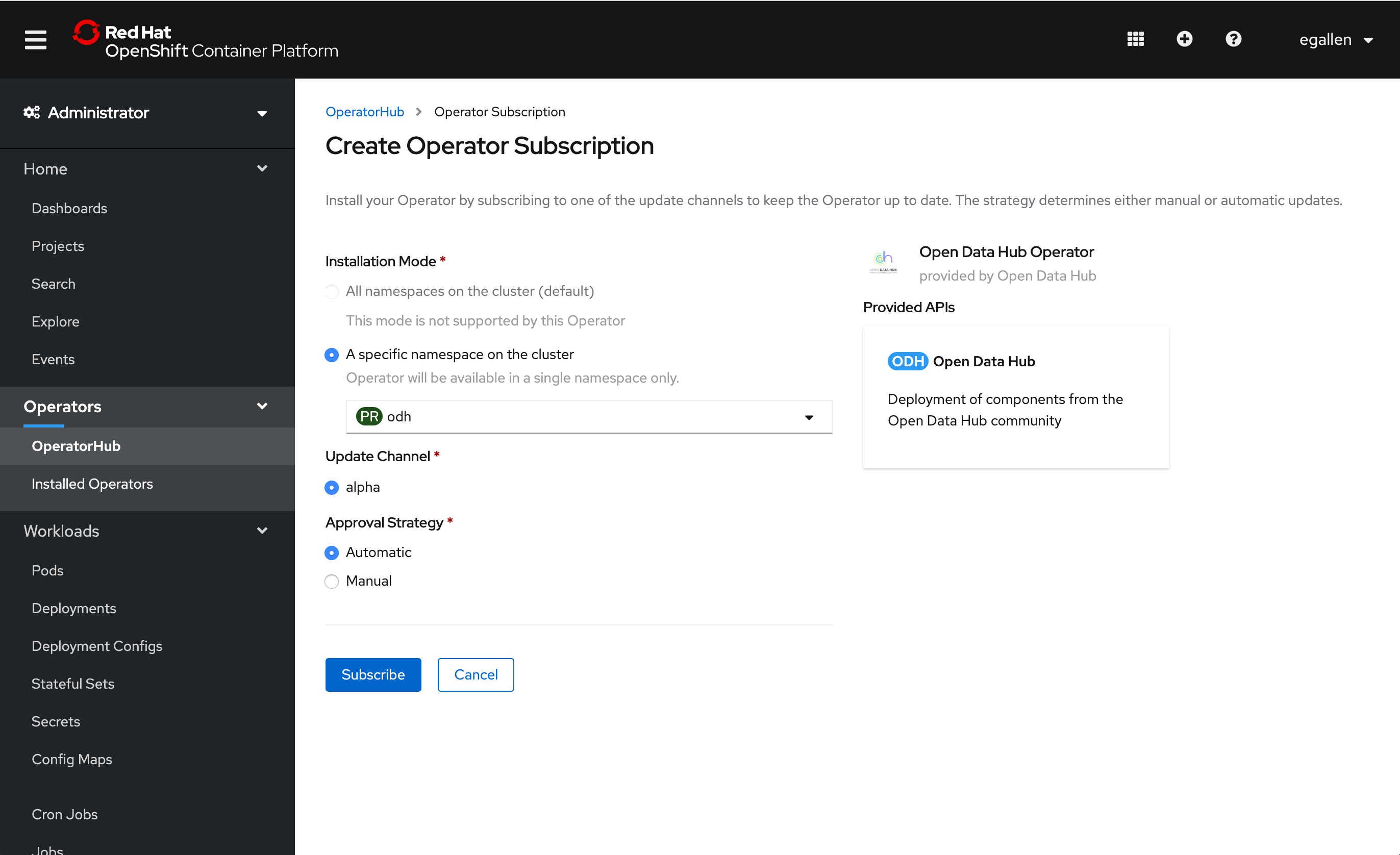

Click on “Subscribe”:

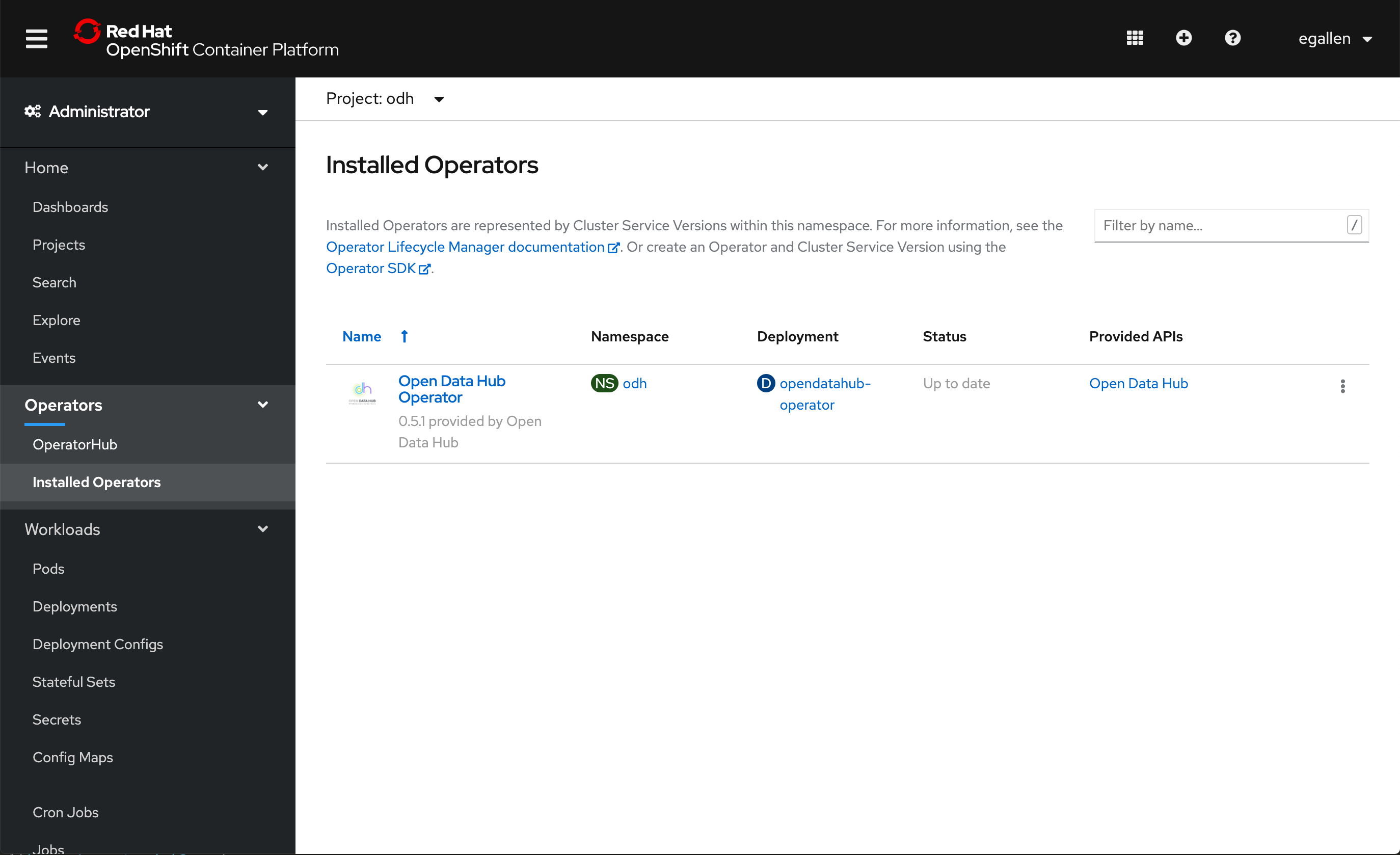

Open Data Hub v0.5.1 is installed:

Create a New Open Data Hub deployment

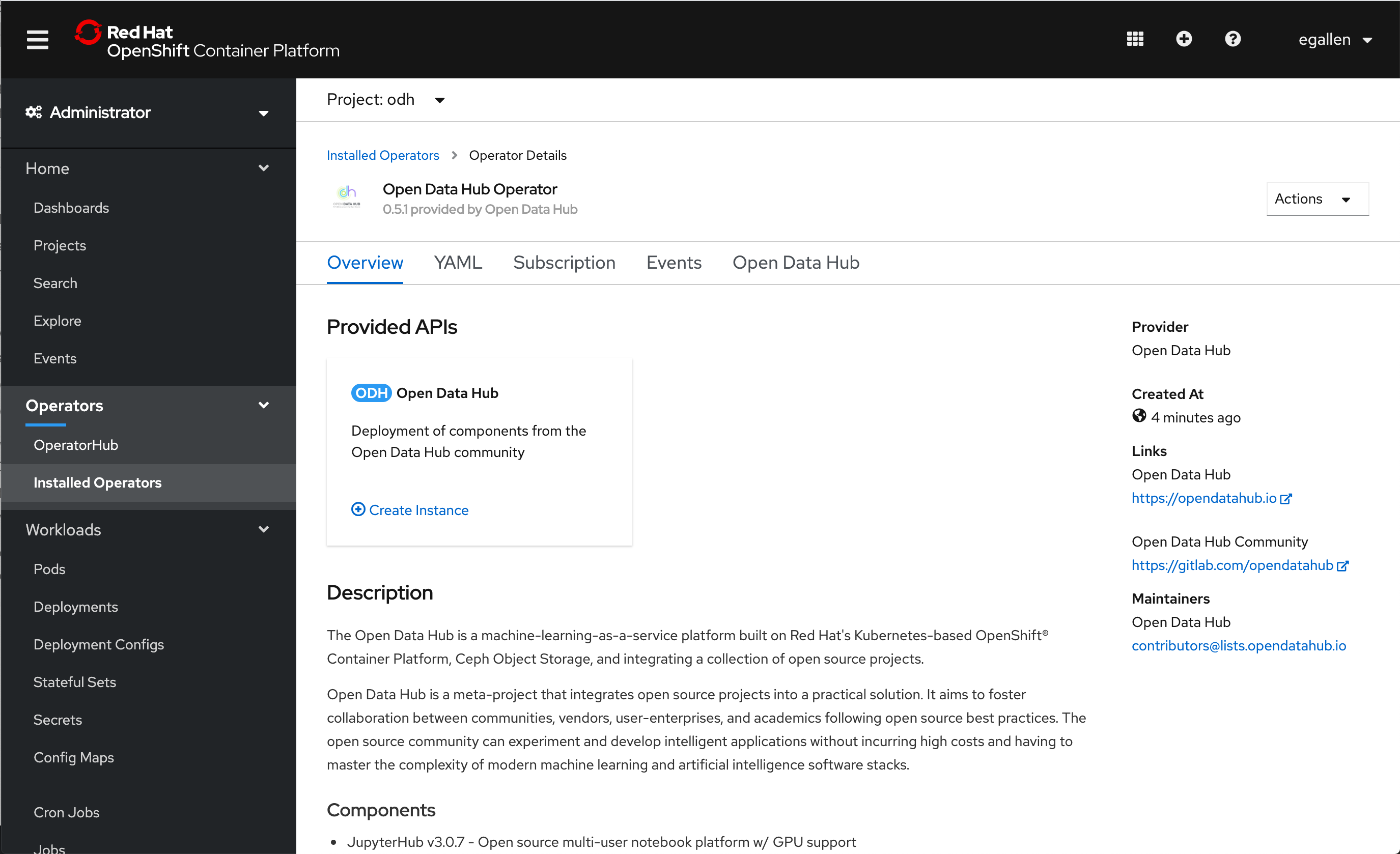

Click on “Create Instance”:

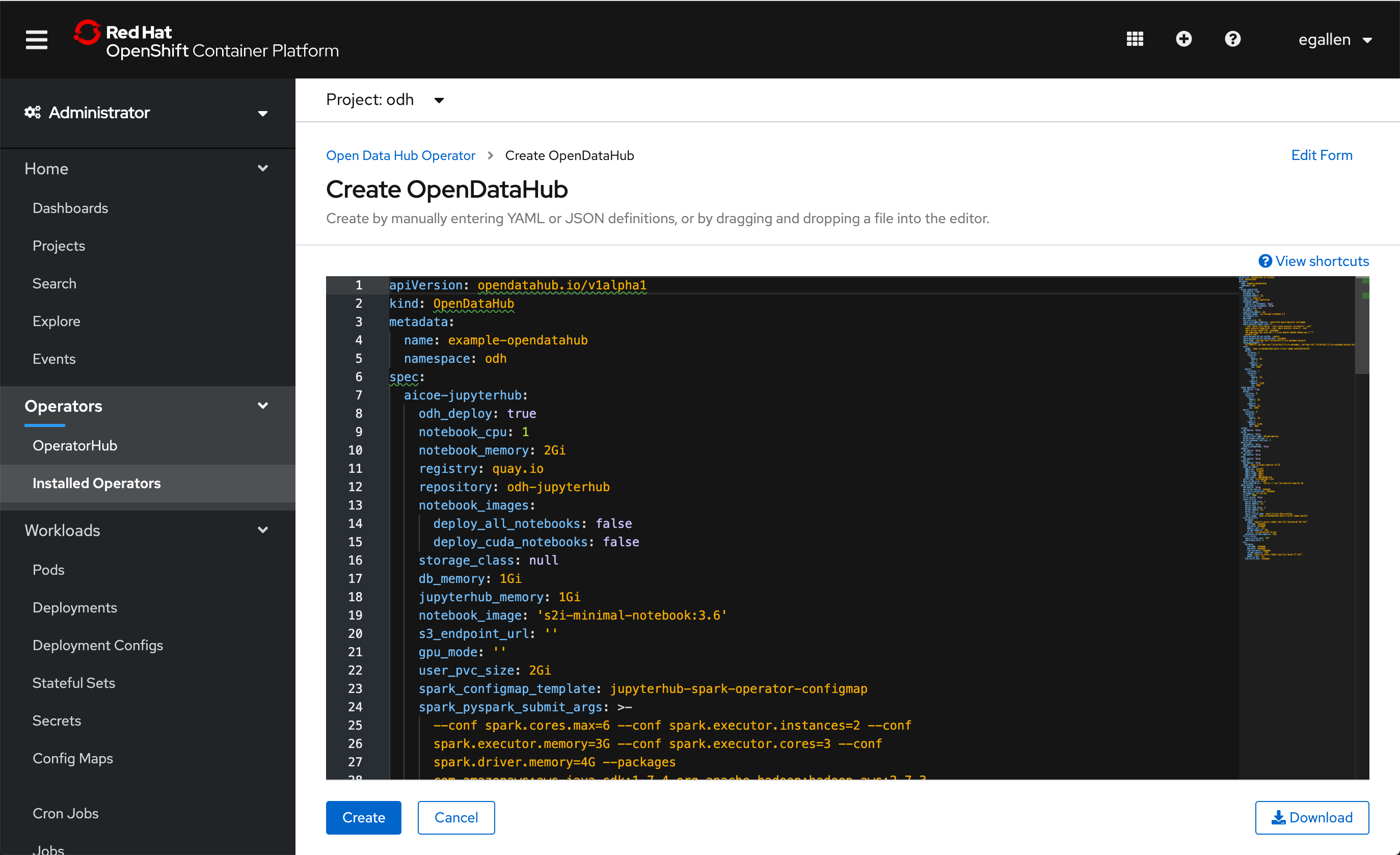

Click on “Create”:

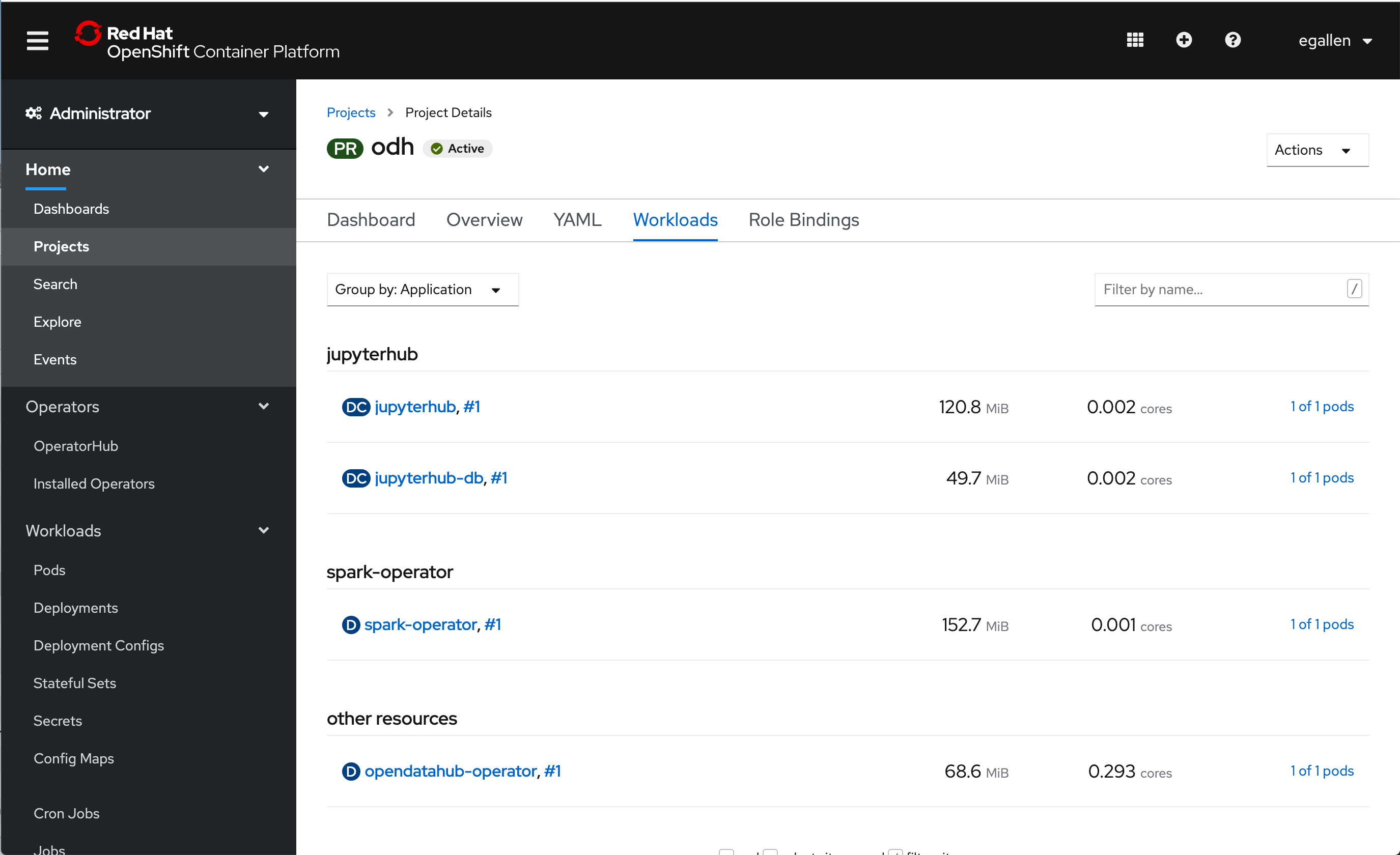

When the instance is created, you can check the running workloads in “Home” > “Projects” > “odh”, on the “Workloads” tab:

Test Jupyter Hub

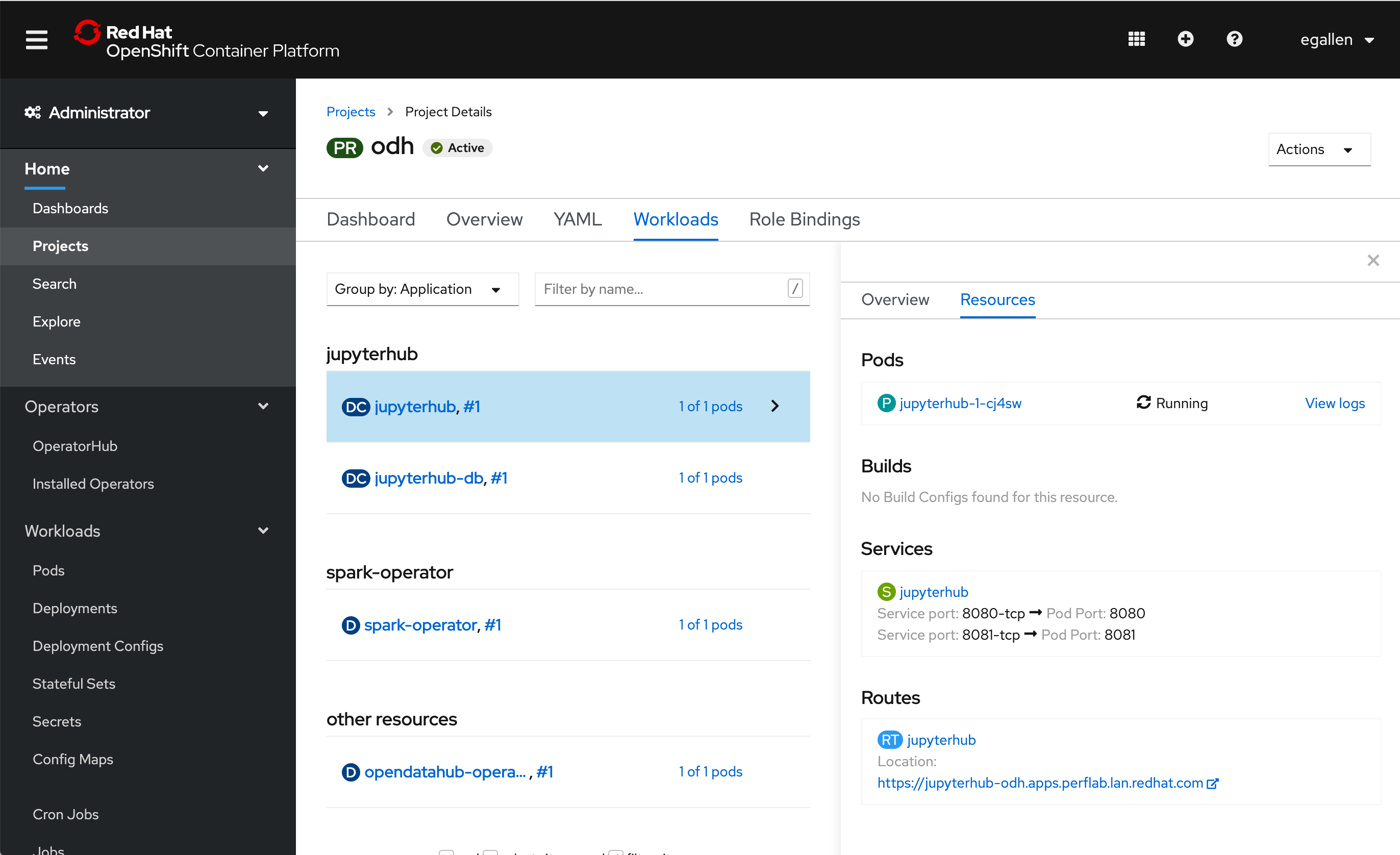

Click on the “jupyterhub” workload, then on the “Resources” tab, where you can copy the configured route.

The route is “https://jupyterhub-odh.apps.perflab.lan.redhat.com”; copy this URL:

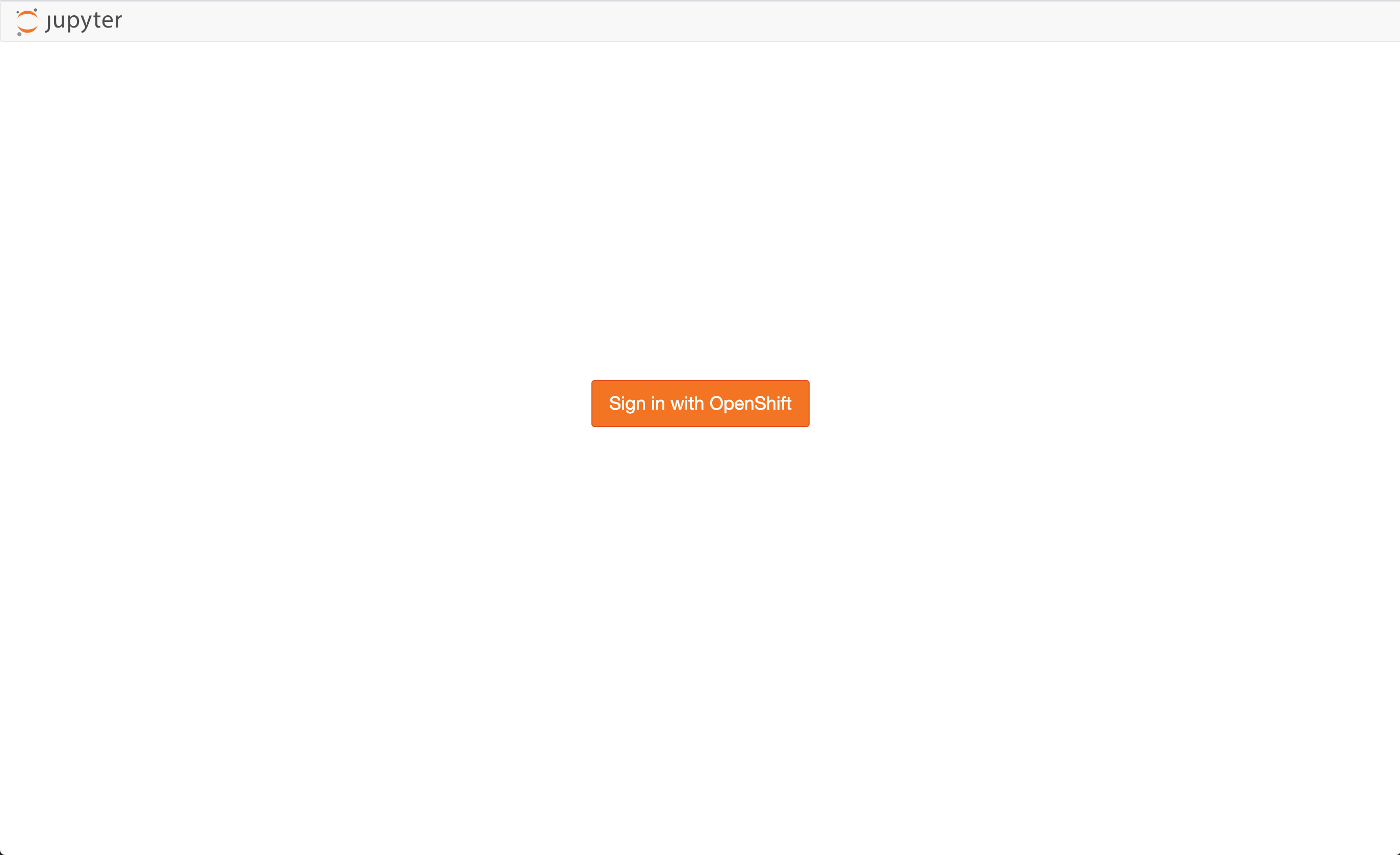
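The route URL above follows the pattern OpenShift uses when a route is created without an explicit host: the route name, a dash, the project name, then the cluster's application domain. A minimal sketch of that pattern, assuming the route is named "jupyterhub" (inferred from the URL) and using a hypothetical helper name:

```python
def default_route_host(route_name, namespace, apps_domain):
    """Build the host OpenShift generates for a route with no explicit host.

    Assumption: the route has no custom host set; the helper name and
    parameters are illustrative and not part of any OpenShift API.
    """
    return "{}-{}.{}".format(route_name, namespace, apps_domain)


# Values taken from this walkthrough: project "odh" on the perflab cluster.
url = "https://" + default_route_host(
    "jupyterhub", "odh", "apps.perflab.lan.redhat.com"
)
print(url)
```

On another cluster only the application domain should differ, so the same project name gives a predictable JupyterHub URL.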

In a new browser tab, paste and open the JupyterHub URL, then click on “Sign in with OpenShift”:

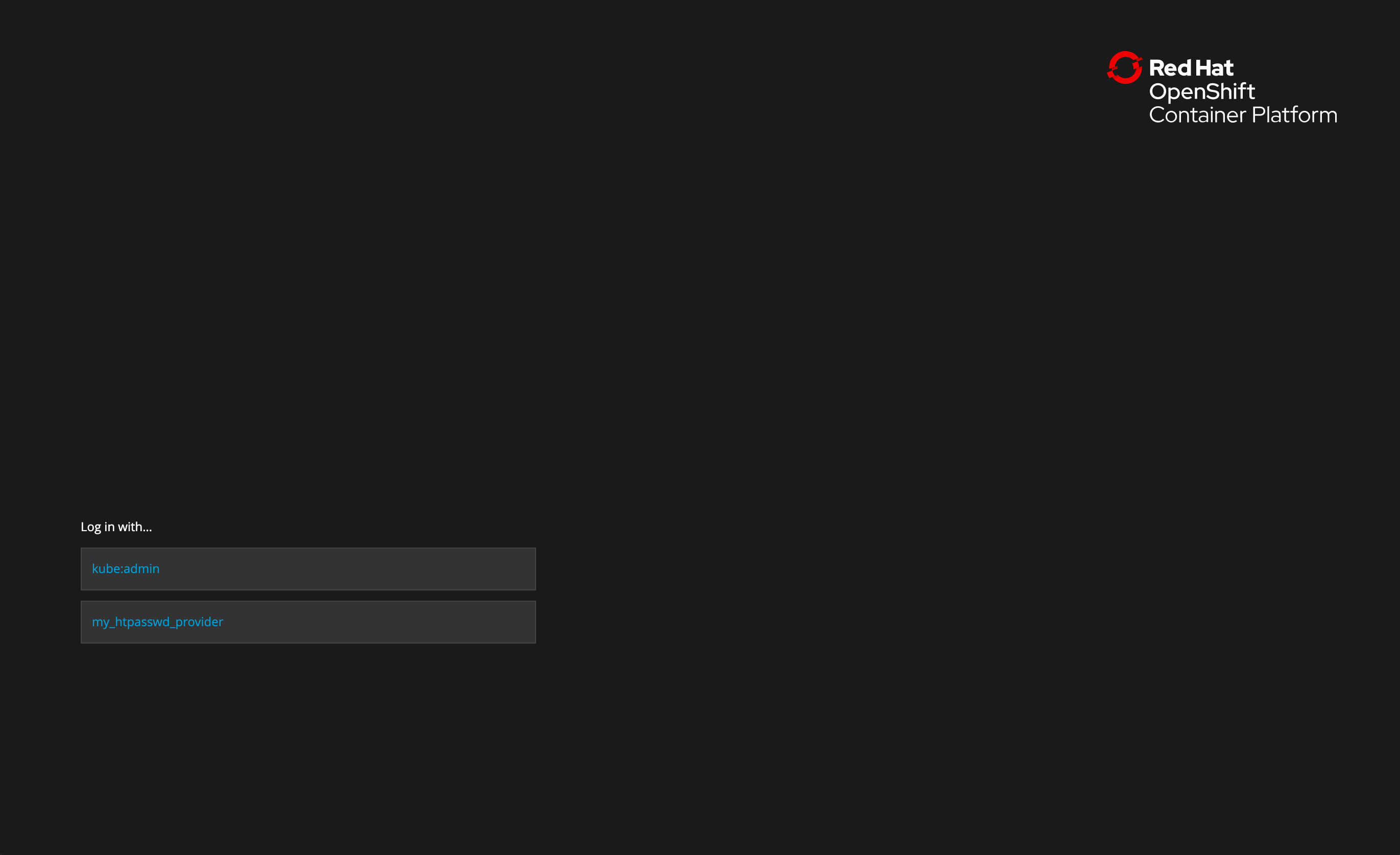

Choose the provider:

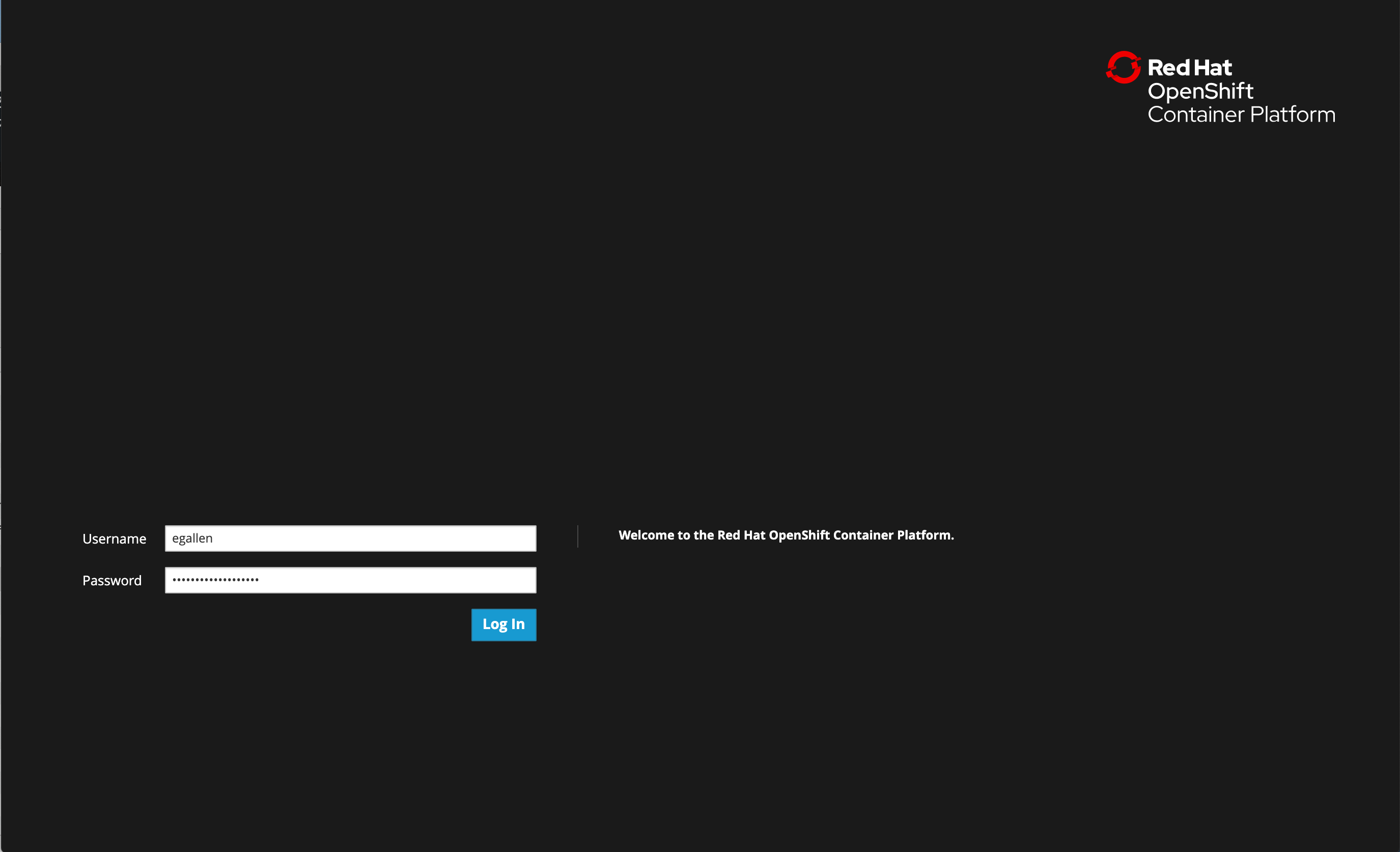

Type your credentials:

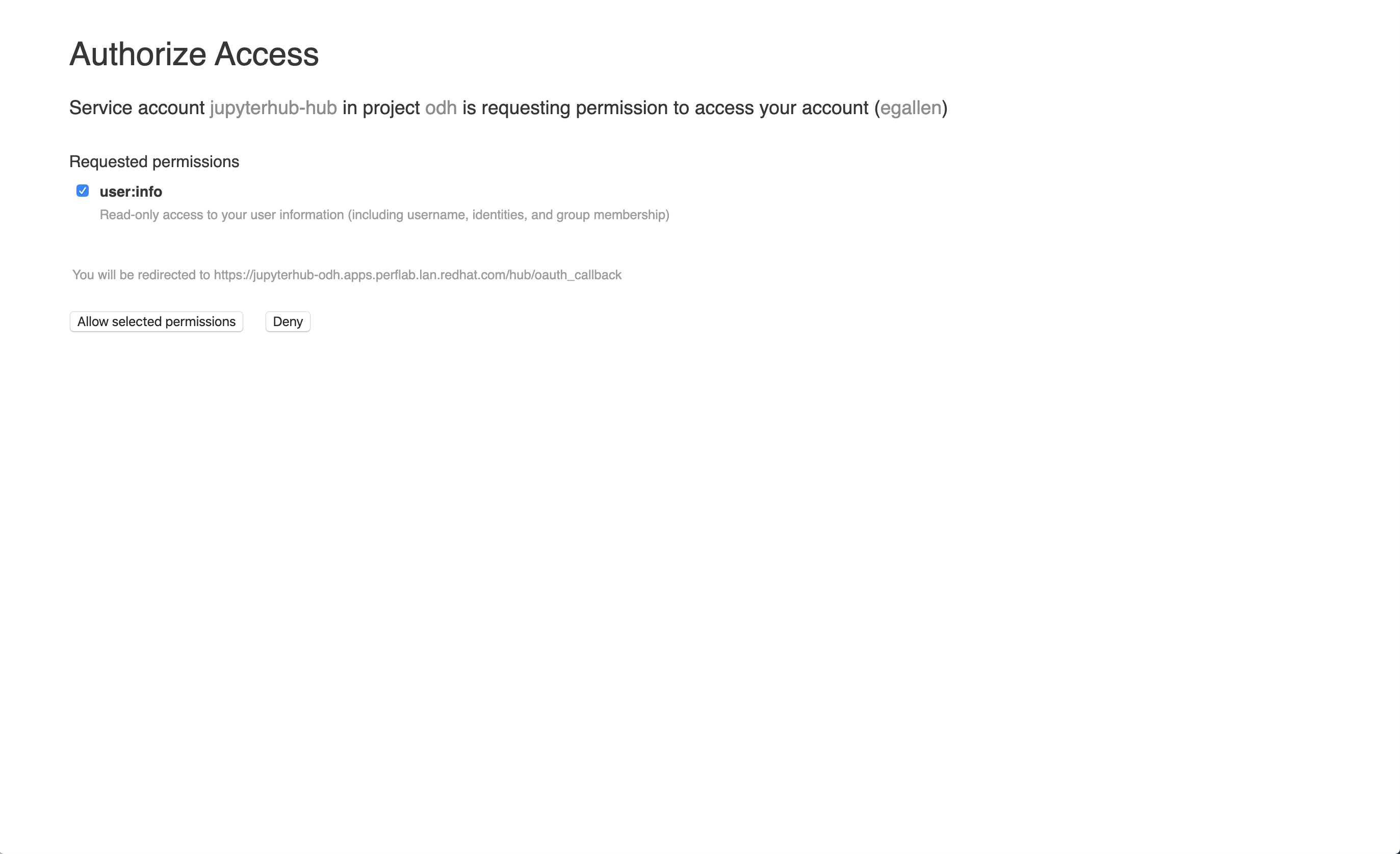

Authorize access by clicking on “Allow selected permissions”:

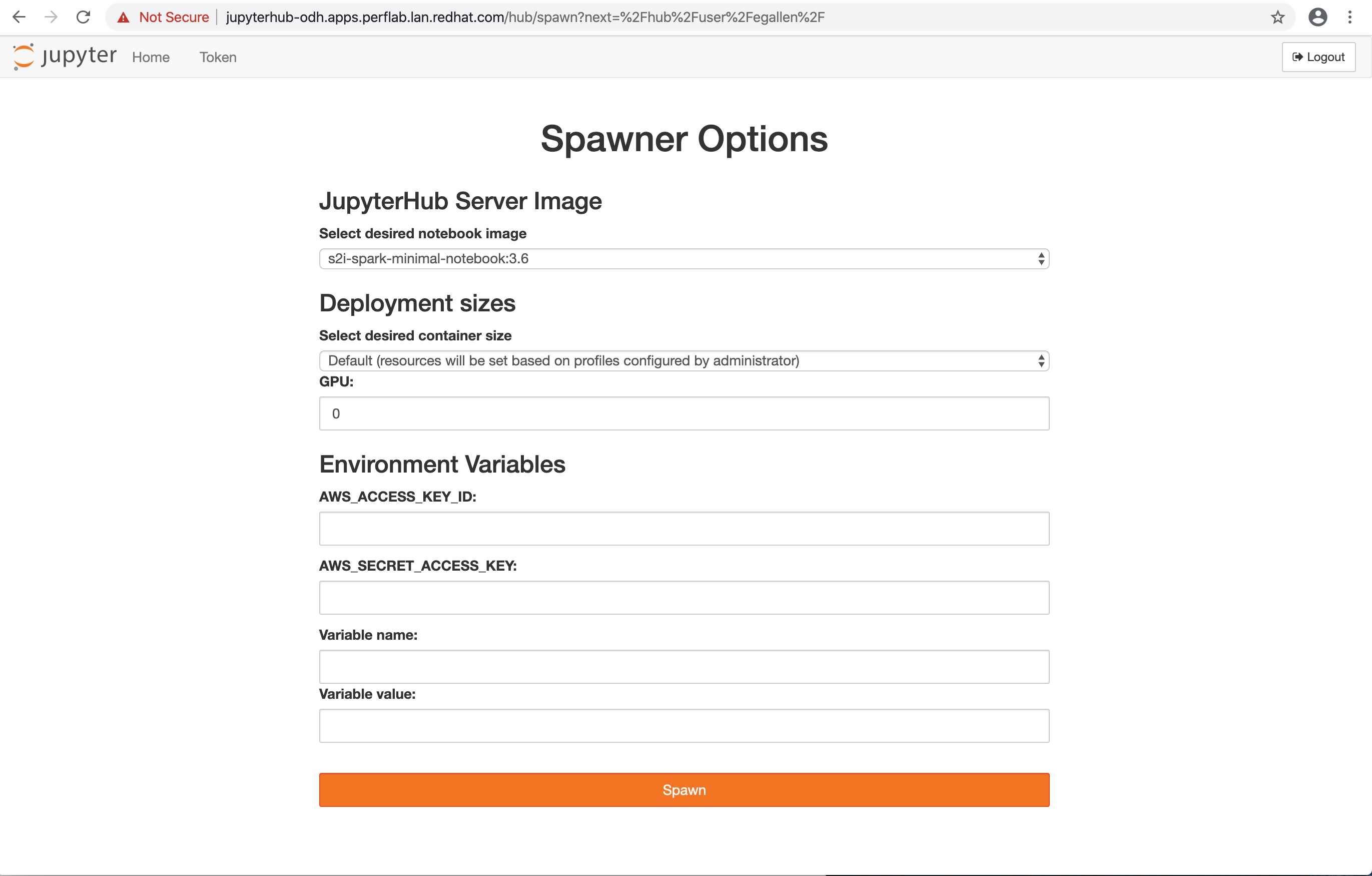

The JupyterHub form is now available to configure your first Jupyter notebook.

First, launch a notebook without a GPU:

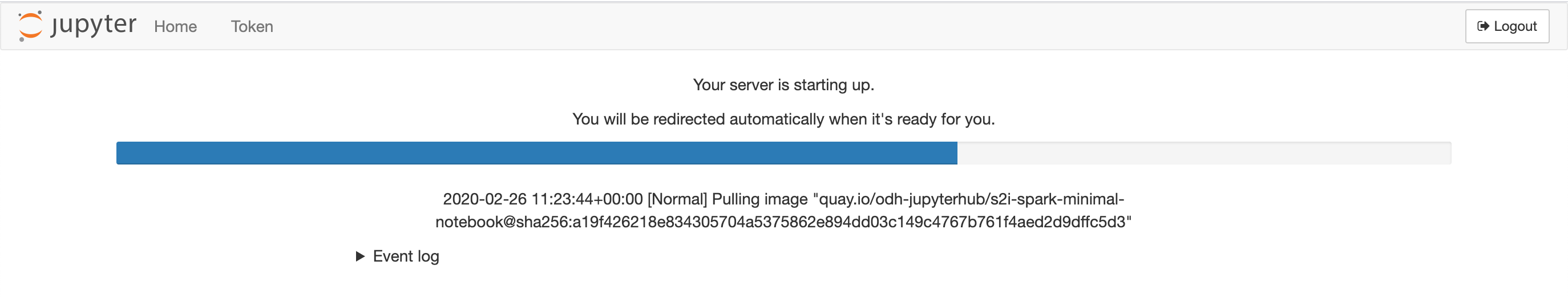

The server is starting up:

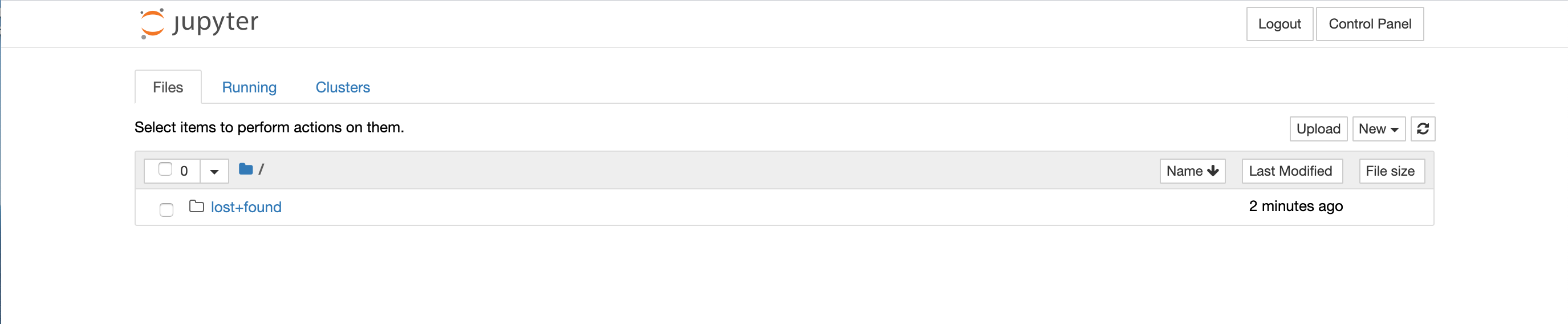

The Notebook is available:

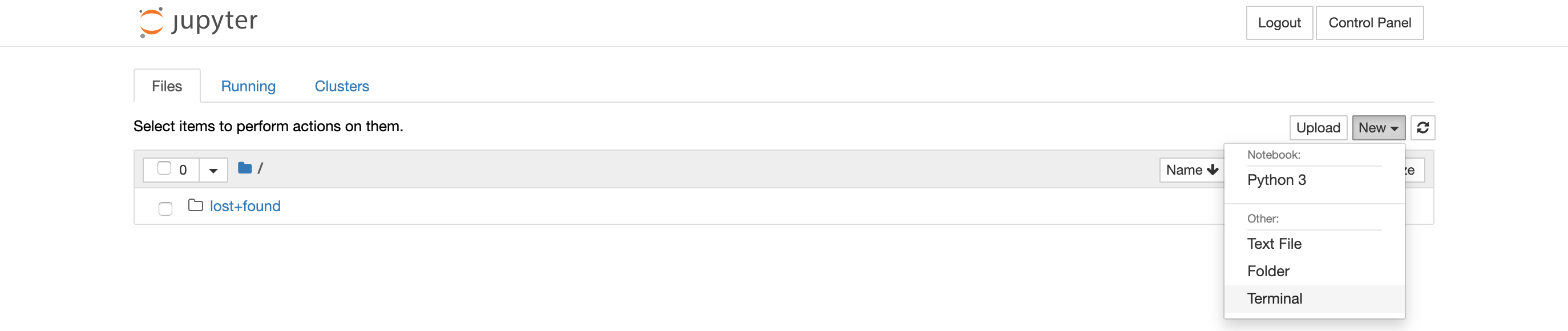

Launch a Terminal:

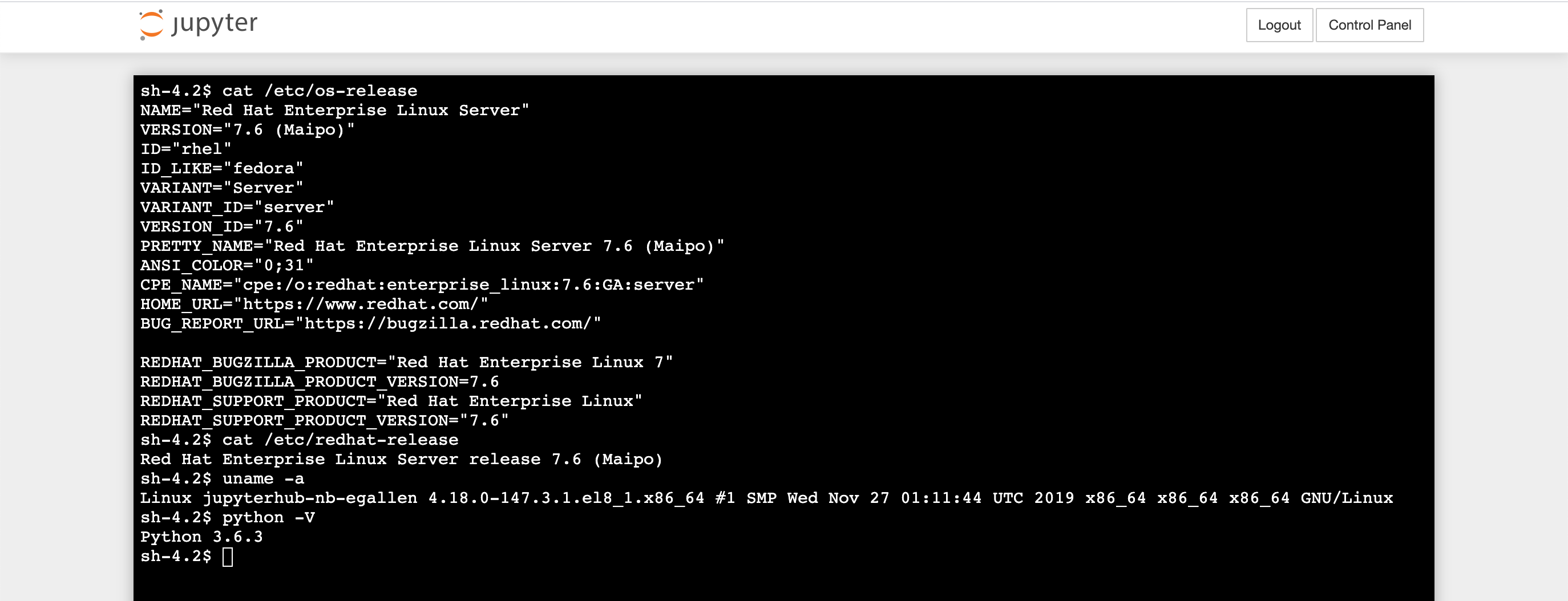

Check the UBI minimal image:

Check the pods running in the odh project:

    [stack@perflab-director ~]$ oc project odh
    Now using project "odh" on server "https://api.perflab.lan.redhat.com:6443".
    [stack@perflab-director ~]$ oc get pods
    NAME                                   READY   STATUS      RESTARTS   AGE
    jupyterhub-1-cj4sw                     1/1     Running     0          119m
    jupyterhub-1-deploy                    0/1     Completed   0          120m
    jupyterhub-db-1-64pl4                  1/1     Running     0          119m
    jupyterhub-db-1-deploy                 0/1     Completed   0          120m
    jupyterhub-nb-egallen                  2/2     Running     0          88m
    opendatahub-operator-59985b769-2nrpn   1/1     Running     0          127m
    spark-cluster-egallen-m-ln2qz          1/1     Running     0          88m
    spark-cluster-egallen-w-f8dtc          1/1     Running     0          88m
    spark-cluster-egallen-w-gskm6          1/1     Running     0          88m
    spark-operator-8485787fd8-z4j5m        1/1     Running     0          119m
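Reading the pod list by eye works for a handful of pods. As a rough sketch, the same check can be scripted by parsing the text that `oc get pods` prints and flagging any pod whose status is neither Running nor Completed. The function name and the shortened sample below are illustrative assumptions, and the parsing relies on the default column layout shown above:

```python
def unhealthy_pods(oc_output):
    """Return (name, status) pairs for pods that are not Running/Completed.

    Assumption: `oc_output` is the plain-text table printed by
    `oc get pods`, with a header row containing NAME and STATUS.
    """
    lines = [ln for ln in oc_output.splitlines() if ln.strip()]
    header, rows = lines[0].split(), lines[1:]
    name_idx = header.index("NAME")
    status_idx = header.index("STATUS")
    bad = []
    for row in rows:
        cols = row.split()
        if cols[status_idx] not in ("Running", "Completed"):
            bad.append((cols[name_idx], cols[status_idx]))
    return bad


# Shortened copy of the output shown in this walkthrough.
SAMPLE = """\
NAME                     READY   STATUS      RESTARTS   AGE
jupyterhub-1-cj4sw       1/1     Running     0          119m
jupyterhub-1-deploy      0/1     Completed   0          120m
jupyterhub-nb-egallen    2/2     Running     0          88m
"""

print(unhealthy_pods(SAMPLE))
```

An empty list means every pod is either running or a finished deploy pod, which matches the state shown above.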

If you don’t want to use the Terminal UI, you can rsh into the container:

    [stack@perflab-director ~]$ oc rsh jupyterhub-nb-egallen
    Defaulting container name to notebook.
    Use 'oc describe pod/jupyterhub-nb-egallen -n odh' to see all of the containers in this pod.
    (app-root) sh-4.2$

Check the default JupyterHub notebook image s2i-spark-minimal-notebook:3.6:

    sh-4.2$ cat /etc/os-release
    NAME="Red Hat Enterprise Linux Server"
    VERSION="7.6 (Maipo)"
    ID="rhel"
    ID_LIKE="fedora"
    VARIANT="Server"
    VARIANT_ID="server"
    VERSION_ID="7.6"
    PRETTY_NAME="Red Hat Enterprise Linux Server 7.6 (Maipo)"
    ANSI_COLOR="0;31"
    CPE_NAME="cpe:/o:redhat:enterprise_linux:7.6:GA:server"
    HOME_URL="https://www.redhat.com/"
    BUG_REPORT_URL="https://bugzilla.redhat.com/"
    REDHAT_BUGZILLA_PRODUCT="Red Hat Enterprise Linux 7"
    REDHAT_BUGZILLA_PRODUCT_VERSION=7.6
    REDHAT_SUPPORT_PRODUCT="Red Hat Enterprise Linux"
    REDHAT_SUPPORT_PRODUCT_VERSION="7.6"
    sh-4.2$ cat /etc/redhat-release
    Red Hat Enterprise Linux Server release 7.6 (Maipo)
    sh-4.2$ uname -a
    Linux jupyterhub-nb-egallen 4.18.0-147.3.1.el8_1.x86_64 #1 SMP Wed Nov 27 01:11:44 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux
    sh-4.2$ python -V
    Python 3.6.3
    sh-4.2$ ls /etc/yum.repos.d/
    redhat.repo  ubi.repo
    sh-4.2$ cat /etc/yum.repos.d/ubi.repo | grep -A5 "\[ubi-7\]"
    [ubi-7]
    name = Red Hat Universal Base Image 7 Server (RPMs)
    baseurl = https://cdn-ubi.redhat.com/content/public/ubi/dist/ubi/server/7/7Server/$basearch/os
    enabled = 1
    gpgkey = file:///etc/pki/rpm-gpg/RPM-GPG-KEY-redhat-release
    gpgcheck = 1
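The same image check can be done from a notebook cell instead of the terminal. The sketch below parses the `KEY=value` format of `/etc/os-release` into a dictionary. The helper name is hypothetical, and the sample is a shortened copy of the output above so the snippet runs anywhere:

```python
def parse_os_release(text):
    """Parse os-release style KEY=value lines into a dict.

    Surrounding double quotes are stripped; blank lines and comments
    are ignored. This is a simplified parser, not a full shell-quoting
    implementation.
    """
    info = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        info[key] = value.strip().strip('"')
    return info


# Shortened copy of the /etc/os-release content shown in this walkthrough.
SAMPLE = '''NAME="Red Hat Enterprise Linux Server"
VERSION_ID="7.6"
ID="rhel"
REDHAT_BUGZILLA_PRODUCT_VERSION=7.6
'''

info = parse_os_release(SAMPLE)
print(info["ID"], info["VERSION_ID"])
```

Inside the notebook container, passing `open("/etc/os-release").read()` to the function instead of `SAMPLE` should report the values of the running image.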

GPU Notebook

    sh-4.2$ nvidia-smi
    Wed Feb 26 13:37:53 2020
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 440.33.01    Driver Version: 440.33.01    CUDA Version: N/A      |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |===============================+======================+======================|
    |   0  Tesla V100-PCIE...  On   | 00000000:00:05.0 Off |                  Off |
    | N/A   29C    P0    24W / 250W |      0MiB / 16160MiB |      0%      Default |
    +-------------------------------+----------------------+----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                       GPU Memory |
    |  GPU       PID   Type   Process name                             Usage      |
    |=============================================================================|
    |  No running processes found                                                 |
    +-----------------------------------------------------------------------------+
    sh-4.2$ nvidia-smi --list-gpus
    GPU 0: Tesla V100-PCIE-16GB (UUID: GPU-XXXXXXXX-XXXX-XXXX-XXXX-XXXXXXXXXX)
    sh-4.2$ whereis nvidia-smi
    nvidia-smi: /usr/bin/nvidia-smi
    sh-4.2$ lsmod | grep nvidia
    nvidia_modeset       1114112  0
    nvidia_uvm           1085440  0
    nvidia              19927040  53 nvidia_uvm,nvidia_modeset
    ipmi_msghandler       110592  2 ipmi_devintf,nvidia
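To confirm from a notebook that a GPU is visible, one option is to parse the one-line-per-GPU text printed by `nvidia-smi --list-gpus`. The sketch below is a minimal parser written against the line format shown above. The function name is hypothetical, and the sample reuses the redacted UUID from this walkthrough:

```python
import re

# Matches lines such as: GPU 0: <name> (UUID: <uuid>)
GPU_LINE = re.compile(r"^GPU (\d+): (.+) \(UUID: (.+)\)$")


def parse_gpu_list(text):
    """Return one dict per GPU found in `nvidia-smi --list-gpus` output."""
    gpus = []
    for line in text.splitlines():
        match = GPU_LINE.match(line.strip())
        if match:
            gpus.append({
                "index": int(match.group(1)),
                "name": match.group(2),
                "uuid": match.group(3),
            })
    return gpus


# Line copied from the output shown in this walkthrough.
SAMPLE = "GPU 0: Tesla V100-PCIE-16GB (UUID: GPU-XXXXXXXX-XXXX-XXXX-XXXX-XXXXXXXXXX)\n"

gpus = parse_gpu_list(SAMPLE)
print(len(gpus), gpus[0]["name"])
```

In a GPU notebook, the text could come from running the command with `subprocess.run(["nvidia-smi", "--list-gpus"], stdout=subprocess.PIPE)`. An empty list would suggest that no GPU is exposed to the container.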