Red Hat OpenStack Platform 16.1

We are testing OpenShift Container Storage (OCS) on OpenShift Container Platform (OCP) on Red Hat OpenStack Platform (OSP). The installation is described in three parts:

- Part 1: Red Hat OpenStack Platform 16.1 installation

- Part 2: OpenShift Container Platform 4.6 installation

- Part 3: OpenShift Container Storage 4.5 installation

Let’s first deploy Red Hat OpenStack Platform 16.1.

Red Hat OpenStack Platform 16.1 (RHOSP) was released Jul 29, 2020. RHOSP 16.1 is based on “OpenStack Train” and RHEL 8.2.

Most of the time, I use Ansible for this type of deployment (automated setup of the director node), but here we will go through the manual step-by-step commands from the documentation.

You can see a short summary of this OSP 16.1 installation in this 3-minute video:

Platform

We are using 7 Dell PowerEdge R740xd servers, each with dual Xeon CPUs and 192 GB of RAM.

We will apply this documentation

We can use a classic network segmentation with these IP ranges:

| Network | Subnet | Prefix length | VLAN |
|---|---|---|---|
| External | 172.16.14.0 | 24 | (0) |
| Provisioning | 172.16.16.0 | 24 | (0) |
| Internal | 172.16.11.0 | 24 | 101 |
| Storage | 172.16.12.0 | 24 | 102 |
| Tenant | 172.16.13.0 | 24 | 103 |
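The table gives prefix lengths, while the ifcfg files used later for the director VLANs expect a dotted netmask (NETMASK=255.255.255.0). Here is a minimal sketch of that conversion in POSIX shell; `prefix_to_netmask` is a hypothetical helper name, not part of any Red Hat tooling.

```shell
#!/bin/sh
# Hypothetical helper: convert a prefix length (0-32) to a dotted netmask.
prefix_to_netmask() (
    prefix=$1
    mask=""
    for i in 1 2 3 4; do
        if [ "$prefix" -ge 8 ]; then
            octet=255
            prefix=$((prefix - 8))
        else
            # 256 - 2^(8 - prefix) is the partial octet (0 when no bits remain)
            octet=$((256 - (1 << (8 - prefix))))
            prefix=0
        fi
        mask="${mask}${mask:+.}${octet}"
    done
    echo "$mask"
)

# Print each network from the table with its dotted netmask.
while read -r name subnet prefix; do
    printf '%-12s %s/%s netmask %s\n' "$name" "$subnet" "$prefix" "$(prefix_to_netmask "$prefix")"
done <<EOF
External 172.16.14.0 24
Provisioning 172.16.16.0 24
Internal 172.16.11.0 24
Storage 172.16.12.0 24
Tenant 172.16.13.0 24
EOF
```

All five networks are /24 here, so every line ends with 255.255.255.0; the helper only becomes useful if you pick other prefix lengths.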

Installing virtualized RHOSP director

Prepare a KVM host with RHEL 8.2

We use one Dell PowerEdge R740xd to host the virtualized RHOSP director (one KVM virtual machine).

We install RHEL 8.2 on this host via PXE or from an ISO image on a USB key.

Check the installed RHEL version:

[root@e26-h15-740xd ~]# cat /etc/redhat-release

Red Hat Enterprise Linux release 8.2 (Ootpa)

[root@e26-h15-740xd ~]# uname -a

Linux e26-h15-740xd.XXXXXXXX.redhat.com 4.18.0-193.el8.x86_64 #1 SMP Fri Mar 27 14:35:58 UTC 2020 x86_64 x86_64 x86_64 GNU/Linux

Add one user:

[root@e26-h15-740xd ~]# adduser egallen

[root@e26-h15-740xd ~]# passwd egallen

Changing password for user egallen.

New password:

BAD PASSWORD: The password fails the dictionary check - it does not contain enough DIFFERENT characters

Retype new password:

passwd: all authentication tokens updated successfully.

[root@e26-h15-740xd ~]# echo "egallen ALL=(root) NOPASSWD:ALL" | sudo tee -a /etc/sudoers.d/egallen

egallen ALL=(root) NOPASSWD:ALL
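The `tee` command above leaves the drop-in file with default permissions, whereas sudoers files are normally kept at mode 0440 (the stack user step later in this post applies `chmod 0440`). Here is a small sketch that writes the file and sets the mode in one step; `write_sudoers_dropin` is a hypothetical helper, and the target directory is a parameter so that it can be tried in a scratch directory first.

```shell
#!/bin/sh
# Hypothetical helper: write a passwordless-sudo drop-in for a user with mode 0440.
# $1 = user name, $2 = target directory (assumed to be /etc/sudoers.d on a real host)
write_sudoers_dropin() (
    user=$1
    dir=$2
    tmp=$(mktemp) || exit 1
    printf '%s ALL=(root) NOPASSWD:ALL\n' "$user" > "$tmp"
    # install copies the file and applies the mode in a single step
    install -m 0440 "$tmp" "$dir/$user"
    rm -f "$tmp"
)

# Try it in a scratch directory; nothing under /etc is touched.
scratch_dir=$(mktemp -d)
write_sudoers_dropin egallen "$scratch_dir"
cat "$scratch_dir/egallen"
```

On the real host this would be run as root with `/etc/sudoers.d` as the directory, and `visudo -cf /etc/sudoers.d/egallen` can then check the syntax.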

Copy the SSH key from your laptop:

egallen@laptop ~ % ssh-copy-id egallen@e26-h15-740xd

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

egallen@10.19.96.164's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'egallen@e26-h15-740xd'"

and check to make sure that only the key(s) you wanted were added.

Register the node

Register the node:

[egallen@e26-h15-740xd ~]$ sudo subscription-manager register --username myrhnaccount

[egallen@e26-h15-740xd ~]$ sudo subscription-manager attach --pool=XXXXXXXXXXXXXXXXXXXXXXXXX

Successfully attached a subscription for: Red Hat

[egallen@e26-h15-740xd ~]$ sudo subscription-manager repos --disable='*'

[egallen@e26-h15-740xd ~]$ sudo subscription-manager repos --enable=rhel-8-for-x86_64-baseos-rpms --enable=rhel-8-for-x86_64-appstream-rpms --enable=advanced-virt-for-rhel-8-x86_64-rpms

Repository 'rhel-8-for-x86_64-baseos-rpms' is enabled for this system.

Repository 'rhel-8-for-x86_64-appstream-rpms' is enabled for this system.

Repository 'advanced-virt-for-rhel-8-x86_64-rpms' is enabled for this system.

Set the virt repository module to version 8.2:

[egallen@e26-h15-740xd ~]$ sudo dnf module disable -y virt:rhel

Updating Subscription Management repositories.

Red Hat Enterprise Linux 8 for x86_64 - AppStream (RPMs) 20 kB/s | 4.5 kB 00:00

Red Hat Enterprise Linux 8 for x86_64 - BaseOS (RPMs) 18 kB/s | 4.1 kB 00:00

Advanced Virtualization for RHEL 8 x86_64 (RPMs) 900 kB/s | 757 kB 00:00

Only module name is required. Ignoring unneeded information in argument: 'virt:rhel'

Dependencies resolved.

=================================================================================================================================================================================================================

Package Architecture Version Repository Size

=================================================================================================================================================================================================================

Disabling modules:

virt

Transaction Summary

=================================================================================================================================================================================================================

Complete!

[egallen@e26-h15-740xd ~]$ sudo dnf module enable -y virt:8.2

Updating Subscription Management repositories.

Last metadata expiration check: 0:00:05 ago on Thu 22 Oct 2020 08:58:51 PM UTC.

Dependencies resolved.

=================================================================================================================================================================================================================

Package Architecture Version Repository Size

=================================================================================================================================================================================================================

Enabling module streams:

virt 8.2

Transaction Summary

=================================================================================================================================================================================================================

Complete!

Update and reboot:

[egallen@e26-h15-740xd ~]$ sudo dnf update -y

[egallen@e26-h15-740xd ~]$ sudo systemctl reboot

Prepare KVM host to host the director VM (undercloud)

Red Hat OpenStack Platform director requires that the latest version of Red Hat Enterprise Linux 8 is installed as the host operating system. This means your virtualization platform must also support the underlying Red Hat Enterprise Linux version.

We need to enable these repositories:

| Repository | Name |
|---|---|
| rhel-8-for-x86_64-baseos-eus-rpms | Red Hat Enterprise Linux 8 for x86_64 - BaseOS (RPMs) Extended Update Support (EUS) |
| rhel-8-for-x86_64-appstream-eus-rpms | Red Hat Enterprise Linux 8 for x86_64 - AppStream (RPMs) Extended Update Support (EUS) |
| rhel-8-for-x86_64-highavailability-eus-rpms | Red Hat Enterprise Linux 8 for x86_64 - High Availability (RPMs) Extended Update Support (EUS) |
| ansible-2.9-for-rhel-8-x86_64-rpms | Red Hat Ansible Engine 2.9 for RHEL 8 x86_64 (RPMs) |
| advanced-virt-for-rhel-8-x86_64-rpms | Advanced Virtualization for RHEL 8 x86_64 (RPMs) |
| openstack-16.1-for-rhel-8-x86_64-rpms | Red Hat OpenStack Platform 16.1 for RHEL 8 (RPMs) |
| fast-datapath-for-rhel-8-x86_64-rpms | Red Hat Fast Datapath for RHEL 8 (RPMs) |
| rhceph-4-tools-for-rhel-8-x86_64-rpms | Red Hat Ceph Storage Tools 4 for RHEL 8 x86_64 (RPMs) |

Prepare the director VM

[root@e26-h15-740xd ~]# cd /var/lib/libvirt/images/

[root@e26-h15-740xd images]# wget "https://access.cdn.redhat.com/content/origin/files/sha256/XXXX/rhel-8.2-x86_64-kvm.qcow2"

Prepare the qemu image:

[egallen@e26-h15-740xd ~]$ sudo qemu-img create -f qcow2 -o preallocation=metadata /var/lib/libvirt/images/e26-director.qcow2 200G;

Formatting '/var/lib/libvirt/images/e26-director.qcow2', fmt=qcow2 size=214748364800 cluster_size=65536 preallocation=metadata lazy_refcounts=off refcount_bits=16
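The `size=` value in this output is simply 200 GiB expressed in bytes, since `qemu-img` reads the `G` suffix as a power of 1024. A quick arithmetic check in the shell:

```shell
#!/bin/sh
# 200G for qemu-img means 200 GiB: 200 * 1024 * 1024 * 1024 bytes.
size_gib=200
size_bytes=$((size_gib * 1024 * 1024 * 1024))
echo "$size_bytes"   # prints 214748364800, the size= value reported above
```

Running `qemu-img info` on the new file should report the same virtual size.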

Warning: the current RHEL 8.2 KVM cloud image (QCOW2) has more than one partition, so we have to expand /dev/sda3 instead of /dev/sda1:

[egallen@e26-h15-740xd ~]$ virt-filesystems -a /var/lib/libvirt/images/rhel-8.2-x86_64-kvm.qcow2

/dev/sda2

/dev/sda3

Copy the RHEL 8.2 QCOW2 image into the new disk and expand /dev/sda3:

[egallen@e26-h15-740xd ~]$ sudo virt-resize --expand /dev/sda3 /var/lib/libvirt/images/rhel-8.2-x86_64-kvm.qcow2 /var/lib/libvirt/images/e26-director.qcow2

[ 0.0] Examining /var/lib/libvirt/images/rhel-8.2-x86_64-kvm.qcow2

**********

Summary of changes:

/dev/sda1: This partition will be left alone.

/dev/sda2: This partition will be left alone.

/dev/sda3: This partition will be resized from 9.9G to 199.9G. The

filesystem xfs on /dev/sda3 will be expanded using the ‘xfs_growfs’

method.

**********

[ 2.5] Setting up initial partition table on /var/lib/libvirt/images/e26-director.qcow2

[ 13.2] Copying /dev/sda1

[ 13.3] Copying /dev/sda2

[ 13.3] Copying /dev/sda3

100% ⟦▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒▒⟧ 00:00

[ 24.4] Expanding /dev/sda3 using the ‘xfs_growfs’ method

Resize operation completed with no errors. Before deleting the old disk,

carefully check that the resized disk boots and works correctly.

Add a root password and remove cloud-init:

[egallen@e26-h15-740xd ~]$ sudo virt-customize -a /var/lib/libvirt/images/e26-director.qcow2 --uninstall cloud-init --root-password password:XXXXXX

[ 0.0] Examining the guest ...

[ 4.0] Setting a random seed

[ 4.0] Uninstalling packages: cloud-init

[ 5.3] Setting passwords

[ 6.6] Finishing off

Prepare the libvirt VM:

[egallen@e26-h15-740xd ~]$ sudo virt-install --ram 65536 --vcpus 10 --os-variant rhel8.0 \

--disk path=/var/lib/libvirt/images/e26-director.qcow2,device=disk,bus=virtio,format=qcow2 \

--graphics vnc,listen=0.0.0.0 --noautoconsole \

--network type=direct,source=eno1,source_mode=bridge,model=virtio \

--network type=direct,source=ens7f0,source_mode=bridge,model=virtio \

--network default \

--name e26-director --dry-run \

--print-xml > /tmp/e26-director.xml;

[egallen@e26-h15-740xd ~]$ sudo virsh define --file /tmp/e26-director.xml

Domain e26-director defined from /tmp/e26-director.xml

Start the e26-director Virtual Machine:

[egallen@e26-h15-740xd ~]$ sudo virsh start e26-director

Domain e26-director started

Check which IP is provided by the libvirt DHCP:

[egallen@e26-h15-740xd ~]$ sudo journalctl | grep DHCPOFFER

Oct 22 21:22:33 e26-h15-740xd.XXXXXXXX.redhat.com dnsmasq-dhcp[3080]: DHCPOFFER(virbr0) 192.168.122.2 52:54:00:25:42:d3

Oct 22 21:23:29 e26-h15-740xd.XXXXXXXX.redhat.com dnsmasq-dhcp[3080]: DHCPOFFER(virbr0) 192.168.122.2 52:54:00:25:42:d3

Oct 22 21:32:23 e26-h15-740xd.XXXXXXXX.redhat.com dnsmasq-dhcp[3080]: DHCPOFFER(virbr0) 192.168.122.2 52:54:00:25:42:d3
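If the journal contains offers for several guests, it helps to filter on the MAC address of the VM. Here is a sketch, assuming the dnsmasq line format shown above (IP address, then MAC address, right after the `DHCPOFFER(...)` field); `last_offer_for_mac` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: print the most recent IP offered to a given MAC address.
# Reads dnsmasq journal lines on stdin; $1 = MAC address.
last_offer_for_mac() {
    awk -v mac="$1" '
        {
            # Locate the DHCPOFFER(...) field; the IP and MAC are the next two fields.
            for (i = 1; i <= NF - 2; i++)
                if ($i ~ /^DHCPOFFER\(/ && $(i + 2) == mac)
                    ip = $(i + 1)
        }
        END { if (ip != "") print ip }
    '
}

# Example with two of the journal lines shown above.
last_offer_for_mac 52:54:00:25:42:d3 <<EOF
Oct 22 21:22:33 e26-h15-740xd.XXXXXXXX.redhat.com dnsmasq-dhcp[3080]: DHCPOFFER(virbr0) 192.168.122.2 52:54:00:25:42:d3
Oct 22 21:23:29 e26-h15-740xd.XXXXXXXX.redhat.com dnsmasq-dhcp[3080]: DHCPOFFER(virbr0) 192.168.122.2 52:54:00:25:42:d3
EOF
```

On the KVM host this would be used as `sudo journalctl | last_offer_for_mac 52:54:00:25:42:d3`. `sudo virsh net-dhcp-leases default` is another way to list the leases of the default libvirt network.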

Connect into the VM:

[egallen@e26-h15-740xd ~]$ ssh root@192.168.122.2

The authenticity of host '192.168.122.2 (192.168.122.2)' can't be established.

ECDSA key fingerprint is SHA256:acdYPDOAoWJ+QoREO1jX0vVq9I8Qlo8RfX5x/GlO+A0.

Are you sure you want to continue connecting (yes/no/[fingerprint])? yes

Warning: Permanently added '192.168.122.2' (ECDSA) to the list of known hosts.

root@192.168.122.2's password:

Activate the web console with: systemctl enable --now cockpit.socket

This system is not registered to Red Hat Insights. See https://cloud.redhat.com/

To register this system, run: insights-client --register

[root@localhost ~]#

Define the hostname:

[root@localhost ~]# hostnamectl set-hostname e26-director.lan.redhat.com

[root@localhost ~]# hostname

e26-director.lan.redhat.com

[root@localhost ~]# hostname -f

e26-director.lan.redhat.com

Create the new stack user:

[root@e26-director ~]# adduser stack

[root@e26-director ~]# passwd stack

[root@e26-director ~]# echo "stack ALL=(root) NOPASSWD:ALL" | tee -a /etc/sudoers.d/stack

[root@e26-director ~]# chmod 0440 /etc/sudoers.d/stack

Authorize the ssh public key:

[egallen@e26-h15-740xd ~]$ ssh-copy-id stack@192.168.122.2

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

stack@192.168.122.2's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'stack@192.168.122.2'"

and check to make sure that only the key(s) you wanted were added.

Director registration

Register the VM:

[stack@e26-director ~]$ sudo subscription-manager register

Registering to: subscription.rhsm.redhat.com:443/subscription

Username: myrhnaccount

Password:

The system has been registered with ID: XXXXX-XXXX-XXXX-XXXX-XXXXXXXX

The registered system name is: e26-director.lan.redhat.com

Attach the pool:

[stack@e26-director ~]$ sudo subscription-manager attach --pool=XXXXXXXXXXXXXXXXXXXXXXXXXXXX

Successfully attached a subscription for: Red Hat SKU

RHOSP 16.1 uses the RHEL 8.2 Extended Update Support (EUS) release.

IMPORTANT: for RHEL 8.2 EUS, pin the release to 8.2:

[stack@e26-director ~]$ sudo subscription-manager release --set=8.2

Release set to: 8.2

[stack@e26-director ~]$ sudo subscription-manager release

Release: 8.2

Enable the RHOSP repositories:

[stack@e26-director ~]$ sudo subscription-manager repos --disable='*'

[stack@e26-director ~]$ sudo dnf clean all

[stack@e26-director ~]$ sudo subscription-manager repos --enable=rhel-8-for-x86_64-baseos-eus-rpms --enable=rhel-8-for-x86_64-appstream-eus-rpms --enable=rhel-8-for-x86_64-highavailability-eus-rpms --enable=ansible-2.9-for-rhel-8-x86_64-rpms --enable=openstack-16.1-for-rhel-8-x86_64-rpms --enable=fast-datapath-for-rhel-8-x86_64-rpms --enable=advanced-virt-for-rhel-8-x86_64-rpms --enable=rhceph-4-tools-for-rhel-8-x86_64-rpms

Repository 'rhel-8-for-x86_64-baseos-eus-rpms' is enabled for this system.

Repository 'rhel-8-for-x86_64-appstream-eus-rpms' is enabled for this system.

Repository 'rhel-8-for-x86_64-highavailability-eus-rpms' is enabled for this system.

Repository 'ansible-2.9-for-rhel-8-x86_64-rpms' is enabled for this system.

Repository 'openstack-16.1-for-rhel-8-x86_64-rpms' is enabled for this system.

Repository 'fast-datapath-for-rhel-8-x86_64-rpms' is enabled for this system.

Repository 'advanced-virt-for-rhel-8-x86_64-rpms' is enabled for this system.

Repository 'rhceph-4-tools-for-rhel-8-x86_64-rpms' is enabled for this system.

Set the container-tools repository module to version 2.0:

[stack@e26-director ~]$ sudo dnf module disable -y container-tools:rhel8

[stack@e26-director ~]$ sudo dnf module enable -y container-tools:2.0

Set the virt repository module to version 8.2:

[stack@e26-director ~]$ sudo dnf module disable -y virt:rhel

[stack@e26-director ~]$ sudo dnf module enable -y virt:8.2

Check enabled repositories:

[stack@e26-director ~]$ sudo subscription-manager repos --list-enabled

+----------------------------------------------------------+

Available Repositories in /etc/yum.repos.d/redhat.repo

+----------------------------------------------------------+

Repo ID: openstack-16.1-for-rhel-8-x86_64-rpms

Repo Name: Red Hat OpenStack Platform 16.1 for RHEL 8 x86_64 (RPMs)

Repo URL: https://cdn.redhat.com/content/dist/layered/rhel8/x86_64/openstack/16.1/os

Enabled: 1

Repo ID: ansible-2.9-for-rhel-8-x86_64-rpms

Repo Name: Red Hat Ansible Engine 2.9 for RHEL 8 x86_64 (RPMs)

Repo URL: https://cdn.redhat.com/content/dist/layered/rhel8/x86_64/ansible/2.9/os

Enabled: 1

Repo ID: rhel-8-for-x86_64-highavailability-eus-rpms

Repo Name: Red Hat Enterprise Linux 8 for x86_64 - High Availability - Extended Update Support (RPMs)

Repo URL: https://cdn.redhat.com/content/eus/rhel8/8.2/x86_64/highavailability/os

Enabled: 1

Repo ID: rhel-8-for-x86_64-baseos-eus-rpms

Repo Name: Red Hat Enterprise Linux 8 for x86_64 - BaseOS - Extended Update Support (RPMs)

Repo URL: https://cdn.redhat.com/content/eus/rhel8/8.2/x86_64/baseos/os

Enabled: 1

Repo ID: rhceph-4-tools-for-rhel-8-x86_64-rpms

Repo Name: Red Hat Ceph Storage Tools 4 for RHEL 8 x86_64 (RPMs)

Repo URL: https://cdn.redhat.com/content/dist/layered/rhel8/x86_64/rhceph-tools/4/os

Enabled: 1

Repo ID: rhel-8-for-x86_64-appstream-eus-rpms

Repo Name: Red Hat Enterprise Linux 8 for x86_64 - AppStream - Extended Update Support (RPMs)

Repo URL: https://cdn.redhat.com/content/eus/rhel8/8.2/x86_64/appstream/os

Enabled: 1

Repo ID: advanced-virt-for-rhel-8-x86_64-rpms

Repo Name: Advanced Virtualization for RHEL 8 x86_64 (RPMs)

Repo URL: https://cdn.redhat.com/content/dist/layered/rhel8/x86_64/advanced-virt/os

Enabled: 1

Repo ID: fast-datapath-for-rhel-8-x86_64-rpms

Repo Name: Fast Datapath for RHEL 8 x86_64 (RPMs)

Repo URL: https://cdn.redhat.com/content/dist/layered/rhel8/x86_64/fast-datapath/os

Enabled: 1
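With eight repositories to enable, it is easy to miss one. Here is a sketch that compares the `Repo ID:` lines of this listing against a required list and prints whatever is missing; `missing_repos` is a hypothetical helper, and the sample input is shortened for illustration.

```shell
#!/bin/sh
# Hypothetical helper: report required repositories missing from the enabled list.
# Reads "subscription-manager repos --list-enabled" output on stdin;
# the arguments are the required repo IDs. Prints one missing ID per line.
missing_repos() (
    enabled=$(sed -n 's/^Repo ID:[[:space:]]*\([^[:space:]]*\).*/\1/p')
    for repo in "$@"; do
        echo "$enabled" | grep -qxF "$repo" || echo "$repo"
    done
)

# Example with a shortened listing: only the fast-datapath repository is reported.
missing_repos \
    openstack-16.1-for-rhel-8-x86_64-rpms \
    ansible-2.9-for-rhel-8-x86_64-rpms \
    fast-datapath-for-rhel-8-x86_64-rpms <<EOF
Repo ID: openstack-16.1-for-rhel-8-x86_64-rpms
Repo Name: Red Hat OpenStack Platform 16.1 for RHEL 8 x86_64 (RPMs)
Enabled: 1
Repo ID: ansible-2.9-for-rhel-8-x86_64-rpms
Repo Name: Red Hat Ansible Engine 2.9 for RHEL 8 x86_64 (RPMs)
Enabled: 1
EOF
```

On the director this would be fed from `sudo subscription-manager repos --list-enabled` with the eight repository IDs as arguments; an empty result means nothing is missing.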

Upgrade RHEL and reboot:

[stack@e26-director ~]$ sudo yum upgrade -y

[stack@e26-director ~]$ sudo systemctl reboot

Director network preparation

Set up the network configuration with the proper VLANs:

[stack@e26-director ~]$ cat /etc/sysconfig/network-scripts/ifcfg-eth1

DEVICE=eth1

NAME=eth1

ONBOOT=yes

HOTPLUG=no

NM_CONTROLLED=yes

PEERDNS=no

[stack@e26-director ~]$ cat /etc/sysconfig/network-scripts/ifcfg-eth1.101

DEVICE=eth1.101

NAME=eth1.101

VLAN=yes

ONBOOT=yes

HOTPLUG=no

NM_CONTROLLED=yes

PEERDNS=no

BOOTPROTO=static

IPADDR=172.16.11.10

NETMASK=255.255.255.0

[stack@e26-director ~]$ cat /etc/sysconfig/network-scripts/ifcfg-eth1.102

DEVICE=eth1.102

NAME=eth1.102

VLAN=yes

ONBOOT=yes

HOTPLUG=no

NM_CONTROLLED=yes

PEERDNS=no

BOOTPROTO=static

IPADDR=172.16.12.10

NETMASK=255.255.255.0

[stack@e26-director ~]$ cat /etc/sysconfig/network-scripts/ifcfg-eth1.103

DEVICE=eth1.103

NAME=eth1.103

VLAN=yes

ONBOOT=yes

HOTPLUG=no

NM_CONTROLLED=yes

PEERDNS=no

BOOTPROTO=static

IPADDR=172.16.13.10

NETMASK=255.255.255.0

[stack@e26-director ~]$ cat /etc/sysconfig/network-scripts/ifcfg-eth1.104

DEVICE=eth1.104

NAME=eth1.104

VLAN=yes

ONBOOT=yes

HOTPLUG=no

NM_CONTROLLED=yes

PEERDNS=no

BOOTPROTO=static

IPADDR=172.16.14.10

NETMASK=255.255.255.0

[stack@e26-director ~]$ cat /etc/sysconfig/network-scripts/ifcfg-eth2

DEVICE="eth2"

BOOTPROTO="dhcp"

BOOTPROTOv6="dhcp"

ONBOOT="yes"

TYPE="Ethernet"

USERCTL="yes"

PEERDNS="yes"

IPV6INIT="yes"

PERSISTENT_DHCLIENT="1"
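The four VLAN files differ only in the VLAN ID and the IP address, so they can be generated from a template. Here is a minimal sketch; `vlan_ifcfg` is a hypothetical helper that only prints the file body and writes nothing to disk.

```shell
#!/bin/sh
# Hypothetical helper: print an ifcfg file body for a static VLAN sub-interface.
# $1 = parent device, $2 = VLAN ID, $3 = IPv4 address, $4 = netmask
vlan_ifcfg() {
    cat <<EOF
DEVICE=$1.$2
NAME=$1.$2
VLAN=yes
ONBOOT=yes
HOTPLUG=no
NM_CONTROLLED=yes
PEERDNS=no
BOOTPROTO=static
IPADDR=$3
NETMASK=$4
EOF
}

# Print the four VLAN definitions used above.
for entry in 101:172.16.11.10 102:172.16.12.10 103:172.16.13.10 104:172.16.14.10; do
    vlan=${entry%%:*}
    ip=${entry#*:}
    echo "# ifcfg-eth1.$vlan"
    vlan_ifcfg eth1 "$vlan" "$ip" 255.255.255.0
done
```

To create the real files, the output of each call would be redirected to `/etc/sysconfig/network-scripts/ifcfg-eth1.<vlan>` with root privileges.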

Check network configuration:

[stack@e26-director ~]$ ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 52:54:00:68:bc:cb brd ff:ff:ff:ff:ff:ff

inet6 fe80::16eb:2b52:9663:558b/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 52:54:00:e3:f1:95 brd ff:ff:ff:ff:ff:ff

inet6 fe80::5054:ff:fee3:f195/64 scope link

valid_lft forever preferred_lft forever

4: eth2: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 52:54:00:25:42:d3 brd ff:ff:ff:ff:ff:ff

inet 192.168.122.2/24 brd 192.168.122.255 scope global dynamic noprefixroute eth2

valid_lft 3577sec preferred_lft 3577sec

inet6 fe80::5054:ff:fe25:42d3/64 scope link noprefixroute

valid_lft forever preferred_lft forever

5: eth1.101@eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 52:54:00:e3:f1:95 brd ff:ff:ff:ff:ff:ff

inet 172.16.11.10/24 brd 172.16.11.255 scope global noprefixroute eth1.101

valid_lft forever preferred_lft forever

inet6 fe80::5054:ff:fee3:f195/64 scope link

valid_lft forever preferred_lft forever

6: eth1.102@eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 52:54:00:e3:f1:95 brd ff:ff:ff:ff:ff:ff

inet 172.16.12.10/24 brd 172.16.12.255 scope global noprefixroute eth1.102

valid_lft forever preferred_lft forever

inet6 fe80::5054:ff:fee3:f195/64 scope link

valid_lft forever preferred_lft forever

7: eth1.104@eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 52:54:00:e3:f1:95 brd ff:ff:ff:ff:ff:ff

inet 172.16.14.10/24 brd 172.16.14.255 scope global noprefixroute eth1.104

valid_lft forever preferred_lft forever

inet6 fe80::5054:ff:fee3:f195/64 scope link

valid_lft forever preferred_lft forever

8: eth1.103@eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 52:54:00:e3:f1:95 brd ff:ff:ff:ff:ff:ff

inet 172.16.13.10/24 brd 172.16.13.255 scope global noprefixroute eth1.103

valid_lft forever preferred_lft forever

inet6 fe80::5054:ff:fee3:f195/64 scope link

valid_lft forever preferred_lft forever

[stack@e26-director ~]$ nmcli connection show

NAME UUID TYPE DEVICE

Wired connection 1 4ce3a9b3-dcca-3df3-ac4e-9629fa4d6eb3 ethernet eth0

System eth2 3a73717e-65ab-93e8-b518-24f5af32dc0d ethernet eth2

eth1.101 8a1717c8-d07f-ebe3-ef54-705f6dbecc08 vlan eth1.101

eth1.102 370fca10-b2fd-7ec5-22ba-e6b5f0efc8ef vlan eth1.102

eth1.103 9aa62f32-bb46-4a15-8e72-da71d51b971e vlan eth1.103

eth1.104 51885900-37a0-77ec-8d71-4507157585c5 vlan eth1.104

eth1 9c92fad9-6ecb-3e6c-eb4d-8a47c6f50c04 ethernet eth1

[stack@e26-director ~]$ ls /sys/class/net

eth0 eth1 eth1.101 eth1.102 eth1.103 eth1.104 eth2 lo

[stack@e26-director ~]$ cat /proc/net/dev

Inter-| Receive | Transmit

face |bytes packets errs drop fifo frame compressed multicast|bytes packets errs drop fifo colls carrier compressed

eth1.104: 28606 337 0 0 0 0 0 0 1964 26 0 0 0 0 0 0

eth1.101: 4094 89 0 0 0 0 0 0 1832 24 0 0 0 0 0 0

eth2: 8327 55 0 0 0 0 0 0 16101 93 0 0 0 415 0 0

eth0: 0 0 0 0 0 0 0 0 5040 34 0 0 0 0 0 0

eth1.103: 0 0 0 0 0 0 0 0 1992 26 0 0 0 0 0 0

lo: 416 6 0 0 0 0 0 0 416 6 0 0 0 0 0 0

eth1.102: 276 6 0 0 0 0 0 0 1832 24 0 0 0 0 0 0

eth1: 42480 459 0 0 0 0 0 0 9148 113 0 0 0 0 0 0

Install director packages

Install the director packages:

[stack@e26-director ~]$ sudo dnf install -y python3-tripleoclient

Updating Subscription Management repositories.

Unable to read consumer identity

This system is not registered to Red Hat Subscription Management. You can use subscription-manager to register.

Last metadata expiration check: 0:08:56 ago on Thu 22 Oct 2020 03:20:16 PM EDT.

Dependencies resolved.

=================================================================================================================================================================================================================

Package Architecture Version Repository Size

=================================================================================================================================================================================================================

Installing:

python3-tripleoclient noarch 12.3.2-1.20200914164928.el8ost rhelosp-16.1 537 k

Installing dependencies:

ansible noarch 2.9.14-1.el8ae rhosp-ansible-2.9 17 M

ansible-config_template noarch 1.1.2-1.20200818183400.ea07ed0.el8ost rhelosp-16.1 29 k

...

Prepare container images for director

Prepare the container images:

[stack@e26-director ~]$ openstack tripleo container image prepare default --local-push-destination --output-env-file containers-prepare-parameter.yaml

# Generated with the following on 2020-10-22T15:58:12.528939

#

# openstack tripleo container image prepare default --local-push-destination --output-env-file containers-prepare-parameter.yaml

#

parameter_defaults:
  ContainerImagePrepare:
  - push_destination: true
    set:
      ceph_alertmanager_image: ose-prometheus-alertmanager
      ceph_alertmanager_namespace: registry.redhat.io/openshift4
      ceph_alertmanager_tag: 4.1
      ceph_grafana_image: rhceph-4-dashboard-rhel8
      ceph_grafana_namespace: registry.redhat.io/rhceph
      ceph_grafana_tag: 4
      ceph_image: rhceph-4-rhel8
      ceph_namespace: registry.redhat.io/rhceph
      ceph_node_exporter_image: ose-prometheus-node-exporter
      ceph_node_exporter_namespace: registry.redhat.io/openshift4
      ceph_node_exporter_tag: v4.1
      ceph_prometheus_image: ose-prometheus
      ceph_prometheus_namespace: registry.redhat.io/openshift4
      ceph_prometheus_tag: 4.1
      ceph_tag: latest
      name_prefix: openstack-
      name_suffix: ''
      namespace: registry.redhat.io/rhosp-rhel8
      neutron_driver: ovn
      rhel_containers: false
      tag: '16.1'
    tag_from_label: '{version}-{release}'
  ContainerImageRegistryCredentials:
    registry.redhat.io:
      myrhnusername: XXXXXXXXXX

Add your credentials or token at the end of the file, under parameter_defaults:

  ContainerImageRegistryCredentials:
    registry.redhat.io:
      myrhnusername: XXXXXXXXXX
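If the file is regenerated often, the credentials can be appended by a small script instead of by hand. Here is a sketch that adds the block only when it is not already present; `append_registry_credentials` is a hypothetical helper, and it assumes the two-space indentation under `parameter_defaults` that the generated file uses.

```shell
#!/bin/sh
# Hypothetical helper: append registry credentials to a containers-prepare file
# unless a ContainerImageRegistryCredentials block is already there.
# $1 = file, $2 = registry user name, $3 = password or token
append_registry_credentials() (
    file=$1
    user=$2
    token=$3
    if grep -q '^  ContainerImageRegistryCredentials:' "$file"; then
        echo "credentials already present in $file" >&2
        exit 0
    fi
    # Two-space indentation is assumed so the block lands under parameter_defaults.
    cat >> "$file" <<EOF
  ContainerImageRegistryCredentials:
    registry.redhat.io:
      $user: '$token'
EOF
)

# Try it on a scratch file rather than on the real one.
scratch_file=$(mktemp)
printf 'parameter_defaults:\n  ContainerImagePrepare:\n  - push_destination: true\n' > "$scratch_file"
append_registry_credentials "$scratch_file" myrhnusername XXXXXXXXXX
append_registry_credentials "$scratch_file" myrhnusername XXXXXXXXXX   # second call changes nothing
cat "$scratch_file"
```

The token is wrapped in single quotes for YAML, so a token that itself contains a single quote would need extra escaping. Since the file holds a secret, restricting its permissions (for example `chmod 600`) is a sensible precaution.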

Install director services

You can review a director configuration here: /usr/share/python-tripleoclient/undercloud.conf.sample

Create your undercloud.conf file in the stack user's home directory:

[DEFAULT]

undercloud_hostname = e26-director.lan.redhat.com

local_ip = 172.16.16.10/24

undercloud_public_host = 172.16.16.11

undercloud_admin_host = 172.16.16.12

local_interface = eth0

undercloud_nameservers = 10.19.96.1

undercloud_ntp_servers = clock.redhat.com

overcloud_domain_name = lan.redhat.com

container_images_file=/home/stack/containers-prepare-parameter.yaml

[ctlplane-subnet]

cidr = 172.16.16.0/24

dhcp_start = 172.16.16.201

dhcp_end = 172.16.16.220

inspection_iprange = 172.16.16.221,172.16.16.230

gateway = 172.16.16.10

masquerade_network = True

subnets = ctlplane-subnet

local_subnet = ctlplane-subnet

masquerade = true
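In this file the provisioning DHCP pool (`dhcp_start` to `dhcp_end`) and the `inspection_iprange` are meant to be separate ranges, and the director addresses (.10, .11, .12) sit outside both. Here is a sketch of an overlap check done before running the installation; `ip_to_int` and `ranges_disjoint` are hypothetical helpers.

```shell
#!/bin/sh
# Hypothetical helper: convert a dotted IPv4 address to an integer for comparisons.
ip_to_int() (
    IFS=.
    # Split the address on dots into the positional parameters $1..$4.
    set -- $1
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Hypothetical helper: succeed when [start1,end1] and [start2,end2] do not overlap.
# $1 = start1, $2 = end1, $3 = start2, $4 = end2
ranges_disjoint() {
    [ "$(ip_to_int "$2")" -lt "$(ip_to_int "$3")" ] ||
    [ "$(ip_to_int "$4")" -lt "$(ip_to_int "$1")" ]
}

# Values from the [ctlplane-subnet] section above.
if ranges_disjoint 172.16.16.201 172.16.16.220 172.16.16.221 172.16.16.230; then
    echo "dhcp and inspection ranges do not overlap"
else
    echo "dhcp and inspection ranges overlap"
fi
```

The same comparison can be reused to confirm that `local_ip`, `undercloud_public_host` and `undercloud_admin_host` fall outside both pools.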

Before running the director installation, no containers are running:

[stack@e26-director ~]$ sudo podman ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

Add the undercloud host name to /etc/hosts:

[stack@e26-director ~]$ echo "172.16.16.10 e26-director.lan.redhat.com e26-director" | sudo tee -a /etc/hosts

Check the base and full hostname of the undercloud:

[stack@e26-director ~]$ hostname

e26-director.lan.redhat.com

[stack@e26-director ~]$ hostname -f

e26-director.lan.redhat.com

Install the director:

[stack@e26-director ~]$ time openstack undercloud install

Using /tmp/undercloud-disk-space.yaml3npnqp0sansible.cfg as config file

[WARNING]: log file at /usr/share/ansible/validation-playbooks/ansible.log is not writeable and we cannot create it, aborting

Success! The validation passed for all hosts:

...

Install artifact is located at /home/stack/undercloud-install-20201030114358.tar.bzip2

########################################################

Deployment successful!

########################################################

Writing the stack virtual update mark file /var/lib/tripleo-heat-installer/update_mark_undercloud

##########################################################

The Undercloud has been successfully installed.

Useful files:

Password file is at /home/stack/undercloud-passwords.conf

The stackrc file is at ~/stackrc

Use these files to interact with OpenStack services, and

ensure they are secured.

##########################################################

real 22m36.243s

user 10m4.918s

sys 2m12.439s

List running director services:

[stack@e26-director ~]$ source stackrc

(undercloud) [stack@e26-director ~]$ openstack service list

+----------------------------------+------------------+-------------------------+

| ID | Name | Type |

+----------------------------------+------------------+-------------------------+

| 102b8909f551418392b9b4063cb1e385 | glance | image |

| 3a5c3570f1bd473d8dfe8e723e56e80c | neutron | network |

| 45855c71f14c46e68bf2730e4ef57d72 | heat | orchestration |

| 4a79d093a63d4f228bdca0ae374fc659 | swift | object-store |

| 6ac387421d5e41a1a60aab853d5b2de9 | mistral | workflowv2 |

| 78614ac7070e4c17a6926248ce91c4fe | placement | placement |

| 8002300c24344b02be2f63191d0e628a | ironic-inspector | baremetal-introspection |

| 85e78de5012247e2b7856a32c8f8a15f | zaqar-websocket | messaging-websocket |

| 9d602eadc6b94d85adbc2ec38a5d4758 | keystone | identity |

| a62ee3526e55457190f1bbcf7b8b0e29 | ironic | baremetal |

| c8dc75c8ee6e43fabdbb3b441a03b3c6 | zaqar | messaging |

| d3a4a9511fad4b21b4fcc727af892899 | nova | compute |

+----------------------------------+------------------+-------------------------+

Check running containers:

(undercloud) [stack@e26-director ~]$ sudo podman ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

3b31bbafa93f e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-neutron-dhcp-agent:16.1 /usr/sbin/dnsmasq... 9 minutes ago Up 9 minutes ago neutron-dnsmasq-qdhcp-63ca867b-7662-4ca7-9207-ea1a0e5ca9de

53fb5a35dcf5 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-nova-compute-ironic:16.1 kolla_start 10 minutes ago Up 10 minutes ago nova_compute

59abc2b8f47a e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-ironic-inspector:16.1 kolla_start 10 minutes ago Up 10 minutes ago ironic_inspector_dnsmasq

415c202ec266 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-ironic-inspector:16.1 kolla_start 10 minutes ago Up 10 minutes ago ironic_inspector

fcc8184795bf e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-ironic-pxe:16.1 kolla_start 11 minutes ago Up 11 minutes ago ironic_pxe_http

7219993deac8 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-ironic-pxe:16.1 /bin/bash -c BIND... 11 minutes ago Up 11 minutes ago ironic_pxe_tftp

3d1451ec8547 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-ironic-neutron-agent:16.1 kolla_start 11 minutes ago Up 11 minutes ago ironic_neutron_agent

bec2a12a88dd e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-ironic-conductor:16.1 kolla_start 11 minutes ago Up 11 minutes ago ironic_conductor

8819efea95bc e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-mistral-api:16.1 kolla_start 11 minutes ago Up 11 minutes ago mistral_api

364529e1317e e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-neutron-openvswitch-agent:16.1 kolla_start 11 minutes ago Up 11 minutes ago neutron_ovs_agent

a7356488fa61 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-neutron-l3-agent:16.1 kolla_start 11 minutes ago Up 11 minutes ago neutron_l3_agent

f92f60186ccb e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-neutron-dhcp-agent:16.1 kolla_start 11 minutes ago Up 11 minutes ago neutron_dhcp

3fafb46a5a55 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-ironic-api:16.1 kolla_start 11 minutes ago Up 11 minutes ago ironic_api

771f8468f664 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-nova-api:16.1 kolla_start 11 minutes ago Up 11 minutes ago nova_api_cron

147dcfbc25b5 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-swift-proxy-server:16.1 kolla_start 11 minutes ago Up 11 minutes ago swift_proxy

e81d76cf0ca9 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-nova-api:16.1 kolla_start 11 minutes ago Up 11 minutes ago nova_api

43644f178847 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-glance-api:16.1 kolla_start 11 minutes ago Up 11 minutes ago glance_api

7b6e2f65de27 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-placement-api:16.1 kolla_start 11 minutes ago Up 11 minutes ago placement_api

4da9e9b6af3f e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-zaqar-wsgi:16.1 kolla_start 11 minutes ago Up 11 minutes ago zaqar_websocket

923282d49052 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-zaqar-wsgi:16.1 kolla_start 11 minutes ago Up 11 minutes ago zaqar

c9499b59ac14 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-swift-object:16.1 kolla_start 11 minutes ago Up 11 minutes ago swift_rsync

31a6891071ee e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-swift-object:16.1 kolla_start 11 minutes ago Up 11 minutes ago swift_object_updater

cc5c3834939a e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-swift-object:16.1 kolla_start 11 minutes ago Up 11 minutes ago swift_object_server

eeb7e6002f73 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-swift-proxy-server:16.1 kolla_start 11 minutes ago Up 11 minutes ago swift_object_expirer

4e1b4b6e730c e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-swift-container:16.1 kolla_start 11 minutes ago Up 11 minutes ago swift_container_updater

e59e0891996d e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-swift-container:16.1 kolla_start 11 minutes ago Up 11 minutes ago swift_container_server

cae76618f07d e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-swift-account:16.1 kolla_start 11 minutes ago Up 11 minutes ago swift_account_server

054061e0db3b e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-swift-account:16.1 kolla_start 11 minutes ago Up 11 minutes ago swift_account_reaper

fc972835b141 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-nova-scheduler:16.1 kolla_start 11 minutes ago Up 11 minutes ago nova_scheduler

d8781bdb19af e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-nova-conductor:16.1 kolla_start 11 minutes ago Up 11 minutes ago nova_conductor

101499ca726f e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-neutron-server:16.1 kolla_start 11 minutes ago Up 11 minutes ago neutron_api

2160d9d5b867 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-mistral-executor:16.1 kolla_start 11 minutes ago Up 11 minutes ago mistral_executor

1d6e0e4a751d e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-mistral-event-engine:16.1 kolla_start 12 minutes ago Up 11 minutes ago mistral_event_engine

f324f7759950 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-mistral-engine:16.1 kolla_start 12 minutes ago Up 12 minutes ago mistral_engine

75e0172e708a e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-cron:16.1 kolla_start 12 minutes ago Up 12 minutes ago logrotate_crond

d59c7ccc1ad4 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-heat-engine:16.1 kolla_start 12 minutes ago Up 12 minutes ago heat_engine

25e786b7d8e4 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-heat-api:16.1 kolla_start 12 minutes ago Up 12 minutes ago heat_api_cron

110cf9e9520d e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-heat-api:16.1 kolla_start 12 minutes ago Up 12 minutes ago heat_api

3a87e6a066c4 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-keystone:16.1 kolla_start 15 minutes ago Up 15 minutes ago keystone

25670d7079d2 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-iscsid:16.1 kolla_start 15 minutes ago Up 15 minutes ago iscsid

40354ba3d9a6 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-mariadb:16.1 kolla_start 16 minutes ago Up 16 minutes ago mysql

9738c007ebfa e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-rabbitmq:16.1 kolla_start 18 minutes ago Up 18 minutes ago rabbitmq

e9439b89457c e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-haproxy:16.1 kolla_start 18 minutes ago Up 18 minutes ago haproxy

93b71631efd8 e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-memcached:16.1 kolla_start 18 minutes ago Up 18 minutes ago memcached

14dc5f11259c e26-director.ctlplane.lan.redhat.com:8787/rhosp-rhel8/openstack-keepalived:16.1 /usr/local/bin/ko... 18 minutes ago Up 18 minutes ago keepalived

Check listening ports:

(undercloud) [stack@e26-director ~]$ sudo netstat -tulpn

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name

tcp 0 0 172.16.16.10:8004 0.0.0.0:* LISTEN 37214/httpd

tcp 0 0 172.16.16.12:8004 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.10:36357 0.0.0.0:* LISTEN 41679/python3

tcp 0 0 172.16.16.11:13989 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.10:8774 0.0.0.0:* LISTEN 42598/httpd

tcp 0 0 172.16.16.12:8774 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 127.0.0.1:199 0.0.0.0:* LISTEN 36415/snmpd

tcp 0 0 172.16.16.10:9000 0.0.0.0:* LISTEN 41679/python3

tcp 0 0 172.16.16.10:5000 0.0.0.0:* LISTEN 31276/httpd

tcp 0 0 172.16.16.10:5672 0.0.0.0:* LISTEN 22406/beam.smp

tcp 0 0 0.0.0.0:25672 0.0.0.0:* LISTEN 22406/beam.smp

tcp 0 0 172.16.16.12:9000 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.12:5000 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.11:13000 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 127.0.0.1:6633 0.0.0.0:* LISTEN 44804/openvswitch-a

tcp 0 0 172.16.16.10:873 0.0.0.0:* LISTEN 41194/rsync

tcp 0 0 172.16.16.11:13385 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.12:1993 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.10:1993 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.10:8778 0.0.0.0:* LISTEN 42045/httpd

tcp 0 0 172.16.16.10:3306 0.0.0.0:* LISTEN 26251/mysqld

tcp 0 0 172.16.16.12:8778 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.12:3306 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.10:11211 0.0.0.0:* LISTEN 21406/memcached

tcp 0 0 0.0.0.0:5355 0.0.0.0:* LISTEN 869/systemd-resolve

tcp 0 0 172.16.16.10:9292 0.0.0.0:* LISTEN 42324/python3

tcp 0 0 172.16.16.11:13004 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.12:9292 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.11:13292 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.11:13774 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 0.0.0.0:111 0.0.0.0:* LISTEN 1/systemd

tcp 0 0 127.0.0.1:6640 0.0.0.0:* LISTEN 3457/ovsdb-server

tcp 0 0 172.16.16.10:8080 0.0.0.0:* LISTEN 43001/python3

tcp 0 0 172.16.16.10:6000 0.0.0.0:* LISTEN 40611/python3

tcp 0 0 172.16.16.12:8080 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.11:13808 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.10:6385 0.0.0.0:* LISTEN 43598/httpd

tcp 0 0 172.16.16.10:6001 0.0.0.0:* LISTEN 40061/python3

tcp 0 0 172.16.16.10:4369 0.0.0.0:* LISTEN 22309/epmd

tcp 0 0 127.0.0.1:4369 0.0.0.0:* LISTEN 22309/epmd

tcp 0 0 172.16.16.12:6385 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.10:6002 0.0.0.0:* LISTEN 39834/python3

tcp 0 0 172.16.16.11:13778 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN 14876/sshd

tcp 0 0 172.16.16.10:8888 0.0.0.0:* LISTEN 41413/httpd

tcp 0 0 127.0.0.1:15672 0.0.0.0:* LISTEN 22406/beam.smp

tcp 0 0 172.16.16.11:3000 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.12:8888 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.10:5050 0.0.0.0:* LISTEN 46292/python3

tcp 0 0 172.16.16.12:5050 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.11:13050 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.10:8989 0.0.0.0:* LISTEN 44835/python3

tcp 0 0 172.16.16.10:35357 0.0.0.0:* LISTEN 31276/httpd

tcp 0 0 172.16.16.12:8989 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.12:35357 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.10:9696 0.0.0.0:* LISTEN 38913/server.log

tcp 0 0 172.16.16.11:13888 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.12:9696 0.0.0.0:* LISTEN 21893/haproxy

tcp 0 0 172.16.16.11:13696 0.0.0.0:* LISTEN 21893/haproxy

tcp6 0 0 :::5355 :::* LISTEN 869/systemd-resolve

tcp6 0 0 :::111 :::* LISTEN 1/systemd

tcp6 0 0 ::1:4369 :::* LISTEN 22309/epmd

tcp6 0 0 :::8787 :::* LISTEN 4983/httpd

tcp6 0 0 :::22 :::* LISTEN 14876/sshd

tcp6 0 0 :::8088 :::* LISTEN 46043/httpd

udp 0 0 127.0.0.53:53 0.0.0.0:* 869/systemd-resolve

udp 0 0 0.0.0.0:67 0.0.0.0:* 46516/dnsmasq

udp 0 0 172.16.16.10:69 0.0.0.0:* 45850/in.tftpd

udp 0 0 0.0.0.0:111 0.0.0.0:* 1/systemd

udp 0 0 0.0.0.0:123 0.0.0.0:* 6516/chronyd

udp 0 0 0.0.0.0:161 0.0.0.0:* 36415/snmpd

udp 0 0 127.0.0.1:323 0.0.0.0:* 6516/chronyd

udp 0 0 0.0.0.0:5355 0.0.0.0:* 869/systemd-resolve

udp6 0 0 :::111 :::* 1/systemd

udp6 0 0 ::1:161 :::* 36415/snmpd

udp6 0 0 ::1:323 :::* 6516/chronyd

udp6 0 0 :::5355 :::* 869/systemd-resolve
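
To see at a glance which process owns which port, the netstat output can be grouped by program name. The following is a minimal Python sketch, assuming the output has been saved as text. `listeners_by_program` is a hypothetical helper name, and the sample lines are copied from the output above.

```python
from collections import defaultdict

# A few lines copied from the netstat output above.
SAMPLE = """\
Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name
tcp 0 0 172.16.16.10:8004 0.0.0.0:* LISTEN 37214/httpd
tcp 0 0 172.16.16.12:8004 0.0.0.0:* LISTEN 21893/haproxy
tcp 0 0 172.16.16.11:13000 0.0.0.0:* LISTEN 21893/haproxy
tcp6 0 0 :::8787 :::* LISTEN 4983/httpd
udp 0 0 0.0.0.0:67 0.0.0.0:* 46516/dnsmasq
"""


def listeners_by_program(netstat_text):
    """Return {program name: sorted list of (protocol, local address)}."""
    grouped = defaultdict(list)
    for line in netstat_text.splitlines():
        fields = line.split()
        # Keep only socket lines; this skips the header and blank lines.
        if not fields or not fields[0].startswith(("tcp", "udp")):
            continue
        proto, local_address = fields[0], fields[3]
        # The last column has the form "PID/Program name".
        program = fields[-1].partition("/")[2] or fields[-1]
        grouped[program].append((proto, local_address))
    return {program: sorted(sockets) for program, sockets in grouped.items()}


if __name__ == "__main__":
    for program, sockets in sorted(listeners_by_program(SAMPLE).items()):
        print(f"{program}: {sockets}")
```

In the full output, haproxy holds the 172.16.16.11 and 172.16.16.12 addresses, while the services themselves bind to 172.16.16.10.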

Set-up director routing and container images

Add the iptables FORWARD and MASQUERADE rules for the external network:

[stack@e26-director ~]$ sudo vi /etc/sysconfig/iptables

...

-A FORWARD -s 172.16.14.0/24 -m state --state NEW,RELATED,ESTABLISHED -m comment --comment "139 routed_network forward source 172.16.14.0/24 ipv4" -j ACCEPT

-A FORWARD -d 172.16.14.0/24 -m state --state NEW,RELATED,ESTABLISHED -m comment --comment "140 routed_network forward destinations 172.16.14.0/24 ipv4" -j ACCEPT

...

-A POSTROUTING -s 172.16.14.0/24 -m state --state NEW,RELATED,ESTABLISHED -m comment --comment "142 routed_network masquerade 172.16.14.0/24 ipv4" -j MASQUERADE

...

Restart iptables:

(undercloud) [stack@e26-director ~]$ sudo systemctl restart iptables

Prepare overcloud images

Install the packages that provide the overcloud and Ironic Python Agent images:

[stack@e26-director ~]$ sudo dnf install rhosp-director-images rhosp-director-images-ipa -y

Create directories for system images:

(undercloud) [stack@e26-director ~]$ sudo mkdir /var/images

(undercloud) [stack@e26-director ~]$ sudo chown stack:stack /var/images

(undercloud) [stack@e26-director ~]$ cd /var/images

(undercloud) [stack@e26-director images]$ for i in /usr/share/rhosp-director-images/overcloud-full-latest-16.1.tar /usr/share/rhosp-director-images/ironic-python-agent-latest-16.1.tar; do tar -xvf $i; done

overcloud-full.qcow2

overcloud-full.initrd

overcloud-full.vmlinuz

overcloud-full-rpm.manifest

overcloud-full-signature.manifest

ironic-python-agent.initramfs

ironic-python-agent.kernel

Load the undercloud environment credentials:

[stack@e26-director ~]$ source stackrc

Upload Ironic images into director’s Glance:

(undercloud) [stack@e26-director ~]$ openstack overcloud image upload --image-path /var/images

Image "overcloud-full-vmlinuz" was uploaded.

+--------------------------------------+------------------------+-------------+---------+--------+

| ID | Name | Disk Format | Size | Status |

+--------------------------------------+------------------------+-------------+---------+--------+

| c7e6c0b9-fa81-47f2-a6ca-fa134d82448f | overcloud-full-vmlinuz | aki | 8920432 | active |

+--------------------------------------+------------------------+-------------+---------+--------+

Image "overcloud-full-initrd" was uploaded.

+--------------------------------------+-----------------------+-------------+----------+--------+

| ID | Name | Disk Format | Size | Status |

+--------------------------------------+-----------------------+-------------+----------+--------+

| 45e7924c-ee32-4ed5-b710-cbc0ec261dcc | overcloud-full-initrd | ari | 72690081 | active |

+--------------------------------------+-----------------------+-------------+----------+--------+

Image "overcloud-full" was uploaded.

+--------------------------------------+----------------+-------------+------------+--------+

| ID | Name | Disk Format | Size | Status |

+--------------------------------------+----------------+-------------+------------+--------+

| 2aff792c-e534-4a04-9d45-070f26824326 | overcloud-full | qcow2 | 1095499776 | active |

+--------------------------------------+----------------+-------------+------------+--------+

We can check the loaded images:

(undercloud) [stack@e26-director ~]$ openstack image list

+--------------------------------------+------------------------+--------+

| ID | Name | Status |

+--------------------------------------+------------------------+--------+

| 2aff792c-e534-4a04-9d45-070f26824326 | overcloud-full | active |

| 45e7924c-ee32-4ed5-b710-cbc0ec261dcc | overcloud-full-initrd | active |

| c7e6c0b9-fa81-47f2-a6ca-fa134d82448f | overcloud-full-vmlinuz | active |

+--------------------------------------+------------------------+--------+

We can check that the DNS server is set on the control plane subnet:

(undercloud) [stack@e26-director ~]$ openstack subnet list

+--------------------------------------+-----------------+--------------------------------------+----------------+

| ID | Name | Network | Subnet |

+--------------------------------------+-----------------+--------------------------------------+----------------+

| 62d9567a-b712-4bda-a896-f4eb792eeb85 | ctlplane-subnet | 63ca867b-7662-4ca7-9207-ea1a0e5ca9de | 172.16.16.0/24 |

+--------------------------------------+-----------------+--------------------------------------+----------------+

(undercloud) [stack@e26-director ~]$ openstack subnet show ctlplane-subnet

+-------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+-------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| allocation_pools | 172.16.16.201-172.16.16.220 |

| cidr | 172.16.16.0/24 |

| created_at | 2020-10-30T11:43:28Z |

| description | |

| dns_nameservers | 10.19.96.1 |

| enable_dhcp | True |

| gateway_ip | 172.16.16.10 |

| host_routes | |

| id | 62d9567a-b712-4bda-a896-f4eb792eeb85 |

| ip_version | 4 |

| ipv6_address_mode | None |

| ipv6_ra_mode | None |

| location | cloud='', project.domain_id=, project.domain_name='Default', project.id='63cd724dec5646d39ba904e110687cc5', project.name='admin', region_name='', zone= |

| name | ctlplane-subnet |

| network_id | 63ca867b-7662-4ca7-9207-ea1a0e5ca9de |

| prefix_length | None |

| project_id | 63cd724dec5646d39ba904e110687cc5 |

| revision_number | 0 |

| segment_id | None |

| service_types | |

| subnetpool_id | None |

| tags | |

| updated_at | 2020-10-30T11:43:28Z |

+-------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

The dns_nameservers field is set, so we are good.

Create a nodes.json file describing the 6 Dell servers we want to manage:

{

"nodes": [

{

"capabilities": "profile:control,boot_option:local",

"arch": "x86_64",

"cpu": "2",

"disk": "20",

"mac": [

"E4:43:4B:B2:76:B8"

],

"memory": "1024",

"pm_addr": "XXXXXXXX-e26-h19-740xd.XXXXXXXX.redhat.com",

"pm_password": "XXXXXXXX",

"pm_type": "pxe_ipmitool",

"pm_user": "ironic"

},

{

"capabilities": "profile:control,boot_option:local",

"arch": "x86_64",

"cpu": "2",

"disk": "20",

"mac": [

"E4:43:4B:B2:6D:D4"

],

"memory": "1024",

"pm_addr": "XXXXXXXX-e26-h21-740xd.XXXXXXXX.redhat.com",

"pm_password": "XXXXXXXX",

"pm_type": "pxe_ipmitool",

"pm_user": "ironic"

},

{

"capabilities": "profile:control,boot_option:local",

"arch": "x86_64",

"cpu": "2",

"disk": "20",

"mac": [

"E4:43:4B:B2:7C:D0"

],

"memory": "1024",

"pm_addr": "XXXXXXXX-e26-h23-740xd.XXXXXXXX.redhat.com",

"pm_password": "XXXXXXXX",

"pm_type": "pxe_ipmitool",

"pm_user": "ironic"

},

{

"capabilities": "profile:compute,boot_option:local",

"arch": "x86_64",

"cpu": "2",

"disk": "20",

"mac": [

"E4:43:4B:B2:75:BC"

],

"memory": "1024",

"pm_addr": "XXXXXXXX-e26-h25-740xd.XXXXXXXX.redhat.com",

"pm_password": "XXXXXXXX",

"pm_type": "pxe_ipmitool",

"pm_user": "ironic"

},

{

"capabilities": "profile:compute,boot_option:local",

"arch": "x86_64",

"cpu": "2",

"disk": "20",

"mac": [

"E4:43:4B:B2:76:30"

],

"memory": "1024",

"pm_addr": "XXXXXXXX-e26-h27-740xd.XXXXXXXX.redhat.com",

"pm_password": "XXXXXXXX",

"pm_type": "pxe_ipmitool",

"pm_user": "ironic"

},

{

"capabilities": "profile:compute,boot_option:local",

"arch": "x86_64",

"cpu": "2",

"disk": "20",

"mac": [

"E4:43:4B:B2:7D:68"

],

"memory": "1024",

"pm_addr": "XXXXXXXX-e26-h29-740xd.XXXXXXXX.redhat.com",

"pm_password": "XXXXXXXX",

"pm_type": "pxe_ipmitool",

"pm_user": "ironic"

}

]

}
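
The six entries differ only in profile, MAC address and BMC address, so the file can also be generated instead of being written by hand. The following is a minimal Python sketch under that assumption. `build_nodes` and `SERVERS` are hypothetical names, and the values are copied from the file above, with the masked `XXXXXXXX` parts left as they are.

```python
import json

# (profile, MAC of the provisioning NIC, BMC address), copied from the file above.
SERVERS = [
    ("control", "E4:43:4B:B2:76:B8", "XXXXXXXX-e26-h19-740xd.XXXXXXXX.redhat.com"),
    ("control", "E4:43:4B:B2:6D:D4", "XXXXXXXX-e26-h21-740xd.XXXXXXXX.redhat.com"),
    ("control", "E4:43:4B:B2:7C:D0", "XXXXXXXX-e26-h23-740xd.XXXXXXXX.redhat.com"),
    ("compute", "E4:43:4B:B2:75:BC", "XXXXXXXX-e26-h25-740xd.XXXXXXXX.redhat.com"),
    ("compute", "E4:43:4B:B2:76:30", "XXXXXXXX-e26-h27-740xd.XXXXXXXX.redhat.com"),
    ("compute", "E4:43:4B:B2:7D:68", "XXXXXXXX-e26-h29-740xd.XXXXXXXX.redhat.com"),
]


def build_nodes(servers, pm_user="ironic", pm_password="XXXXXXXX"):
    """Build the nodes.json structure used above from a list of servers."""
    macs = [mac for _profile, mac, _addr in servers]
    if len(set(macs)) != len(macs):
        raise ValueError("duplicate MAC address in server list")
    nodes = []
    for profile, mac, pm_addr in servers:
        nodes.append({
            "capabilities": f"profile:{profile},boot_option:local",
            "arch": "x86_64",
            "cpu": "2",
            "disk": "20",
            "mac": [mac],
            "memory": "1024",
            "pm_addr": pm_addr,
            "pm_password": pm_password,
            "pm_type": "pxe_ipmitool",
            "pm_user": pm_user,
        })
    return {"nodes": nodes}


if __name__ == "__main__":
    # Redirect the output to ~/nodes.json to get the file used in the next steps.
    print(json.dumps(build_nodes(SERVERS), indent=2))
```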

Validate the JSON file:

(undercloud) [stack@e26-director ~]$ openstack overcloud node import --validate-only ~/nodes.json

Waiting for messages on queue 'tripleo' with no timeout.

Successfully validated environment file

Import the nodes.json file:

(undercloud) [stack@e26-director ~]$ openstack overcloud node import ~/nodes.json

Waiting for messages on queue 'tripleo' with no timeout.

6 node(s) successfully moved to the "manageable" state.

Successfully registered node UUID e4b01b91-81a1-4df0-9620-fbe5cfdf2516

Successfully registered node UUID f90e0f2f-121d-4759-8a6d-a4bf69cab769

Successfully registered node UUID 45c912d0-2dbd-425a-95bc-0bcb7aa104a4

Successfully registered node UUID a57ef390-3edc-4449-8116-e2fbda6b6d05

Successfully registered node UUID e1df9b96-5e3a-4e13-9972-2b62a12e7cb7

Successfully registered node UUID e07b0890-feb5-4082-b635-185abaf5da85

Check nodes imported:

(undercloud) [stack@e26-director ~]$ openstack baremetal node list

+--------------------------------------+------+---------------+-------------+--------------------+-------------+

| UUID | Name | Instance UUID | Power State | Provisioning State | Maintenance |

+--------------------------------------+------+---------------+-------------+--------------------+-------------+

| e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | None | None | power off | available | False |

| f90e0f2f-121d-4759-8a6d-a4bf69cab769 | None | None | power off | available | False |

| 45c912d0-2dbd-425a-95bc-0bcb7aa104a4 | None | None | power off | available | False |

| a57ef390-3edc-4449-8116-e2fbda6b6d05 | None | None | power off | available | False |

| e1df9b96-5e3a-4e13-9972-2b62a12e7cb7 | None | None | power off | available | False |

| e07b0890-feb5-4082-b635-185abaf5da85 | None | None | power off | available | False |

+--------------------------------------+------+---------------+-------------+--------------------+-------------+

Introspect all the nodes:

(undercloud) [stack@e26-director ~]$ openstack overcloud node introspect --all-manageable --provide

Waiting for introspection to finish...

Waiting for messages on queue 'tripleo' with no timeout.

Introspection of node completed:f90e0f2f-121d-4759-8a6d-a4bf69cab769. Status:SUCCESS. Errors:None

Introspection of node completed:e1df9b96-5e3a-4e13-9972-2b62a12e7cb7. Status:SUCCESS. Errors:None

Introspection of node completed:a57ef390-3edc-4449-8116-e2fbda6b6d05. Status:SUCCESS. Errors:None

Introspection of node completed:45c912d0-2dbd-425a-95bc-0bcb7aa104a4. Status:SUCCESS. Errors:None

Introspection of node completed:e07b0890-feb5-4082-b635-185abaf5da85. Status:SUCCESS. Errors:None

Introspection of node completed:e4b01b91-81a1-4df0-9620-fbe5cfdf2516. Status:SUCCESS. Errors:None

Successfully introspected 6 node(s).

Introspection completed.

Waiting for messages on queue 'tripleo' with no timeout.

6 node(s) successfully moved to the "available" state.

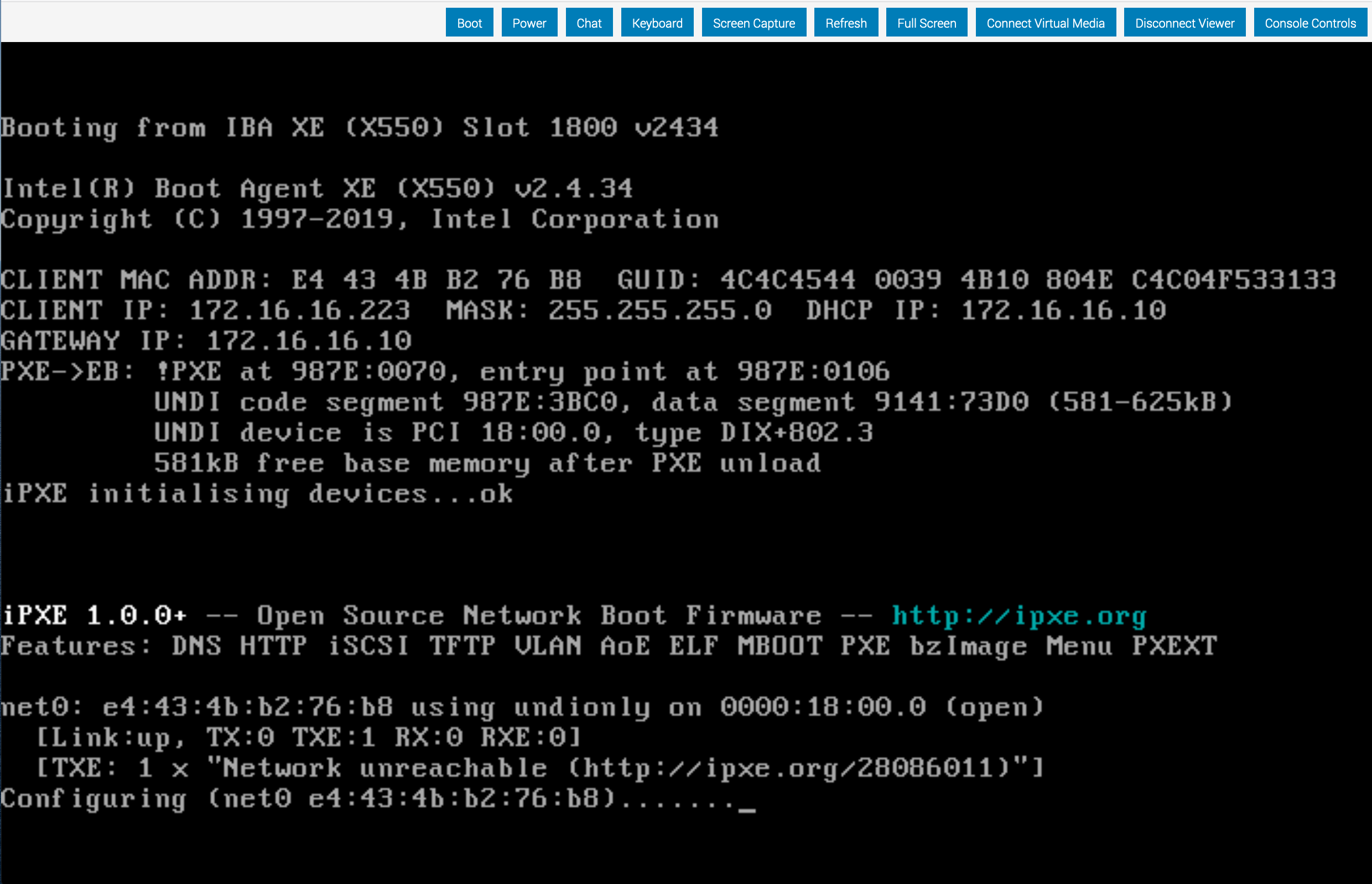

PXE boot during introspection

All nodes are off:

(undercloud) [stack@e26-director ~]$ openstack baremetal node list

+--------------------------------------+------+---------------+-------------+--------------------+-------------+

| UUID | Name | Instance UUID | Power State | Provisioning State | Maintenance |

+--------------------------------------+------+---------------+-------------+--------------------+-------------+

| e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | None | None | power off | available | False |

| f90e0f2f-121d-4759-8a6d-a4bf69cab769 | None | None | power off | available | False |

| 45c912d0-2dbd-425a-95bc-0bcb7aa104a4 | None | None | power off | available | False |

| a57ef390-3edc-4449-8116-e2fbda6b6d05 | None | None | power off | available | False |

| e1df9b96-5e3a-4e13-9972-2b62a12e7cb7 | None | None | power off | available | False |

| e07b0890-feb5-4082-b635-185abaf5da85 | None | None | power off | available | False |

+--------------------------------------+------+---------------+-------------+--------------------+-------------+

Profiles are allocated:

(undercloud) [stack@e26-director ~]$ openstack overcloud profiles list

+--------------------------------------+-----------+-----------------+-----------------+-------------------+

| Node UUID | Node Name | Provision State | Current Profile | Possible Profiles |

+--------------------------------------+-----------+-----------------+-----------------+-------------------+

| e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | | available | control | |

| f90e0f2f-121d-4759-8a6d-a4bf69cab769 | | available | control | |

| 45c912d0-2dbd-425a-95bc-0bcb7aa104a4 | | available | control | |

| a57ef390-3edc-4449-8116-e2fbda6b6d05 | | available | compute | |

| e1df9b96-5e3a-4e13-9972-2b62a12e7cb7 | | available | compute | |

| e07b0890-feb5-4082-b635-185abaf5da85 | | available | compute | |

+--------------------------------------+-----------+-----------------+-----------------+-------------------+
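
The profile counts should match the number of controllers and computes we plan to deploy (3 and 3). As a minimal sketch, the table printed by the CLI can be parsed and counted in Python. `parse_table` is a hypothetical helper, the rows are copied from the output above, and the border lines are shortened because the parser ignores them.

```python
from collections import Counter

# Output of "openstack overcloud profiles list", copied from above.
TABLE = """\
+-----------+-----------+-----------------+-----------------+-------------------+
| Node UUID | Node Name | Provision State | Current Profile | Possible Profiles |
+-----------+-----------+-----------------+-----------------+-------------------+
| e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | | available | control | |
| f90e0f2f-121d-4759-8a6d-a4bf69cab769 | | available | control | |
| 45c912d0-2dbd-425a-95bc-0bcb7aa104a4 | | available | control | |
| a57ef390-3edc-4449-8116-e2fbda6b6d05 | | available | compute | |
| e1df9b96-5e3a-4e13-9972-2b62a12e7cb7 | | available | compute | |
| e07b0890-feb5-4082-b635-185abaf5da85 | | available | compute | |
+-----------+-----------+-----------------+-----------------+-------------------+
"""


def parse_table(text):
    """Turn an ASCII table into a list of {column name: cell} dictionaries."""
    rows = []
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # skip the +---+ border lines
        # Drop the outer pipes, then split the remaining cells.
        rows.append([cell.strip() for cell in line[1:-1].split("|")])
    header, body = rows[0], rows[1:]
    return [dict(zip(header, row)) for row in body]


def profile_counts(text):
    """Count how many nodes carry each profile."""
    return Counter(row["Current Profile"] for row in parse_table(text))


if __name__ == "__main__":
    print(dict(profile_counts(TABLE)))
```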

Check interfaces:

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection interface list e4b01b91-81a1-4df0-9620-fbe5cfdf2516

+-----------+-------------------+--------------------------------+-------------------+----------------+

| Interface | MAC Address | Switch Port VLAN IDs | Switch Chassis ID | Switch Port ID |

+-----------+-------------------+--------------------------------+-------------------+----------------+

| eno3 | e4:43:4b:b2:76:ba | [100] | 0c:86:10:af:b3:60 | ge-6/0/28 |

| eno1 | e4:43:4b:b2:76:b8 | [1140] | c0:03:80:f1:1b:40 | 526 |

| ens7f0 | 40:a6:b7:01:50:20 | [1138, 1139, 1140, 1141, 1142] | c0:bf:a7:1e:54:60 | et-0/0/5:2 |

| eno4 | e4:43:4b:b2:76:bb | [] | None | None |

| eno2 | e4:43:4b:b2:76:b9 | [1141] | c0:03:80:f1:1b:40 | 527 |

| ens7f1 | 40:a6:b7:01:50:21 | [1139, 1140, 1141, 1142, 1143] | c0:bf:a7:1e:54:60 | et-0/0/5:3 |

+-----------+-------------------+--------------------------------+-------------------+----------------+

...

Save the introspection data of the first node:

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | jq . > /tmp/pp

Fix boot disk

Install RHEL on one of the nodes to check the disk configuration:

[egallen@e26-h15-740xd ~]$ sudo lsblk -o KNAME,TYPE,SIZE,MODEL

KNAME TYPE SIZE MODEL

sda disk 1.7T PERC H740P Adp

sda1 part 512M

sda2 part 1.7T

sdb disk 1.7T PERC H740P Adp

sdc disk 1.7T PERC H740P Adp

sdd disk 1.7T PERC H740P Adp

sde disk 1.7T PERC H740P Adp

sdf disk 1.7T PERC H740P Adp

sdg disk 1.7T PERC H740P Adp

sdh disk 1.7T PERC H740P Adp

dm-0 lvm 1.6T

dm-1 lvm 8G

nvme0n1 disk 1.5T Dell Express Flash PM1725b 1.6TB AIC

Check whether the root_disk matches the disk on which you want to install OSP:

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | jq . | grep -A1 root_disk

"root_disk": {

"name": "/dev/nvme0n1",

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save f90e0f2f-121d-4759-8a6d-a4bf69cab769 | jq . | grep -A1 root_disk

"root_disk": {

"name": "/dev/nvme0n1",

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save 45c912d0-2dbd-425a-95bc-0bcb7aa104a4 | jq . | grep -A1 root_disk

"root_disk": {

"name": "/dev/nvme0n1",

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save a57ef390-3edc-4449-8116-e2fbda6b6d05 | jq . | grep -A1 root_disk

"root_disk": {

"name": "/dev/nvme0n1",

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save e1df9b96-5e3a-4e13-9972-2b62a12e7cb7 | jq . | grep -A1 root_disk

"root_disk": {

"name": "/dev/nvme0n1",

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save e07b0890-feb5-4082-b635-185abaf5da85 | jq . | grep -A1 root_disk

"root_disk": {

"name": "/dev/nvme0n1",

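Introspection selected the NVMe drive on every node, but we want to install on /dev/sda, so we look up the serial of /dev/sda on each node: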

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | jq . | grep -A5 /dev/sda | grep serial

"serial": "64cd98f0b3988b0025c3387067731ae5",

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save f90e0f2f-121d-4759-8a6d-a4bf69cab769 | jq . | grep -A5 /dev/sda | grep serial

"serial": "64cd98f0b38d830025c3387a7a4b9c3b",

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save 45c912d0-2dbd-425a-95bc-0bcb7aa104a4 | jq . | grep -A5 /dev/sda | grep serial

"serial": "64cd98f0b38d800025c3387483168c13",

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save a57ef390-3edc-4449-8116-e2fbda6b6d05 | jq . | grep -A5 /dev/sda | grep serial

"serial": "64cd98f0b388fa0025c338353d3e542e",

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save e1df9b96-5e3a-4e13-9972-2b62a12e7cb7 | jq . | grep -A5 /dev/sda | grep serial

"serial": "64cd98f0b3989c0025c33889a97c3a2d",

(undercloud) [stack@e26-director ~]$ openstack baremetal introspection data save e07b0890-feb5-4082-b635-185abaf5da85 | jq . | grep -A5 /dev/sda | grep serial

"serial": "64cd98f0b398910025c33877783a4ab0",

We can force the root disk for each node:

openstack baremetal node set --property root_device='{"serial":"64cd98f0b3988b0025c3387067731ae5"}' e4b01b91-81a1-4df0-9620-fbe5cfdf2516

openstack baremetal node set --property root_device='{"serial":"64cd98f0b38d830025c3387a7a4b9c3b"}' f90e0f2f-121d-4759-8a6d-a4bf69cab769

openstack baremetal node set --property root_device='{"serial":"64cd98f0b38d800025c3387483168c13"}' 45c912d0-2dbd-425a-95bc-0bcb7aa104a4

openstack baremetal node set --property root_device='{"serial":"64cd98f0b388fa0025c338353d3e542e"}' a57ef390-3edc-4449-8116-e2fbda6b6d05

openstack baremetal node set --property root_device='{"serial":"64cd98f0b3989c0025c33889a97c3a2d"}' e1df9b96-5e3a-4e13-9972-2b62a12e7cb7

openstack baremetal node set --property root_device='{"serial":"64cd98f0b398910025c33877783a4ab0"}' e07b0890-feb5-4082-b635-185abaf5da85
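
The lookup and the six commands above can be derived from the saved introspection data. The following is a minimal Python sketch. It assumes that the data saved by `openstack baremetal introspection data save` lists the disks under `inventory.disks` with `name` and `serial` keys. `root_device_command` is a hypothetical helper, and the NVMe serial in the sample is a placeholder, not a real value.

```python
import json
import shlex

# Reduced sample of the introspection data of the first node.
# Assumption: disks are listed under inventory.disks; the NVMe serial is a placeholder.
SAMPLE = {
    "root_disk": {"name": "/dev/nvme0n1"},
    "inventory": {
        "disks": [
            {"name": "/dev/sda", "serial": "64cd98f0b3988b0025c3387067731ae5"},
            {"name": "/dev/nvme0n1", "serial": "PLACEHOLDER-NVME-SERIAL"},
        ]
    },
}


def root_device_command(node_uuid, introspection, device="/dev/sda"):
    """Build the command that pins the root device of a node by disk serial."""
    for disk in introspection["inventory"]["disks"]:
        if disk["name"] == device:
            # Compact JSON, quoted so the shell passes it as one argument.
            hint = json.dumps({"serial": disk["serial"]}, separators=(",", ":"))
            return (
                "openstack baremetal node set --property "
                f"root_device={shlex.quote(hint)} {node_uuid}"
            )
    raise LookupError(f"{device} not found on node {node_uuid}")


if __name__ == "__main__":
    print(root_device_command("e4b01b91-81a1-4df0-9620-fbe5cfdf2516", SAMPLE))
```

Matching on the serial rather than on the device name keeps the hint stable even if the kernel enumerates the disks in a different order on the next boot.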

Install an NFS server

Install NFS utils:

[egallen@e26-h15-740xd new]$ sudo yum install nfs-utils

Prepare the NVMe partition:

[egallen@e26-h15-740xd ~]$ sudo fdisk /dev/nvme0n1

Welcome to fdisk (util-linux 2.32.1).

Changes will remain in memory only, until you decide to write them.

Be careful before using the write command.

Command (m for help): n

Partition type

p primary (0 primary, 0 extended, 4 free)

e extended (container for logical partitions)

Select (default p):

Using default response p.

Partition number (1-4, default 1):

First sector (2048-3125627567, default 2048):

Last sector, +sectors or +size{K,M,G,T,P} (2048-3125627567, default 3125627567):

Created a new partition 1 of type 'Linux' and of size 1.5 TiB.

Command (m for help): w

The partition table has been altered.

Calling ioctl() to re-read partition table.

Syncing disks.

Format the partition:

[egallen@e26-h15-740xd ~]$ sudo mkfs.xfs /dev/nvme0n1p1

meta-data=/dev/nvme0n1p1 isize=512 agcount=4, agsize=97675798 blks

= sectsz=512 attr=2, projid32bit=1

= crc=1 finobt=1, sparse=1, rmapbt=0

= reflink=1

data = bsize=4096 blocks=390703190, imaxpct=5

= sunit=0 swidth=0 blks

naming =version 2 bsize=4096 ascii-ci=0, ftype=1

log =internal log bsize=4096 blocks=190773, version=2

= sectsz=512 sunit=0 blks, lazy-count=1

realtime =none extsz=4096 blocks=0, rtextents=0

Mount the partition:

[egallen@e26-h15-740xd ~]$ sudo mkdir /var/nfs

[egallen@e26-h15-740xd ~]$ echo "/dev/nvme0n1p1 /var/nfs xfs defaults 0 0" | sudo tee -a /etc/fstab

[egallen@e26-h15-740xd ~]$ sudo mount /var/nfs

[root@e26-h15-740xd ]# sudo mkdir -p /var/nfs/cinder

Setup the shares:

[root@rhsec-service ~]# sudo cat << EOF > /etc/exports

/var/nfs/cinder 172.16.16.0/24(rw,insecure,no_root_squash,anonuid=1000,anongid=1000)

EOF

Enable the services:

[root@rhsec-service ~]# systemctl enable rpcbind

[root@rhsec-service ~]# systemctl enable nfs-server

Created symlink from /etc/systemd/system/multi-user.target.wants/nfs-server.service to /usr/lib/systemd/system/nfs-server.service.

[root@rhsec-service ~]# systemctl restart rpcbind

[root@rhsec-service ~]# systemctl restart nfs-server

Check the service status:

[root@e26-h15-740xd new]# systemctl status nfs-server

● nfs-server.service - NFS server and services

Loaded: loaded (/usr/lib/systemd/system/nfs-server.service; enabled; vendor preset: disabled)

Drop-In: /run/systemd/generator/nfs-server.service.d

└─order-with-mounts.conf

Active: active (exited) since Thu 2020-10-29 00:06:32 UTC; 2s ago

Process: 12518 ExecStopPost=/usr/sbin/exportfs -f (code=exited, status=0/SUCCESS)

Process: 12514 ExecStopPost=/usr/sbin/exportfs -au (code=exited, status=0/SUCCESS)

Process: 12565 ExecStart=/bin/sh -c if systemctl -q is-active gssproxy; then systemctl reload gssproxy ; fi (code=exited, status=0/SUCCESS)

Process: 12550 ExecStart=/usr/sbin/rpc.nfsd (code=exited, status=0/SUCCESS)

Process: 12547 ExecStartPre=/usr/sbin/exportfs -r (code=exited, status=0/SUCCESS)

Main PID: 12565 (code=exited, status=0/SUCCESS)

Prepare the templates

Generate the templates:

[stack@e26-director ~]$ cd /usr/share/openstack-tripleo-heat-templates

[stack@e26-director openstack-tripleo-heat-templates]$ ./tools/process-templates.py -o ~/openstack-tripleo-heat-templates-rendered

[stack@e26-director config]$ ls /home/stack/openstack-tripleo-heat-templates-rendered/network/config

2-linux-bonds-vlans bond-with-vlans multiple-nics multiple-nics-vlans single-nic-linux-bridge-vlans single-nic-vlans

Prepare the templates:

[stack@e26-director config]$ cp /home/stack/openstack-tripleo-heat-templates-rendered/network/config/bond-with-vlans/compute.yaml /home/stack/templates/nic-config/

[stack@e26-director config]$ cp /home/stack/openstack-tripleo-heat-templates-rendered/network/config/bond-with-vlans/controller.yaml /home/stack/templates/nic-config/

Installation script:

[stack@e26-director ~]$ cat overcloud-deploy.sh

#!/bin/bash

source stackrc

time openstack overcloud deploy \

-n ~/templates/network_data.yaml \

-r ~/templates/roles_data.yaml \

--answers-file ~/answers.yaml \

--overcloud-ssh-user heat-admin \

--overcloud-ssh-key ~/.ssh/id_rsa

Check the answers.yaml configuration:

[stack@e26-director ~]$ cat answers.yaml

templates: /usr/share/openstack-tripleo-heat-templates/

environments:

- /home/stack/templates/environments/global.yaml

- /home/stack/containers-prepare-parameter.yaml

- /usr/share/openstack-tripleo-heat-templates/environments/network-environment.yaml

- /usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml

- /home/stack/templates/environments/network.yaml

- /usr/share/openstack-tripleo-heat-templates/environments/disable-telemetry.yaml

- /usr/share/openstack-tripleo-heat-templates/environments/services/octavia.yaml

Check the global environment configuration:

[stack@e26-director ~]$ cat templates/environments/global.yaml

parameter_defaults:

CloudDomain: lan.redhat.com

CloudName: cloud.lan.redhat.com

ControllerHostnameFormat: 'controller-%index%'

ControllerCount: 3

OvercloudControllerFlavor: control

ComputeHostnameFormat: 'compute-%index%'

ComputeCount: 3

OvercloudComputeFlavor: compute

NovaEnableRbdBackend: false

# Glance

#GlanceBackend: file

GlanceNfsEnabled: false

#GlanceNfsShare: '172.16.16.1:/var/nfs/glance'

#GlanceNfsOptions: 'rw,sync,context=system_u:object_r:svirt_sandbox_file_t:s0'

# Cinder

CinderEnableIscsiBackend: false

CinderEnableRbdBackend: false

CinderEnableNfsBackend: true

CinderNfsServers: '172.16.16.1:/var/nfs/cinder'

CinderNfsMountOptions: 'rw,sync'
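
The Cinder NFS backend only works if the share named in `CinderNfsServers` is the path exported by the NFS server, and if the overcloud nodes reach it from an allowed subnet. The following is a minimal Python sketch of that consistency check. It assumes the nodes mount the share from their control plane addresses (the 172.16.16.201-172.16.16.220 pool shown earlier). `parse_export` and `share_is_consistent` are hypothetical helpers.

```python
import ipaddress

# Values copied from /etc/exports, global.yaml and the ctlplane-subnet above.
EXPORT_LINE = (
    "/var/nfs/cinder "
    "172.16.16.0/24(rw,insecure,no_root_squash,anonuid=1000,anongid=1000)"
)
CINDER_NFS_SERVER = "172.16.16.1:/var/nfs/cinder"
CTLPLANE_POOL = ("172.16.16.201", "172.16.16.220")


def parse_export(line):
    """Split a single-client /etc/exports line into (path, allowed network)."""
    path, client = line.split(None, 1)
    cidr = client.split("(", 1)[0]
    return path, ipaddress.ip_network(cidr)


def share_is_consistent(export_line, nfs_server, pool):
    """Check the share path and that the whole client pool may mount it."""
    export_path, allowed = parse_export(export_line)
    _host, share_path = nfs_server.split(":", 1)
    start, end = (ipaddress.ip_address(ip) for ip in pool)
    # A CIDR is a contiguous range, so checking both ends covers the pool.
    return share_path == export_path and start in allowed and end in allowed


if __name__ == "__main__":
    print(share_is_consistent(EXPORT_LINE, CINDER_NFS_SERVER, CTLPLANE_POOL))
```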

Check the network data configuration:

[stack@e26-director ~]$ cat templates/network_data.yaml

# List of networks, used for j2 templating of enabled networks

- name: InternalApi

name_lower: internal_api

vip: true

vlan: '101'

ip_subnet: '172.16.11.0/24'

allocation_pools: [{'start': '172.16.11.100', 'end': '172.16.11.200'}]

- name: Tenant

name_lower: tenant

vip: false

vlan: '103'

ip_subnet: '172.16.13.0/24'

allocation_pools: [{'start': '172.16.13.100', 'end': '172.16.13.200'}]

- name: External

name_lower: external

vip: true

vlan: '104'

ip_subnet: '172.16.14.0/24'

allocation_pools: [{'start': '172.16.14.100', 'end': '172.16.14.200'}]

gateway_ip: '172.16.14.10'

- name: Storage

name_lower: storage

vip: true

vlan: '102'

ip_subnet: '172.16.12.0/24'

allocation_pools: [{'start': '172.16.12.100', 'end': '172.16.12.200'}]
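
A typo in an allocation pool or gateway only shows up late in the deployment, so it can be worth checking the values before deploying. The following is a minimal Python sketch using the standard `ipaddress` module. The `NETWORKS` list restates the values of the file above as Python dictionaries, and `pool_errors` is a hypothetical helper.

```python
import ipaddress

# Same values as in the network file above.
NETWORKS = [
    {"name": "InternalApi", "ip_subnet": "172.16.11.0/24",
     "allocation_pools": [{"start": "172.16.11.100", "end": "172.16.11.200"}]},
    {"name": "Tenant", "ip_subnet": "172.16.13.0/24",
     "allocation_pools": [{"start": "172.16.13.100", "end": "172.16.13.200"}]},
    {"name": "External", "ip_subnet": "172.16.14.0/24",
     "allocation_pools": [{"start": "172.16.14.100", "end": "172.16.14.200"}],
     "gateway_ip": "172.16.14.10"},
    {"name": "Storage", "ip_subnet": "172.16.12.0/24",
     "allocation_pools": [{"start": "172.16.12.100", "end": "172.16.12.200"}]},
]


def pool_errors(networks):
    """Return a list of messages for pools or gateways outside their subnet."""
    errors = []
    for net in networks:
        subnet = ipaddress.ip_network(net["ip_subnet"])
        for pool in net["allocation_pools"]:
            start = ipaddress.ip_address(pool["start"])
            end = ipaddress.ip_address(pool["end"])
            if start not in subnet or end not in subnet or start > end:
                errors.append(
                    f"{net['name']}: pool {start}-{end} is not valid in {subnet}"
                )
        gateway = net.get("gateway_ip")
        if gateway and ipaddress.ip_address(gateway) not in subnet:
            errors.append(f"{net['name']}: gateway {gateway} is outside {subnet}")
    return errors


if __name__ == "__main__":
    problems = pool_errors(NETWORKS)
    print("\n".join(problems) if problems else "all pools are inside their subnets")
```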

Check the network environment configuration:

[stack@e26-director ~]$ cat templates/environments/network.yaml

resource_registry:

  OS::TripleO::Controller::Net::SoftwareConfig: ../nic-config/controller.yaml

  OS::TripleO::Compute::Net::SoftwareConfig: ../nic-config/compute.yaml

parameter_defaults:

  DnsServers: [ "10.19.96.1" ]

  NtpServer: "clock.redhat.com"

  TimeZone: 'UTC'

  ControlPlaneDefaultRoute: "172.16.16.10"

  PublicVirtualFixedIPs: [{ 'ip_address' : "172.16.14.5" }]

  NeutronBridgeMappings: "datacentre:br-ex"

  NeutronFlatNetworks: "datacentre"

  NetworkDeploymentActions: ['CREATE','UPDATE']
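The fixed public VIP and the control plane default route both depend on address ranges defined elsewhere in this article. As a rough illustration, the sketch below checks them under two assumptions that are mine rather than the article's: a fixed VIP should sit inside the External subnet but outside its allocation pool, and the default route should be inside the Provisioning subnet from the platform table. The constant and function names are hypothetical.

```python
import ipaddress

# Values copied by hand from this article's listings and network table.
EXTERNAL_SUBNET = "172.16.14.0/24"
EXTERNAL_POOL = ("172.16.14.100", "172.16.14.200")
PUBLIC_VIP = "172.16.14.5"
PROVISIONING_SUBNET = "172.16.16.0/24"
CONTROL_PLANE_ROUTE = "172.16.16.10"


def in_subnet(address, subnet):
    """True when the address belongs to the given subnet."""
    return ipaddress.ip_address(address) in ipaddress.ip_network(subnet)


def in_pool(address, pool):
    """True when the address falls inside the inclusive start/end pool."""
    start, end = (ipaddress.ip_address(a) for a in pool)
    return start <= ipaddress.ip_address(address) <= end


def vip_is_safe(vip, subnet, pool):
    """Assumed rule: a fixed VIP is in the subnet and not in the pool."""
    return in_subnet(vip, subnet) and not in_pool(vip, pool)


if __name__ == "__main__":
    print("public VIP ok:",
          vip_is_safe(PUBLIC_VIP, EXTERNAL_SUBNET, EXTERNAL_POOL))
    print("default route ok:",
          in_subnet(CONTROL_PLANE_ROUTE, PROVISIONING_SUBNET))
```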

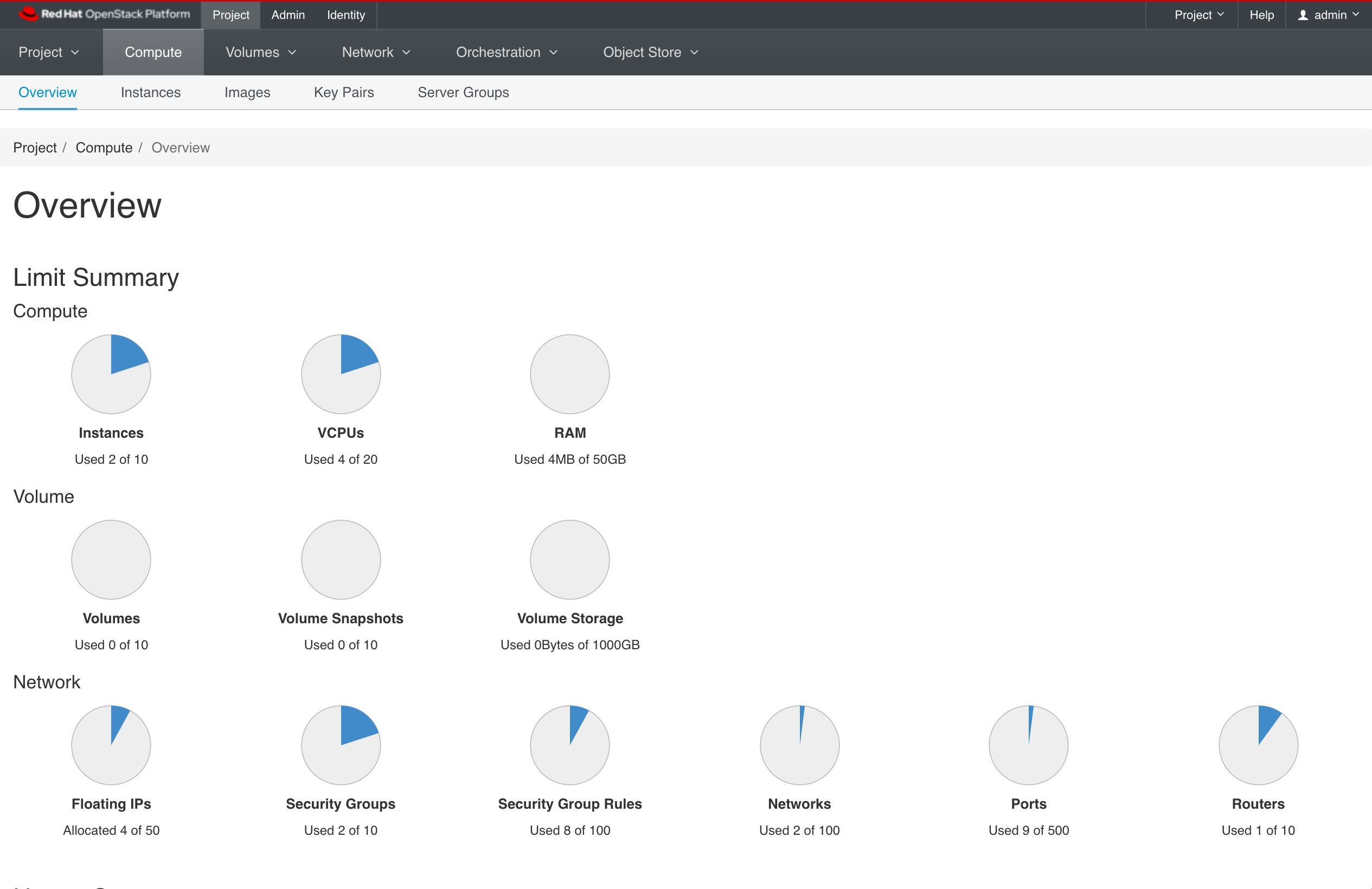

Overcloud installation

The installation took 42 minutes.

(undercloud) [stack@e26-director ~]$ ./overcloud-deploy.sh

...

Ansible passed.

Overcloud configuration completed.

Host 172.16.14.5 not found in /home/stack/.ssh/known_hosts

Overcloud Endpoint: http://172.16.14.5:5000

Overcloud Horizon Dashboard URL: http://172.16.14.5:80/dashboard

Overcloud rc file: /home/stack/overcloudrc

Overcloud Deployed without error

sys:1: ResourceWarning: unclosed <ssl.SSLSocket fd=4, family=AddressFamily.AF_INET, type=SocketKind.SOCK_STREAM, proto=6, laddr=('172.16.16.11', 48750)>

sys:1: ResourceWarning: unclosed <ssl.SSLSocket fd=5, family=AddressFamily.AF_INET, type=SocketKind.SOCK_STREAM, proto=6, laddr=('172.16.16.11', 47478)>

sys:1: ResourceWarning: unclosed <ssl.SSLSocket fd=7, family=AddressFamily.AF_INET, type=SocketKind.SOCK_STREAM, proto=6, laddr=('172.16.16.11', 51680), raddr=('172.16.16.11', 13989)>

real 42m54.775s

user 0m9.339s

sys 0m1.568s

Let’s look at the first steps of this 42-minute deployment.

Step 1: Stack created

(undercloud) [stack@e26-director ~]$ openstack stack list

+--------------------------------------+------------+----------------------------------+--------------------+----------------------+--------------+

| ID | Stack Name | Project | Stack Status | Creation Time | Updated Time |

+--------------------------------------+------------+----------------------------------+--------------------+----------------------+--------------+

| 9b53d8ec-5f99-48b4-afd8-360176dd0959 | overcloud | 63cd724dec5646d39ba904e110687cc5 | CREATE_IN_PROGRESS | 2020-11-14T12:38:43Z | None |

+--------------------------------------+------------+----------------------------------+--------------------+----------------------+--------------+
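Instead of re-running `openstack stack list` by hand, the status can be polled from a second terminal. This is a minimal sketch, not something the deployment requires: `wait_for_stack` is a hypothetical helper, and it assumes the `stackrc` credentials are loaded and that `openstack stack show` exposes a `stack_status` column through the `-f value -c` output options.

```python
import subprocess
import time


def stack_status(name="overcloud"):
    """Return the current Heat stack status as a plain string.

    Assumes the undercloud credentials (stackrc) are already sourced.
    """
    result = subprocess.run(
        ["openstack", "stack", "show", name,
         "-f", "value", "-c", "stack_status"],
        check=True, capture_output=True, text=True,
    )
    return result.stdout.strip()


def wait_for_stack(get_status=stack_status, interval=60, max_polls=120,
                   sleep=time.sleep):
    """Poll until the status stops ending in _IN_PROGRESS, then return it."""
    for _ in range(max_polls):
        status = get_status()
        if not status.endswith("_IN_PROGRESS"):
            return status  # e.g. CREATE_COMPLETE or CREATE_FAILED
        sleep(interval)
    raise TimeoutError(f"stack still in progress after {max_polls} polls")


if __name__ == "__main__":
    print("final stack status:", wait_for_stack())
```

Passing `get_status` and `sleep` as parameters keeps the loop testable without an undercloud; the defaults call the real CLI.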

Step 2: Compute nodes created

(undercloud) [stack@e26-director ~]$ openstack baremetal node list

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

| UUID | Name | Instance UUID | Power State | Provisioning State | Maintenance |

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

| e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | None | None | power off | available | False |

| f90e0f2f-121d-4759-8a6d-a4bf69cab769 | None | None | power off | available | False |

| 45c912d0-2dbd-425a-95bc-0bcb7aa104a4 | None | None | power off | available | False |

| a57ef390-3edc-4449-8116-e2fbda6b6d05 | None | 503680bd-a4bb-46f6-83e1-138000c034f9 | power off | available | False |

| e1df9b96-5e3a-4e13-9972-2b62a12e7cb7 | None | 08763ed9-6cf8-4440-9a44-96209a847a7e | power off | available | False |

| e07b0890-feb5-4082-b635-185abaf5da85 | None | 04c1305c-eef2-4abe-a7b9-073c50c059ab | power off | available | False |

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

(undercloud) [stack@e26-director ~]$ openstack server list

+--------------------------------------+-----------+--------+----------+----------------+---------+

| ID | Name | Status | Networks | Image | Flavor |

+--------------------------------------+-----------+--------+----------+----------------+---------+

| 08763ed9-6cf8-4440-9a44-96209a847a7e | compute-0 | BUILD | | overcloud-full | compute |

| 503680bd-a4bb-46f6-83e1-138000c034f9 | compute-1 | BUILD | | overcloud-full | compute |

| 04c1305c-eef2-4abe-a7b9-073c50c059ab | compute-2 | BUILD | | overcloud-full | compute |

+--------------------------------------+-----------+--------+----------+----------------+---------+

Step 3: Boot of the compute nodes, creation of the controllers

The compute nodes boot over PXE, and the overcloud image is written to their disks over iSCSI.

(undercloud) [stack@e26-director ~]$ openstack baremetal node list

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

| UUID | Name | Instance UUID | Power State | Provisioning State | Maintenance |

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

| e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | None | 45290fae-5ca4-4e27-9444-f63fc77247ed | power off | available | False |

| f90e0f2f-121d-4759-8a6d-a4bf69cab769 | None | 9217c2f0-df92-4fe7-8cf0-bf280d1d9fa4 | power off | available | False |

| 45c912d0-2dbd-425a-95bc-0bcb7aa104a4 | None | None | power off | available | False |

| a57ef390-3edc-4449-8116-e2fbda6b6d05 | None | 503680bd-a4bb-46f6-83e1-138000c034f9 | power on | wait call-back | False |

| e1df9b96-5e3a-4e13-9972-2b62a12e7cb7 | None | 08763ed9-6cf8-4440-9a44-96209a847a7e | power on | wait call-back | False |

| e07b0890-feb5-4082-b635-185abaf5da85 | None | 04c1305c-eef2-4abe-a7b9-073c50c059ab | power on | wait call-back | False |

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

(undercloud) [stack@e26-director ~]$ openstack server list

+--------------------------------------+--------------+--------+----------+----------------+---------+

| ID | Name | Status | Networks | Image | Flavor |

+--------------------------------------+--------------+--------+----------+----------------+---------+

| 8030b792-1aa7-43ce-bcec-0f39a3943371 | controller-0 | BUILD | | overcloud-full | control |

| 9217c2f0-df92-4fe7-8cf0-bf280d1d9fa4 | controller-1 | BUILD | | overcloud-full | control |

| 45290fae-5ca4-4e27-9444-f63fc77247ed | controller-2 | BUILD | | overcloud-full | control |

| 08763ed9-6cf8-4440-9a44-96209a847a7e | compute-0 | BUILD | | overcloud-full | compute |

| 503680bd-a4bb-46f6-83e1-138000c034f9 | compute-1 | BUILD | | overcloud-full | compute |

| 04c1305c-eef2-4abe-a7b9-073c50c059ab | compute-2 | BUILD | | overcloud-full | compute |

+--------------------------------------+--------------+--------+----------+----------------+---------+
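The two listings are linked by the Instance UUID column of the node list, which matches the ID column of the server list. The sketch below joins them to show which bare metal node carries which overcloud server. It parses the ASCII tables as pasted above; `parse_cli_table` and `servers_by_node` are hypothetical helpers written for this illustration.

```python
def parse_cli_table(text):
    """Turn an ASCII table printed by the openstack CLI into a list of dicts."""
    rows = []
    for line in text.splitlines():
        line = line.strip()
        # Header and data rows start and end with '|'; borders start with '+'.
        if line.startswith("|") and line.endswith("|"):
            rows.append([cell.strip() for cell in line[1:-1].split("|")])
    if not rows:
        return []
    header, *body = rows
    return [dict(zip(header, row)) for row in body]


def servers_by_node(nodes_text, servers_text):
    """Map each node UUID to the name of the server placed on it, or None."""
    names = {s["ID"]: s["Name"] for s in parse_cli_table(servers_text)}
    return {n["UUID"]: names.get(n["Instance UUID"])
            for n in parse_cli_table(nodes_text)}


# Rows copied from the Step 3 listings above (table borders omitted).
NODES = """
| UUID | Name | Instance UUID | Power State | Provisioning State | Maintenance |
| e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | None | 45290fae-5ca4-4e27-9444-f63fc77247ed | power off | available | False |
| f90e0f2f-121d-4759-8a6d-a4bf69cab769 | None | 9217c2f0-df92-4fe7-8cf0-bf280d1d9fa4 | power off | available | False |
| 45c912d0-2dbd-425a-95bc-0bcb7aa104a4 | None | None | power off | available | False |
| a57ef390-3edc-4449-8116-e2fbda6b6d05 | None | 503680bd-a4bb-46f6-83e1-138000c034f9 | power on | wait call-back | False |
| e1df9b96-5e3a-4e13-9972-2b62a12e7cb7 | None | 08763ed9-6cf8-4440-9a44-96209a847a7e | power on | wait call-back | False |
| e07b0890-feb5-4082-b635-185abaf5da85 | None | 04c1305c-eef2-4abe-a7b9-073c50c059ab | power on | wait call-back | False |
"""

SERVERS = """
| ID | Name | Status | Networks | Image | Flavor |
| 8030b792-1aa7-43ce-bcec-0f39a3943371 | controller-0 | BUILD | | overcloud-full | control |
| 9217c2f0-df92-4fe7-8cf0-bf280d1d9fa4 | controller-1 | BUILD | | overcloud-full | control |
| 45290fae-5ca4-4e27-9444-f63fc77247ed | controller-2 | BUILD | | overcloud-full | control |
| 08763ed9-6cf8-4440-9a44-96209a847a7e | compute-0 | BUILD | | overcloud-full | compute |
| 503680bd-a4bb-46f6-83e1-138000c034f9 | compute-1 | BUILD | | overcloud-full | compute |
| 04c1305c-eef2-4abe-a7b9-073c50c059ab | compute-2 | BUILD | | overcloud-full | compute |
"""

if __name__ == "__main__":
    for uuid, server in servers_by_node(NODES, SERVERS).items():
        print(uuid, "->", server or "(not assigned yet)")
```

At this point one node has no instance yet, which matches the listing: controller-0 exists as a server but has not been placed on a node. On a live undercloud, the client's `-f json` output option would avoid parsing text tables altogether.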

Step 4: Boot of the controllers

(undercloud) [stack@e26-director ~]$ openstack baremetal node list

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

| UUID | Name | Instance UUID | Power State | Provisioning State | Maintenance |

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

| e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | None | 45290fae-5ca4-4e27-9444-f63fc77247ed | power on | wait call-back | False |

| f90e0f2f-121d-4759-8a6d-a4bf69cab769 | None | 9217c2f0-df92-4fe7-8cf0-bf280d1d9fa4 | power on | wait call-back | False |

| 45c912d0-2dbd-425a-95bc-0bcb7aa104a4 | None | 8030b792-1aa7-43ce-bcec-0f39a3943371 | power on | wait call-back | False |

| a57ef390-3edc-4449-8116-e2fbda6b6d05 | None | 503680bd-a4bb-46f6-83e1-138000c034f9 | power on | wait call-back | False |

| e1df9b96-5e3a-4e13-9972-2b62a12e7cb7 | None | 08763ed9-6cf8-4440-9a44-96209a847a7e | power on | wait call-back | False |

| e07b0890-feb5-4082-b635-185abaf5da85 | None | 04c1305c-eef2-4abe-a7b9-073c50c059ab | power on | wait call-back | False |

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

Step 5: Compute nodes moving to ‘deploying’ provisioning state

(undercloud) [stack@e26-director ~]$ openstack baremetal node list

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

| UUID | Name | Instance UUID | Power State | Provisioning State | Maintenance |

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

| e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | None | 45290fae-5ca4-4e27-9444-f63fc77247ed | power on | wait call-back | False |

| f90e0f2f-121d-4759-8a6d-a4bf69cab769 | None | 9217c2f0-df92-4fe7-8cf0-bf280d1d9fa4 | power on | wait call-back | False |

| 45c912d0-2dbd-425a-95bc-0bcb7aa104a4 | None | 8030b792-1aa7-43ce-bcec-0f39a3943371 | power on | wait call-back | False |

| a57ef390-3edc-4449-8116-e2fbda6b6d05 | None | 503680bd-a4bb-46f6-83e1-138000c034f9 | power on | deploying | False |

| e1df9b96-5e3a-4e13-9972-2b62a12e7cb7 | None | 08763ed9-6cf8-4440-9a44-96209a847a7e | power on | deploying | False |

| e07b0890-feb5-4082-b635-185abaf5da85 | None | 04c1305c-eef2-4abe-a7b9-073c50c059ab | power on | deploying | False |

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+
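A per-state count is easier to follow than six rows when watching nodes progress. The sketch below tallies provisioning states from JSON input. The `SAMPLE` data is written by hand from the Step 5 table and is only assumed to resemble what `openstack baremetal node list -f json` would return (reduced to two columns); treating `active` as the final state is also an assumption based on the usual Ironic state flow.

```python
import json
from collections import Counter

# Hand-written sample built from the Step 5 table above, shaped like the
# JSON output of the node list command (assumption, two columns only).
SAMPLE = json.dumps([
    {"UUID": "e4b01b91-81a1-4df0-9620-fbe5cfdf2516",
     "Provisioning State": "wait call-back"},
    {"UUID": "f90e0f2f-121d-4759-8a6d-a4bf69cab769",
     "Provisioning State": "wait call-back"},
    {"UUID": "45c912d0-2dbd-425a-95bc-0bcb7aa104a4",
     "Provisioning State": "wait call-back"},
    {"UUID": "a57ef390-3edc-4449-8116-e2fbda6b6d05",
     "Provisioning State": "deploying"},
    {"UUID": "e1df9b96-5e3a-4e13-9972-2b62a12e7cb7",
     "Provisioning State": "deploying"},
    {"UUID": "e07b0890-feb5-4082-b635-185abaf5da85",
     "Provisioning State": "deploying"},
])


def provisioning_summary(raw_json):
    """Count how many nodes are in each provisioning state."""
    return Counter(node["Provisioning State"] for node in json.loads(raw_json))


def pending_nodes(raw_json, done_state="active"):
    """List the UUIDs of nodes that have not reached the final state yet."""
    return [node["UUID"] for node in json.loads(raw_json)
            if node["Provisioning State"] != done_state]


if __name__ == "__main__":
    for state, count in provisioning_summary(SAMPLE).items():
        print(f"{count} node(s) in state '{state}'")
    print("still pending:", len(pending_nodes(SAMPLE)))
```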

Step 6: All nodes moving to the ‘deploying’ provisioning state

(undercloud) [stack@e26-director ~]$ openstack baremetal node list

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

| UUID | Name | Instance UUID | Power State | Provisioning State | Maintenance |

+--------------------------------------+------+--------------------------------------+-------------+--------------------+-------------+

| e4b01b91-81a1-4df0-9620-fbe5cfdf2516 | None | 45290fae-5ca4-4e27-9444-f63fc77247ed | power on | deploying | False |